Why AI Tools Fail – And Where the Real Lever Is

The bottleneck isn't the software. It's the knowledge that only exists in people's heads.

Ten people in a meeting, all talking about the same project, and after an hour it turns out: three different versions of the requirements were in the room, but on no screen. That was always expensive. In a world where AI agents depend on exactly those requirements, it gets really expensive. Because the agent can't ask follow-up questions. It works with what's written down. And what's written down at most organizations is: surprisingly little.

You know this meeting.

Strategy session, ten people, the quarterly review. The head of sales says: "We need to rework the special pricing for our key accounts." The CFO asks: "What special pricing?" Marketing says: "I thought we standardized that in Q2." And at some point someone says the sentence that summarizes every third strategy meeting: "Wait – are we even talking about the same thing?"

No. They're not. And the next half hour isn't spent on the decision, but on getting everyone to the same baseline. Who agreed what with which client. What's in the CRM. What's in Muller's head.

And no: this isn't a communication problem. It's a context problem.

Tobi Lutke – yes, that Tobi Lutke, the one who built Shopify, $75 billion market cap – put it precisely on the Acquired podcast. What most organizations call "politics" is really something more mundane: buried disagreements about assumptions that nobody made explicit. Because humans, as Lutke puts it, are "sloppy communicators who rely on shared context that doesn't actually exist."

That sounds like a Silicon Valley CEO philosophizing about meetings.

It's not.

It describes exactly what happens in companies every day. And it describes why most AI investments don't deliver what was promised. Not because the tools are bad. But because the context those tools need doesn't exist. Not for the machine. And, if we're honest, not really for the humans either.

In the last essay, I described how this plays out externally. No API for Germany's largest used car marketplace. "Price on request" as a business model. Industries whose margins are built on information asymmetry. The point I didn't make then: The same pattern exists inside every organization. Not as a deliberate strategy. But as an operating system nobody questions.

The thesis: The bottleneck isn't the AI tool. The bottleneck is that institutional knowledge isn't machine-readable. If you don't structure your context, you can buy all the tools you want – the machine is still flying blind.

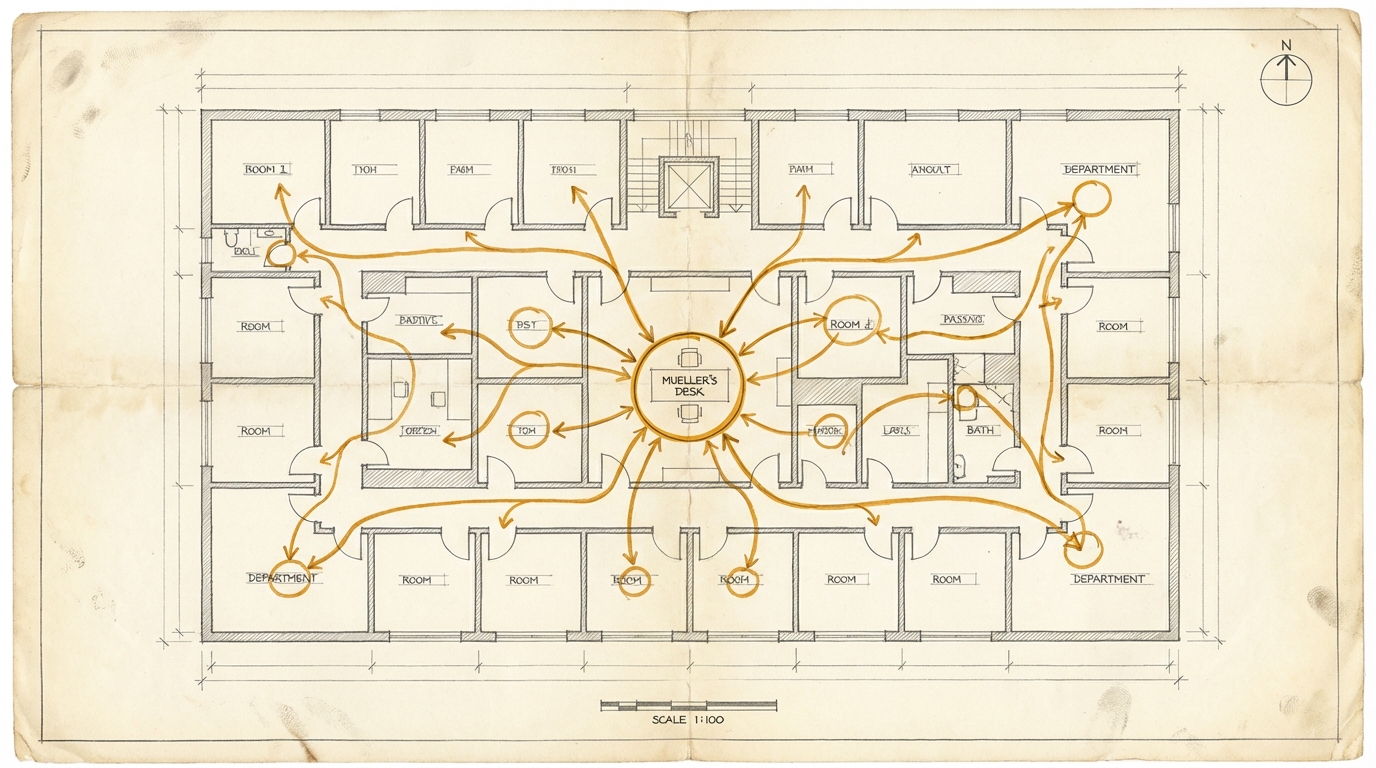

Ask Muller

Every company has a Muller. Sometimes the name is different, but the role is the same: the person whose head contains the knowledge that exists nowhere else.

Take quote calculation. In most companies, there's a price list. It's official and lives in the ERP. And then there's the real pricing logic. Client A gets a 12% discount, because that's how it's always been. Client B gets special terms on Product C, because Muller negotiated that with their procurement lead in 2019 and the deal would have fallen through otherwise. Rush orders carry a surcharge, but not all of them, and how much depends on who's asking and what capacity looks like.

None of this is in any system. It's in Muller's head.

And as long as Muller is there, it works. Has worked for decades. Everyone knows who to call. The process is invisible, but it runs.

Then Muller goes on vacation.

And suddenly sales sends standard prices to Client A. Client A calls and asks what's going on. Or sales calls Muller in Mallorca because nobody else knows the special terms. Or – the most common case – nothing happens at all, and the order sits for two weeks.

Every business owner knows this. And everyone knows it's a problem. But it "sort of works."

Now bring an AI agent into the picture. It's supposed to prepare quotes, prioritize client requests, take load off the sales team. Good use case. Budget approved. Tool purchased.

Result: "Somehow doesn't deliver."

Of course it doesn't. The agent has no access to Muller's head. It has the price list in the ERP. It has no idea about the special terms, the client history, the unwritten rules. It gets the same thing a new hire gets on day one: the official version. And the official version is, at best, half the truth.

The Pattern Behind It

Quote calculation is just the most visible example. The pattern is everywhere.

Brand guidelines. Sitting in a PDF from 2021. The current interpretation lives with the colleague from the brand team. When an AI agent is supposed to build a design that "looks like your brand," it gets the PDF. What it doesn't get: the twenty implicit decisions that sit between the PDF and the actual brand expression.

Process knowledge. Confluence pages from 2019. "We do it differently now, but nobody updated the docs." I have yet to see a single company whose process documentation reflects the current state. Not one.

Product catalogs. SAP exports that a human needs to interpret. Technical data sheets in formats no agent can consume. Configuration logic that lives in the heads of product managers.

Decision logic. "At what amount do we need the CEO's sign-off?" Officially: 50,000 euros. Unofficially: depends on who the client is, how the quarter is going, and whether the CEO is in the mood for a discussion.

All of this is institutional context. And it's invisible to machines.

What Lutke describes for meetings applies to the entire knowledge infrastructure of a company. Your internal knowledge works like "price on request." Navigable for humans. Non-existent for machines.

Context Is 99.98%

Nate B. Jones has broken down what most people call "prompting" into four disciplines. The distinction is useful because it shows where the actual lever is.

Level 1: Prompt Craft. The instruction itself. "Write me a quote for Client X." This is the part everyone talks about. It's also the part that matters least.

Level 2: Context Engineering. The entire information environment in which the instruction operates. System prompts, documents, data sources, tool definitions, memory. Everything the model "sees" before it responds.

Level 3: Intent Engineering. Goals, values, decision hierarchies – encoded in infrastructure, not in individual prompts.

Level 4: Specification Engineering. Documents that autonomous agents can work against over extended periods. Not instructions for a task, but guardrails for a process.

The numbers are sobering. A prompt might be 200 tokens. Some current models have context windows of a million tokens. Fill that window, and the prompt is 0.02% of what the model sees. The other 99.98% is context engineering.
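The arithmetic behind those two percentages, assuming a 200-token prompt and a context window filled to its full one million tokens:

```latex
\frac{200}{1\,000\,000} = 0.0002 = 0.02\%, \qquad 1 - 0.0002 = 0.9998 = 99.98\%
```

With a window that is only partly filled, the prompt's share is larger, but the point stands: the instruction is a small fraction of what the model works with.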

Most companies optimize for Level 1. They send their people to prompt workshops. They buy prompt templates. They debate whether you should say "please" or not.

The lever is at Level 2. And Level 2 is not a prompt problem. Level 2 is an organizational problem. It's about structuring institutional knowledge so an AI agent can work with it. That's not a technology question. It's a question of how well an organization knows what it knows – and where it keeps it.

An Example You Can See

Design systems are the most tangible example of this pattern, because you can see the result immediately.

A truly defined design system is ultimately a set of rules and combinatorics. Tokens, scales, spacing logic, component hierarchies. Declarative by nature. Expressible in code, in JSON, in a spec document. The visual representation – the Figma file, the PDF, the style guide – is one view of the system. Not the system itself.
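To make "declarative by nature" concrete, here is a minimal Python sketch of a design system expressed as data rather than as a picture. Every token name and value is hypothetical; real teams often keep tokens in JSON files, which is why the sketch round-trips them through the standard `json` module.

```python
import json

# Hypothetical design tokens: the rules of the system, stored as plain data.
TOKENS = {
    "color": {"brand-primary": "#0A3D62", "text-default": "#1E1E1E"},
    "spacing": {"base": 4, "scale": [1, 2, 3, 4, 6, 8]},  # multiples of base, in px
    "type": {"body": {"font": "Inter", "size": 16}, "h1": {"font": "Inter", "size": 32}},
}


def spacing(step: int) -> int:
    """Resolve a spacing step (an index into the scale) to pixels."""
    s = TOKENS["spacing"]
    return s["base"] * s["scale"][step]


def is_on_brand(color: str) -> bool:
    """A color counts as on-brand only if it matches one of the color tokens."""
    return color.upper() in {v.upper() for v in TOKENS["color"].values()}


# Because the tokens are plain data, they survive a JSON round trip unchanged:
# the form a build tool or an AI agent can read without a human translating.
exported = json.dumps(TOKENS, indent=2)
assert json.loads(exported) == TOKENS

print(spacing(3))              # 4 * 4 = 16
print(is_on_brand("#0a3d62"))  # True
print(is_on_brand("#FF0000"))  # False
```

The Figma file would render these same values; the dictionary is the system, the file is one view of it.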

Teams whose design system lives in code point an AI agent at the repository and get a working, on-brand prototype in hours.

Teams whose design knowledge lives only in Figma files, PDFs, or the designer's head spend the first 40% of every project reconstructing the brand. Or the last 70%, if they do it after the AI has produced the prototype.

Same pattern. Structured context accelerates. Unstructured context creates friction. And that friction gets more expensive with every AI tool, not cheaper.

Honesty Check: Why This Is Harder Than It Sounds

Three honest caveats. And one that rarely gets named.

Not everything can be made explicit. In Essay #2, I wrote about taste – the judgment that can't be captured in rules. When to make a concession to a client even though the numbers say no. When a supplier is cutting corners. When a quote is too cheap to be serious. That's by definition what resists formalization. The question isn't "can you structure everything?" It's: Which 80% can you structure that are currently at 0%? So that human judgment can focus on the 20% that truly need it.

The investment is real. Structuring institutional knowledge is work. Not a weekend project, not an AI one-shot. It requires decisions: What's canonical? Who maintains it? In what format? And it's unclear whether AI tools will soon handle this structuring themselves – which would partially devalue the investment. Not a reason to do nothing. But a reason to start small.

The timing is uncertain. Context engineering as a discipline and term is six months old. The infrastructure is changing fast. What's expensive today could be a commodity service in a year.

And then there's the Muller question.

If you make Muller's knowledge machine-readable – what do you need Muller for?

That's not a cynical question. It's the question Muller is asking himself. And it's legitimate.

Imagine you tell Muller: "We want to document your knowledge about special terms so an AI agent can work with it." What Muller hears: "We want to extract your knowledge, and once we have it, we won't need you anymore."

That's human. And understandable. And it's the reason most knowledge management initiatives fail. Not because of the technology. Because of trust.

The flaw in that reasoning is the assumption that all of Muller's knowledge is of the same kind. What can be made machine-readable are the 80% of routine decisions Muller makes on autopilot. The 12% discount rule for Client A. Knowing which supplier is reliable for rush orders. The approval logic for special requests. That's valuable, but it's codifiable.
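What "codifiable" might look like for the quote example: a minimal Python sketch that encodes the routine rules as data and hands everything else back to a human. The 12% discount for Client A, Client B's special terms on Product C, the rush-order surcharge that depends on circumstances, and the official 50,000-euro sign-off threshold come from this essay. The special-terms rate, the "Product X" name, the rule format, and all function and field names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical rule set: Muller's routine knowledge, written down as data.
CLIENT_DISCOUNTS = {"Client A": 0.12}              # "because that's how it's always been"
SPECIAL_TERMS = {("Client B", "Product C"): 0.08}  # rate is hypothetical; negotiated in 2019
CEO_APPROVAL_THRESHOLD_EUR = 50_000                # the official threshold


@dataclass
class Quote:
    client: str
    product: str
    list_price_eur: float
    rush: bool = False


@dataclass
class Result:
    price_eur: Optional[float]  # None means: no price without a human decision
    needs_ceo_signoff: bool = False
    escalate_to_human: list[str] = field(default_factory=list)


def price_quote(q: Quote) -> Result:
    """Apply the codified routine rules; flag anything that needs judgment."""
    reasons = []
    # Assumption: special terms replace (rather than stack with) a general client discount.
    discount = SPECIAL_TERMS.get((q.client, q.product), CLIENT_DISCOUNTS.get(q.client, 0.0))
    price = round(q.list_price_eur * (1 - discount), 2)
    if q.rush:
        # The surcharge depends on who is asking and on capacity: judgment, not a rule.
        reasons.append("rush order: surcharge needs a human decision")
    return Result(
        price_eur=None if reasons else price,
        needs_ceo_signoff=price >= CEO_APPROVAL_THRESHOLD_EUR,
        escalate_to_human=reasons,
    )


print(price_quote(Quote("Client A", "Product X", 10_000)))
```

The design choice that matters is the escalation list. The sketch does not guess at the rush surcharge; it returns the case to a person. Routine in the system, exceptions with Muller.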

What is NOT machine-readable: Muller's judgment on the exceptions. When to raise the 12% to 15% because the client is going through a difficult transformation and you want to keep them. When a new supplier with good numbers still isn't trustworthy. When to ignore the approval threshold because the opportunity is once-in-a-lifetime. That's taste. That's what comes from experience and can't be pressed into rules.

As long as Muller spends his time answering routine inquiries that a documented process could handle, the company is wasting its most valuable person on his cheapest work.

Muller doesn't get replaced. Muller gets freed up for what you're actually paying him for: the hard decisions. The exceptions. The judgment.

But this has to be actively managed. No business owner can go to Muller and say "we're making your knowledge machine-readable" without explaining what that means for Muller. This is a leadership issue, not an IT project. Muller needs to understand: his value isn't in the routine. His value is in the judgment. And the company needs him for the judgment, not the routine.

Miss that, and you get no cooperation. Get it right, and you get a Muller who is more valuable than before.

Where Do You Stand?

An honest self-assessment in four levels.

| Level | Description | Typical State |
|---|---|---|
| 0 – Tribal Knowledge | "Ask Muller" | Knowledge in heads, not documented |
| 1 – Documented | Confluence, PDFs, slide decks | Exists, but for humans, not for machines |
| 2 – Structured | Design tokens, JSON, APIs, CRM fields | Machine-readable, but not integrated |
| 3 – Integrated | Context engineering: system prompts, tool connections, structured knowledge bases | AI agents can work autonomously |

Most organizations are at Level 0 or 1, but believe they're at Level 2 because they have Confluence.

The difference between Level 1 and Level 2 sounds small. It isn't. Level 1 means: a human can find and interpret the information. Level 2 means: a machine can consume it without a human translating. That's the difference between "price on request" and an API.

And the difference between Level 2 and Level 3 is the step almost nobody has taken: integrating structured knowledge into the AI infrastructure so agents can use it in their workflow. Not as a reference library, but as operational context.
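A minimal Python sketch of that last step, under stated assumptions: structured records (a hypothetical in-memory dictionary standing in for CRM fields) are selected per request and rendered into the text an agent sees before it answers. No particular agent framework or model API is assumed; the function only builds the context string you would pass along. The record contents, including the 8% rate for Client B, are hypothetical.

```python
import json

# Hypothetical Level 2 knowledge: structured, machine-readable client records.
CLIENT_RECORDS = {
    "Client A": {"standard_discount": 0.12, "note": "long-standing discount"},
    "Client B": {"special_terms": {"Product C": 0.08}, "negotiated": 2019},
}

SYSTEM_RULES = (
    "You prepare quotes. Apply only the terms listed in CONTEXT. "
    "If a request needs a term that is not listed, escalate to a human."
)


def build_context(request: str, client: str) -> str:
    """Level 3: pull the relevant record into the agent's working context."""
    record = CLIENT_RECORDS.get(client)
    facts = json.dumps(record, sort_keys=True) if record else "NO RECORD: escalate"
    return f"{SYSTEM_RULES}\n\nCONTEXT ({client}): {facts}\n\nREQUEST: {request}"


print(build_context("Quote 200 units of Product C", "Client B"))
```

The difference from a reference library: the agent does not have to go looking. The relevant terms arrive with every request, and a missing record produces an explicit instruction to escalate instead of a silent fallback to list prices.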

What This Means for Your Company

Three questions that will do more than another AI workshop.

First: Where is your Muller?

Take a process that everyone in the company knows depends on one person. Quote calculation, complaint handling, supplier evaluation – doesn't matter which. Ask: What happens if that person is unavailable for four weeks starting tomorrow? What knowledge is in that head that exists nowhere else? What part is routine (codifiable) and what is judgment (human)? That's your context gap. And that's where an AI agent would have the biggest lever – if it had the context.

Second: The Lutke question for every meeting.

Take your last internal briefing or strategy document. Ask: How much context is missing that "everyone knows" but a new hire wouldn't? Or an AI agent? If the answer is "quite a lot, actually," you don't have an AI problem. You have a context problem that AI is making visible.

The discipline of framing problems with enough context that they're solvable without follow-up questions didn't just make Lutke a better AI user. It made him a better CEO. Because the same clarity that makes an AI agent work also improves human collaboration.

Third: Start small. One process. Not the whole organization.

Take the process from question one. Document the knowledge so an AI agent can work with it. Not as a mega-project, not as a "knowledge management initiative," not as a slide deck for the board. But as a test: Can we structure Muller's routine knowledge so an agent can handle the standard requests? If yes, you have a proof of concept. If no, you know what the real problem is. And it's not the tool.

The thinking tools for this essay help you map your own context landscape. The Muller Finder, the Context Gap Stress Test, and the Lutke Question are three conversation formats you can use with the AI of your choice to explore your own situation.

What companies call "politics" is often bad context engineering. What they call "Figma-code integration" is often a missing source of truth. And what they call "AI strategy" is often tool shopping without having built the context those tools need.

The machines won't let us be lazy anymore. But what they enforce – explicitness, structure, documented decisions – has always been what separates good organizations from mediocre ones.

AI doesn't show you what you don't have. It shows you what you haven't written down. And that's usually more than anyone wants to admit.
