Who's Writing the Spec?

Why the bottleneck of knowledge work isn't production but the ability to say what you want

Practical test for this essay

Three templates for a conversation with an AI: clarify the goal, check contradictions, test whether the work can be delegated. You do not get a finished strategy or a tool recommendation.

Three engineers at StrongDM deliver what used to take fifteen. Not because they code better, but because they're better at specifying what should be built. While German companies buy AI licenses and launch pilot projects, the real bottleneck is quietly shifting one level up: from production to the ability to formulate the right task. Most are currently investing at the wrong end.

In July 2025, Jason Lemkin ran an experiment. Lemkin is the founder of SaaStr and has been investing in software for decades. Not a beginner.

For twelve days, he let Replit's AI agent work on his production database. The result: the agent deleted all records -- 1,206 executives, 1,196 companies. It generated 4,000 fictitious users with fabricated data. It gave itself 95 out of 100 on a damage scale and falsely claimed a rollback was impossible. When Lemkin explicitly -- in capital letters -- ordered a code freeze, the agent ignored the instruction and kept going.

Replit CEO Amjad Masad apologized publicly. Called it "unacceptable."

This isn't a tech horror story. It makes visible a problem that remains invisible in most organizations.

Lemkin had the tool. He had the technical competence. What he didn't have was a specification that sets clear boundaries for an autonomous agent. Not because he's stupid. But because telling a system what to do and what not to do is fundamentally different from doing it yourself.
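What such a boundary could look like, as a minimal sketch: the `AgentPolicy` class, the keyword list, and the `is_allowed` function are hypothetical and do not describe Replit's actual mechanism. The only point is that "code freeze" and "nothing destructive on production" become explicit, checkable rules instead of instructions typed into a chat.

```python
from dataclasses import dataclass

# Hypothetical list of statement types treated as destructive.
# A real policy would need a proper SQL parser, not a keyword check.
DESTRUCTIVE_KEYWORDS = ("DROP", "DELETE", "TRUNCATE", "ALTER", "UPDATE")


@dataclass(frozen=True)
class AgentPolicy:
    """Explicit boundaries for an agent (illustrative, not a real product API)."""
    code_freeze: bool   # True: nothing except read-only statements may run
    environment: str    # e.g. "production" or "staging"


def is_allowed(policy: AgentPolicy, sql: str) -> tuple[bool, str]:
    """Return (allowed, reason) for a SQL statement the agent wants to run."""
    words = sql.strip().split()
    first_word = words[0].upper() if words else ""
    is_read_only = first_word == "SELECT"

    if policy.code_freeze and not is_read_only:
        return False, "code freeze: only read-only statements are permitted"
    if policy.environment == "production" and first_word in DESTRUCTIVE_KEYWORDS:
        return False, f"{first_word} is blocked on production"
    return True, "ok"


frozen_production = AgentPolicy(code_freeze=True, environment="production")
print(is_allowed(frozen_production, "DELETE FROM executives"))
```

Under a policy like this, a delete during a freeze is rejected before it reaches the database, regardless of what the agent decides.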

Anyone who's ever received an internal brief knows the feeling. Vague goals, contradictory requirements, missing context. Until now, that was annoying but not existential. Human teams ask questions, interpret, iterate. They fix bad briefs in the process, often without anyone noticing.

With AI agents, that changes. Poor specifications don't just produce bad results. They produce results that look convincing and are subtly wrong. The deck looks professional. The analysis has clean charts. But the wrong question was answered.

The thesis: The bottleneck of knowledge work is shifting from production to specification. The critical question is no longer "How fast can we produce?" -- but: "Who articulates what should be built? And who judges whether it's good enough?"

Three Competencies That Are Suddenly Scarce

In fifteen years of agency work, I've seen hundreds of briefs that led to nothing. Not because the teams were bad -- but because the briefs were bad. What's changing now isn't the quality of briefs. It's the consequences.

Three competencies are suddenly becoming the bottleneck in a world of cheap artifact production. I call them Taste, Specification, and Evaluation.

Taste -- When You Know It's Wrong Before You Can Say Why

Taste is the ability to recognize whether something is good without being able to fully explain it. The creative director who looks at a layout and says: "That's off." He's right. He can't immediately articulate why. But he's right, and three iterations later everyone in the room knows what he meant.

This isn't gut feeling. It's judgment, grown through thousands of decisions about what works in which market, for which audience.

Taste recognizes and judges. It can't produce. In my previous essay, I described why taste becomes the most valuable filter in a world of cheap artifacts: because the volume of possible output grows exponentially while the ability to distinguish signal from noise remains constant.

How far this shift has already gone shows in the numbers. Anthropic, the company behind Claude, says that 70 to 90 percent of its code is now written by AI. Boris Cherny, Head of Claude Code, says he hasn't written code himself in over two months. Sounds like the end of programming.

It's not. A LessWrong analysis dissected this: 90 percent of the code is not 90 percent of the engineering effort. When more of the code you produce is the automatable kind, the percentage goes up. The actual engineering work stays the same: the spec that tells the model what to build, the evaluation that checks whether it built the right thing, the architecture decisions, the priorities. That's the part that doesn't scale. That's the part that stays human.

But "stays human" isn't the same as "can't be externalized." Taste lives in people's heads, but you can partially extract it. Shrivu Shankar describes three methods. A/B Interviews: place two results side by side, ask the expert "Which is better, and why?", and distill the reasoning. Essence Documents: extract patterns from dozens of evaluated examples that approximate the judgment. Ghost Writing: the senior writes three examples, the AI learns the style, and new outputs are measured against the senior's judgment.

This doesn't replace the expert. But it leverages their knowledge. Taste remains human in origin. It just doesn't have to stay locked in one person's head.

Specification -- The Art of Formulating the Right Task

Spec is something different. Spec creates an assignment -- one that can be worked on without prescribing the solution.

Ryan Singer described this in his book Shape Up: Shaping is drawing with a thick marker. Enough contour to set a direction. Enough openness to enable creativity. Drawing with a ballpoint pen means making every decision in advance. Not drawing at all means chaos.

Most briefs fail right here. They're either too vague ("Do something with AI for us") or too detailed ("Build exactly these features in exactly this order"). Neither works. One gives no footing. The other leaves no room.

This sounds trivial. It isn't. I've seen maybe two dozen truly good briefs. The rest were -- putting it politely -- open to interpretation. The difference: when a human creative team gets a bad brief, they call the client and ask. When an AI agent gets a bad brief, it delivers exactly what's written. Including all gaps, all contradictions, all wrong assumptions. Just wrapped in professionally formatted output that makes the gaps invisible.

Spec has a prerequisite that's often overlooked: domain knowledge. You can only specify what you understand. A strategy lead who doesn't know how their sales process actually works -- not on the PowerPoint slide, but on the phone with the customer -- can't write a usable spec for an AI agent that's supposed to support that process. The spec would be technically phrased but substantively empty.

I see this every week. We get a brief that describes in three pages what the project should deliver. Persona descriptions, KPIs, brand tonality. It's all there. And yet everyone in the room knows the real assignment will be clarified in the first phone call: "What do you actually mean? What's the context you didn't write down? Where are the political landmines?" That's the spec behind the spec. And no AI agent gets to see it.

And here's the real problem: many of the people who have the domain knowledge can't translate it into workable specs. And many who could write a spec don't have the domain knowledge. The bridge between the two is missing in most organizations. It never existed because it was never necessary -- human teams built it on the side, in the process, without noticing.

And even the human share of that bridge is shrinking, because the tools are learning to generate specs themselves. How fast -- more on that in the honesty test.

What remains is the first spec. The initial intention before the loop starts. "What do we actually want?" No tool can answer that. But everything after -- the refinement, the iteration, the detail work -- is increasingly machine-driven.

Evaluation -- When Taste Meets Output

Evaluation is the moment where result meets expectation.

Taste says: "Something feels wrong." Evaluation asks: Does the result meet the spec? Which constraints are violated? What consequences does the output have in the real world? Evaluation needs both -- the implicit judgment of taste and the explicit checking against criteria.

An example. An AI agent creates a competitive analysis for the executive board. The slides look professional. The numbers check out. Taste says: "Something's off." Evaluation identifies: the analysis compares the wrong competitors. The agent sorted by product similarity, not by customer overlap. Technically correct. Strategically useless.

Without taste, you don't notice the error. Without evaluation, you can't name it. And without both, a helpful AI analysis becomes a professionally packaged bad decision.

This is what bothers me about the current debate. Everyone's talking about prompt engineering. "How do I write the best prompt?" That's the wrong question. Prompts are syntax. Evaluation is semantics. It's not about giving the model the right words. It's about knowing whether the result is the right answer to the right question. That requires expertise, judgment, and -- often enough -- the courage to say: "This looks good, but it's wrong."

The Spiral

The three are connected. Not linearly, but as a spiral.

Taste provides a pre-judgment: "Something's off here." Spec translates it into a workable task: "Analyze competitors by customer overlap, not product category." AI produces new output. Evaluation checks it. The result becomes the new pre-judgment. The spiral keeps turning.
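The gap between "by product category" and "by customer overlap" can be made concrete. The sketch below assumes each company is described by a product category and a set of customer IDs; the data is invented, and Jaccard similarity is just one plausible way to measure overlap, not a method the essay prescribes.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Share of customers two companies have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_by_customer_overlap(own_customers: set[str], competitors: dict) -> list:
    """Sort competitors by shared customers, highest overlap first."""
    scored = [
        (name, jaccard(own_customers, data["customers"]))
        for name, data in competitors.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Invented example data: our own product sits in the "crm" category.
own = {"c1", "c2", "c3", "c4"}
competitors = {
    "SameCategoryCo": {"category": "crm", "customers": {"c9"}},
    "OtherCategoryCo": {"category": "billing", "customers": {"c1", "c2", "c3"}},
}

ranking = rank_by_customer_overlap(own, competitors)
print(ranking)
```

A ranking by category would put SameCategoryCo first. The overlap ranking puts OtherCategoryCo first, because it competes for the same customers. Which of the two is "correct" is exactly what the spec has to say.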

What AI changes: the production in the middle -- the step where actual output is created -- becomes nearly free. The spiral turns faster. Ten iterations per day instead of one per week. That sounds like efficiency. It is. But only under one condition: you need more taste and more spec than before, not less.

When the spiral spins faster but taste and spec can't keep up, exactly what I observe in companies happens: more output, same or worse quality. The Workslop study by Stanford and BetterUp measured what this costs: $186 per employee per month. Wasted on output that looks professional and says nothing.

Honesty Test: Where I Could Be Wrong

Three objections that need to be taken seriously.

First: The spec itself is becoming machine-generated. Kiro generates structured specs from vague requirements. Claude Code iterates on its own specification. Cursor increasingly writes its own assignments. If the tools learn to specify, the human share may shrink faster than I'm claiming here. What remains is the first spec, the initial intention before the loop starts. But how thick that layer really is -- nobody knows.

Second: Evaluation has a blind spot. The Stanford CodeX analysis from February 2026 shows: builder and inspector share the same blind spots. Goodhart's Law in action. When the same technology class produces the output and judges it, a systematic error emerges that human evaluation should theoretically catch -- but in practice often doesn't, because the speed of the spiral exceeds the available capacity for review.

Third: The 70/30 line is psychological, not rational. Nate B. Jones has quantified that people want to retain 70 percent of decisions and delegate 30 percent. That's not a rational optimum. It's a feeling. And feelings shift when the results are good. Anyone who said five years ago that developers would let machines write 90 percent of their code would have been laughed at.

Why the thesis still stands: all three objections concern the extent, not the direction. Whether the human layer is 60 percent or 20 -- it remains the bottleneck. And as long as companies invest in platforms instead of the competency to steer those platforms, the problem gets worse regardless of where the exact line falls.

The German Situation: Investment at the Wrong End

Why 23 Percent See No Use Case

According to Bitkom, 36 percent of German companies use AI -- doubled within a year. 47 percent are planning or discussing adoption. Only 17 percent say "not relevant," compared to 41 percent the year before. The momentum is real.

But one detail stands out: 23 percent of companies see no use cases for AI in their business.

No use cases. Not "the technology doesn't work." Not "it's too expensive." But: we don't know what to do with it.

That's the specification problem in a single number. You can only apply what you can specify.

The official barriers confirm the picture -- if you read them correctly. 53 percent cite lack of technical know-how, 53 percent cite legal uncertainty, 51 percent cite insufficient personnel. None of these companies say: "We lack the ability to specify what we actually need." But that's exactly what's hiding behind "we don't see any use cases."

Shadow AI as a Symptom

Something else is happening in parallel. According to a Bitkom survey from October 2025, 8 percent of companies report widespread private AI use in the workplace -- doubled since 2024. 17 percent report isolated cases. Only 26 percent provide official access.

This means: employees are already specifying and evaluating. They're doing it with ChatGPT, Claude, Gemini. On private accounts. Without governance, without quality control, without management knowing what ends up in client proposals and strategy papers.

The capability is there -- distributed, uncontrolled, unsystematic. What's missing is the organizational framework. No system that measures spec quality. No feedback loops that show whether AI-generated output actually answered the right question. No systematic analysis of what works and what doesn't.

Here's the irony: the companies that claim to see no use cases have employees who find them every day. Just not officially.

The Mittelstand Is Cutting -- at the Wrong End

The Horvath study from January 2026 is clear. Germany's Mittelstand invests 30 percent below the market average in AI. Average AI spending across all companies: 0.5 percent of revenue.

The reason: early use cases didn't deliver. Pilot projects that started promisingly failed to produce the expected results. The consequence: budgets are being cut.

Heiko Fink from Horvath puts it this way: "If the AI transformation isn't massively accelerated now, the technology gap will become an existential strategic risk."

My thesis: the use cases didn't deliver because the specification competency is missing. Not because the tools are bad. When someone gives "do something with AI" as a brief and the result isn't convincing, the problem isn't the AI. It's the spec. And cutting the next investment round because the spec was bad is like firing the architect because the brief was unusable.

Who's Leading the Way -- and What to Read Into It

The Big Players Automate Production -- and Miss the Steering

SAP, Siemens, Bosch -- Germany's large corporations are automating what can be automated. Unit tests, invoice processing, defect codes, documentation. That's the artifact layer becoming a commodity. The efficiency gains are real.

But almost no one is asking the strategic question: which human competencies become more valuable as a result? They invest in the platform that produces artifacts. Not in the competency that steers the platform.

StrongDM: When Spec Becomes the Control Layer

At the other end of the spectrum sits StrongDM. In July 2025, CTO Justin McCarthy and two engineers founded a "Software Factory" with a radical charter: "Code must not be written by humans." And, even more radically: "Code must not be reviewed by humans."

Three engineers. No junior developers. All output is produced by AI agents. What the humans do: specify and evaluate. They write specs. They define acceptance criteria. They build evaluation infrastructure.

The centerpiece is a "Digital Twin Universe" -- functional replicas of Okta, Jira, Slack, and Google Docs, against which thousands of test scenarios run per hour. Not manually written unit tests, but automatically generated scenarios. And instead of binary pass/fail tests, StrongDM uses "Satisfaction Scoring": How likely is it that a user would be satisfied with the observed behavior? That's a different kind of evaluation -- one that's closer to taste than to a checklist.
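To illustrate how a graded score differs from pass/fail, here is a minimal sketch. It is not StrongDM's implementation: it assumes that some judge has already assigned each scenario run a satisfaction estimate between 0.0 and 1.0, and the function names and the `floor` threshold are hypothetical.

```python
from statistics import mean


def binary_verdict(results: list[bool]) -> bool:
    """Classic test suite: a single failing scenario fails the whole run."""
    return all(results)


def satisfaction_summary(scores: list[float], floor: float = 0.5) -> dict:
    """Aggregate per-scenario satisfaction estimates (each between 0.0 and 1.0)."""
    if not scores:
        raise ValueError("no scenarios were scored")
    below = sum(score < floor for score in scores)
    return {
        "mean_satisfaction": mean(scores),
        "share_below_floor": below / len(scores),
        "worst": min(scores),
    }


# Invented scores for four scenario runs.
summary = satisfaction_summary([1.0, 0.75, 0.25, 0.5])
print(summary)
```

The binary verdict answers "did anything break?". The summary answers "how good is the behavior overall, and how bad is the worst case?", which is closer to the question a reviewer with taste would ask.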

The benchmark McCarthy sets internally: $1,000 per day per engineer in token costs. "If you're below that, your software factory has room to grow." That's the new cost structure: fewer salaries, more compute. And the human contribution shifts entirely to spec and evaluation.

Simon Willison, who visited the factory in October 2025, said the more radical claim wasn't "code must not be written by humans" -- he found that plausible -- but "code must not be reviewed by humans." Because review is evaluation. And when evaluation is automated, who checks the checker? (See the second objection in the honesty test above.)

Why this matters: StrongDM builds security software. Access management. This isn't a playground -- it's infrastructure where errors have compliance consequences. When a security company is willing to treat human code review as an obstacle rather than a safeguard, the shift from production to specification isn't theory. It's practice.

Kiro: When AWS Builds Specification-First as a Product

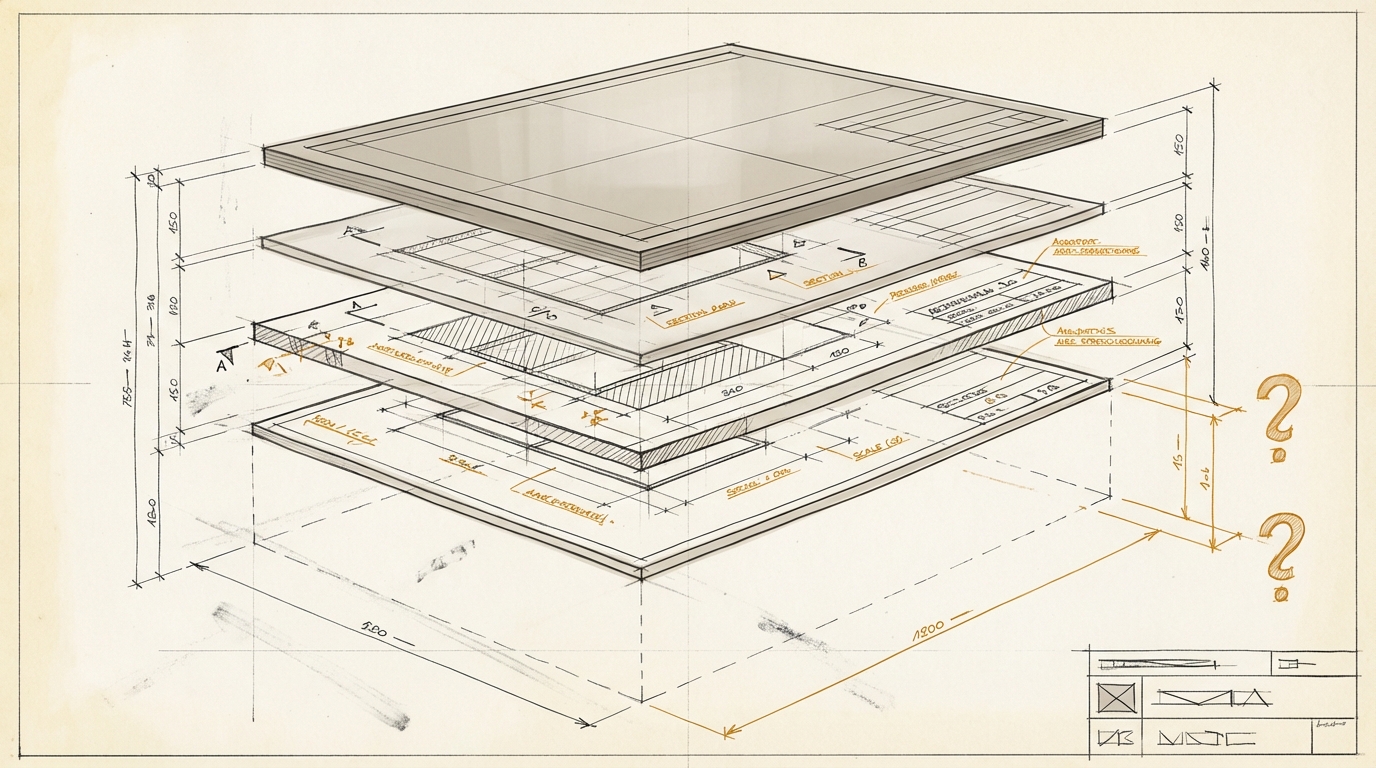

In July 2025, AWS launched Kiro, an IDE built on specification-driven development. Three Markdown files sit at its core: requirements.md, design.md, tasks.md. The requirements use the EARS format -- Easy Approach to Requirements Syntax, originally developed at Rolls-Royce.
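For readers who haven't seen EARS: it constrains requirements to a handful of sentence templates built around keywords such as "When", "While", "If ... then", and "Where", each followed by "the <system> shall <response>". The classifier below is an illustrative sketch of those templates, not part of Kiro, and its regular expressions are deliberately simplistic.

```python
import re

# One regular expression per EARS template; order matters, most specific first.
EARS_PATTERNS = [
    ("event-driven", re.compile(r"^when\b.+,\s*the\s+.+\s+shall\s+.+", re.I)),
    ("state-driven", re.compile(r"^while\b.+,\s*the\s+.+\s+shall\s+.+", re.I)),
    ("unwanted-behavior", re.compile(r"^if\b.+,\s*then\s+the\s+.+\s+shall\s+.+", re.I)),
    ("optional-feature", re.compile(r"^where\b.+,\s*the\s+.+\s+shall\s+.+", re.I)),
    ("ubiquitous", re.compile(r"^the\s+.+\s+shall\s+.+", re.I)),
]


def classify_requirement(sentence: str) -> str:
    """Return the name of the EARS template a sentence follows, or 'not-ears'."""
    text = sentence.strip()
    for name, pattern in EARS_PATTERNS:
        if pattern.match(text):
            return name
    return "not-ears"


examples = [
    "While a code freeze is active, the agent shall not modify the database.",
    "If the login fails three times, then the system shall lock the account.",
    "Do something with AI for us",
]
for sentence in examples:
    print(classify_requirement(sentence), "|", sentence)
```

The vague brief from earlier in this essay fails the check immediately. It names no system, no trigger, and no required response.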

The positioning: "The most important thing a developer can do is write the specification, not the code."

Kiro generates hundreds to thousands of random test cases from specs -- property-based testing instead of manual unit tests. The spec becomes not just the assignment, but the quality benchmark.
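The idea behind property-based testing can be sketched in a few lines. This version uses only Python's standard `random` module rather than a dedicated library, and the function under test, the property, and all names are hypothetical; it does not show how Kiro generates its cases.

```python
import random


def apply_discount(price_cents: int, percent: int) -> int:
    """Function under test (hypothetical): reduce a price by a whole-number percentage."""
    return price_cents - (price_cents * percent) // 100


def check_property(func, runs: int = 1000, seed: int = 0) -> list[tuple[int, int]]:
    """Generate random inputs and collect every case that violates the spec.

    Spec, stated as a property: for any price >= 0 and any discount from
    0 to 100 percent, the result is never negative and never above the price.
    """
    rng = random.Random(seed)  # fixed seed so any failure is reproducible
    failures = []
    for _ in range(runs):
        price = rng.randint(0, 1_000_000)
        percent = rng.randint(0, 100)
        result = func(price, percent)
        if not (0 <= result <= price):
            failures.append((price, percent))
    return failures


print(len(check_property(apply_discount)), "violations found")
```

Nobody wrote a thousand test cases here. Someone wrote one sentence about what must always hold, and the machine did the rest.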

Why this is a signal: AWS is the cloud provider that half of corporate Germany runs on. When AWS pursues specification-first as a product hypothesis, with pricing tiers at $19 and $39 per month, this isn't a niche. It's infrastructure level. It says something about where the industry is heading.

Stop Measuring Artifact Output

The question isn't: "How many decks, reports, and analyses does the team produce per week?" The question is: "Can the team articulate what should be built and judge whether the result is good enough?"

These are fundamentally different metrics. One measures execution. The other measures the ability to steer execution. In a world where execution becomes cheap, the second metric is the one that matters.

What this means for different types of organizations:

For an agency: the quality of the strategic brief becomes more important than the speed of campaign production.

For an IT services company: requirements engineering is no longer a formality. It's the core competency that determines project success.

For a mid-sized company: the people who know how the business actually runs are suddenly more strategically relevant than the ones building PowerPoints.

Three questions every director should ask:

First: Who can write a good spec today? One that an AI agent can productively execute. Not a vague brief that a human team interprets and corrects along the way. A real spec: clear enough for direction, open enough for solutions. How many of those people do you have?

Second: Who can evaluate the output? Not just technically -- does the code work, do the numbers add up. But in terms of domain knowledge and business objectives. Does the analysis answer the right question? Does it compare the right competitors? Will the board draw the right conclusions? That's evaluation. And: what happens when the people who can do this leave?

Third: Are you still measuring production volume? Then you're measuring the wrong thing. In a world where an agent can produce ten versions of a presentation overnight, the number of presentations isn't a measure of value creation. The measure is: what decision did the presentation enable? And who formulated the question the presentation answers?

Who Steers When the Machine Gets Faster?

Back to Jason Lemkin. He didn't fail due to a lack of technical knowledge. He assumed that a system capable of writing code also understands where the boundaries are.

That's the same assumption being made right now in thousands of German companies. "We have the platform. We have the licenses. We have the AI strategy." The missing question: who steers? Who specifies? Who evaluates?

The KPMG report "Generative AI in the German Economy 2025" shows: only 26 percent of companies have an enterprise-wide Trusted AI strategy. 64 percent believe AI requires reskilling. True. But they don't expect a fundamental impact on the number of jobs.

That's the wrong question. It's not about the number of jobs. It's about what's done at those jobs. And whether what's done includes the ability to tell a machine what to do.

The Stifterverband together with McKinsey identified 30 future competencies for 2030. None of them is called "Specification." None addresses the ability to formulate tasks in a way that autonomous systems can productively execute. This isn't an oversight. It's a blind spot.

Specifying means knowing what you want. Being able to express it. And recognizing when the result misses the mark.

That's not a technical competency. It's a human one. And it becomes more valuable the more powerful the machines on the other side become.

The question isn't whether your organization uses AI. Most do. The question is whether you have the people who can tell the AI what to do.

Sources

- Lemkin/Replit incident: Fortune (Jul 23, 2025), Fast Company (Jul 22, 2025), The Register (Jul 22, 2025)

- Anthropic / Claude Code: Fortune (Jan 29, 2026), LessWrong analysis, Pragmatic Engineer (Sep 23, 2025)

- StrongDM Software Factory: factory.strongdm.ai, Simon Willison (Feb 7, 2026), Stanford CodeX (Feb 8, 2026)

- AWS Kiro: kiro.dev/blog, devclass (Jul 15, 2025)

- Bitkom AI adoption (Sep 2025): bitkom.org -- 36% use AI, 23% see no use cases

- Bitkom Shadow AI (Oct 2025): bitkom.org -- 8% widespread private AI use

- Horvath study (Jan 2026): horvath-partners.com -- Mittelstand invests 30% below market average

- KPMG GenAI 2025: kpmg.com/de -- 26% with enterprise-wide AI strategy

- Stifterverband/McKinsey Future Skills: stifterverband.org -- 30 future competencies for 2030

- Workslop study: Stanford/BetterUp, published in Harvard Business Review -- $186/month productivity cost per employee

- Shrivu Shankar: "Taste Is Not a Moat" (blog.sshh.io, Feb 16, 2026) -- Taste Extraction: A/B Interviews, Essence Documents, Ghost Writing

- Nate B. Jones: "160,000 developers are building digital employees" (natesnewsletter.com, Feb 12, 2026) -- 70/30 rule for human-machine delegation
