Who Builds Your Judgment?

Most debates about AI inside companies still circle around the same questions: Which tools are good enough? Where can headcount be reduced? Which teams will move faster? Which processes can be automated? Those are real questions. But they miss the core management point.

Practical test for this essay

Three templates for a conversation with an AI: clarify the goal, check contradictions, test whether the work can be delegated. You do not get a finished strategy or a tool recommendation.

AI does not only change the cost of execution. It also changes the conditions under which judgment gets built inside organizations.

That is what this essay is about. Not another AI prophecy, but a diagnosis. It is meant to make three things visible:

- which tasks were in fact learning ramps

- why automating them is more than an efficiency gain

- which new leadership task follows from that

Anyone who sees this early does not just build faster teams. They build more resilient ones.

The defining management question in the age of agents is therefore no longer just: What can we automate?

It is: How do we still organize the building of judgment once the old ramp disappears, the ramp on which people learned to tell good results from plausible but wrong ones?


The wrong center of the debate

Many AI debates still revolve around the wrong center. They treat productivity as the core issue and learning as a later side note.

That is understandable. Productivity is visible. A team delivers more. An analyst needs less time. An agent pulls information from several systems, writes a first draft, prepares a presentation. That looks like progress because it looks like output.

What is less visible is this: Which parts of the previous work were not merely work, but training infrastructure?

Preparation. Support work. First synthesis. Comparing variants. Commented corrections. Sorting material. Seeing edge cases. Failing a second or third time on the same thing. In many organizations that all looked like drudge work. But that was often exactly where people learned what quality looks like.

That is why it is too narrow to read AI adoption only as automation. If agents absorb the surrounding work first, more than hours disappear from the process. Part of the quiet mechanism through which inexperienced people became reliable ones may disappear as well.

And that is exactly why this is not merely a talent topic. It is a management topic.

What AI actually eats first

Most systems do not replace the core role first. They go after the work around the core role.

Claude Cowork is a good current example. Anthropic now explicitly positions Cowork as a general-purpose tool for knowledge work, not just for engineering. The official descriptions focus on research, gathering material, competitive analysis, reports, spreadsheets, browser work, connectors, and structured deliverables.

The interesting part is not only that this is now possible. The interesting part is which kind of work becomes cheap first.

Not the final strategic decision.

Not the last conversation with the client.

Not the politically delicate tradeoff.

But the work before that:

- gathering information

- structuring material

- making first comparisons

- forming hypotheses

- building a first draft

- exploring the surface of possible solutions faster and more widely

That is the underestimated shift.

In many knowledge roles, that layer was not merely overhead. It was the zone in which people learned what matters, what can be left out, which exceptions count, which formulations hold, and which ones only sound professional.

AI does not eat mastery first. It often eats the ramp toward mastery.

That is not only true for software. It is true for research, strategy, analysis, account work, concept development, law, finance, operations, and parts of classic management work.

As long as you only look at time saved, this appears to be a clear gain. Often it is. The question is what gets thinned out on the other side.

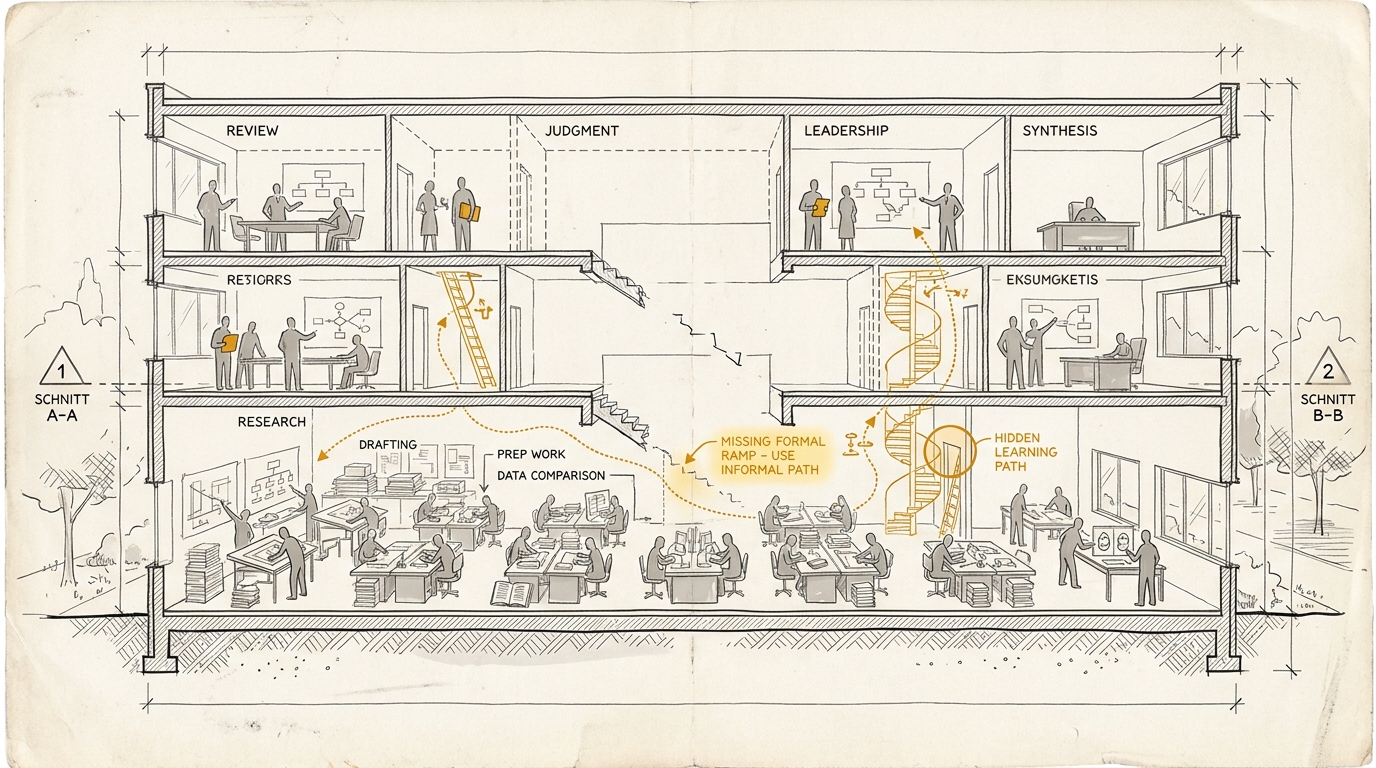

The old ramp was never labeled as a ramp

The problem is not new. Only its visibility is new.

Organizations have always depended on forms of learning that were never properly described as learning. This was the hidden infrastructure of knowledge work.

Someone first got to research the background before later being allowed to make the recommendation.

Someone first wrote templates, notes, and drafts before later owning the actual document.

Someone reviewed cases, commented on variants, prepared a meeting, followed up, corrected material, and slowly learned which signals count and which ones do not.

That was not pedagogical romanticism. It was simply how competence emerged in many organizations.

Learning theory has had a name for this for a long time. Lave and Wenger call it legitimate peripheral participation: learning happens not only through knowledge transfer, but through gradual participation in real practice. People start at the edge, take on bounded parts, watch how the work is actually done, and grow into more complex responsibility.

That sounds academic. Inside organizations it is very concrete. Many people did not become senior because someone put them into a seminar room. They became senior because, over years, they stayed close enough to the material to see differences that no checklist can fully capture.

That is why the question is so delicate. If those peripheral zones of practice disappear faster than the organization builds new forms of learning, the way it reproduces judgment becomes unstable.

This is measurable by now

You do not have to argue this in a fog.

The World Economic Forum’s Future of Jobs Report identifies skill gaps as the biggest barrier to transformation. Sixty-three percent of surveyed employers name them as the central obstacle. Seventy-seven percent plan upskilling, while forty-one percent expect role reductions where AI automates tasks. That is the macro level: companies already know the capability question is becoming central.

The more interesting part appears one level lower.

The Thomson Reuters report on GenAI in professional services is a telling example precisely because it does not come from software. Ninety-five percent of respondents believe GenAI will become central to their workflow within the next five years. At the same time, fewer than a third report that their organization offers GenAI training at all. The report states the resulting gap unusually clearly: some new hires are effectively “set up for failure” because organizations want GenAI to become central without hiring or training for the required capabilities in any systematic way.

That is not the language of a nostalgic defender of junior work. It is management failure in numerical form.

The shift becomes even clearer in law. Stanford Law is visibly redesigning its curriculum and clinics because many of the tasks through which junior associates were previously introduced to practice are now being redistributed by AI. And at the University of Chicago, the faculty involved say the uncomfortable part out loud: the tools will improve, but the real professional value will move away from standard output and toward the judgment work above it.

The cases come from different domains. The diagnosis is the same: organizations are redesigning their work logic faster than their learning logic. That is exactly why the issue does not belong in a side chapter on talent. It belongs in the center of the management debate.

All of this leads to the same question:

If preparatory work, first drafts, and routine analysis become cheaper, where will the thing we later call experience still come from?

The fair counterposition

At this point the essay could be misread as a nostalgic defense of old junior work. That would be too easy.

Because there is also a fair counterposition. An important one.

The well-known QJE study in customer support shows that generative AI can help less experienced workers become substantially more productive. In that setting, average productivity, measured as issues resolved per hour, rose by fifteen percent, with the largest gains going to less experienced and less skilled workers. The authors argue, plausibly, that AI spreads part of the know-how of the strongest workers more broadly.

That is not a minor objection. It is a serious counterweight to any simplistic cultural pessimism.

So yes, AI can be a learning aid.

AI can partially compress experience.

AI can help newcomers reach usable results faster.

But that makes the management question sharper, not smaller.

Because if AI distributes parts of experiential knowledge, leadership has to distinguish more carefully:

- Which capabilities can be meaningfully supported by AI?

- Which capabilities still have to arise through direct practice, feedback, and edge cases?

This is exactly where many debates get sloppy. They move too quickly from “AI helps juniors” to “the old ramp no longer matters.” That conclusion does not follow.

What follows is something harder:

If natural learning ramps get thinner, management has to become more deliberate about which forms of capability should still grow organically and which ones should be supported through training, review, and tooling.

And that is where the new leadership task begins

In the old world, management could quietly delegate part of the building of judgment to the organization itself.

Not consciously. Not perfectly. But effectively enough.

Processes were slower, handoffs were numerous, proximity to the material was high. People learned through repetition, observation, correction, and the sometimes frustratingly long routes through real work.

This incidental way of reproducing judgment was expensive and often slow. But it had a side effect: it produced reliable people.

The more AI takes over the surrounding work, the less leadership can rely on that side effect.

That shifts the task.

Leadership is no longer only the function that sets direction, allocates resources, and carries conflict. It increasingly becomes the function that builds the conditions under which judgment can still emerge at all.

That sounds larger than it is. In practice it means something very concrete:

- Leadership has to explain more clearly what good work looks like.

- Leadership has to build learning loops more intentionally.

- Leadership has to distinguish between tasks that were pure routine and tasks that had a hidden training function.

- Leadership has to read pipeline health as a quality question, not merely a people question.

Put differently:

If AI makes execution cheaper, leadership has to make judgment more visible, more explicit, and more teachable.

That is the actual management shift.

Why taste suddenly stops being a luxury

This is where the essay touches a term that can sound soft but is operationally hard: taste.

In Artifact Production Just Got Cheap, taste first appeared as a scarce leadership resource. In Who Is Actually Specifying Here?, it turned into a more robust model together with Spec and Evaluation. This essay adds another shift: once the old learning ramp gets thinner, it is no longer enough to possess taste. It has to be explained, shown, and passed on.

Inside organizations, taste is often what remains when quality cannot be named precisely enough. “This still does not feel right.” “This is not clean enough.” “The analysis does not go deep enough.” “The story does not hold.” Those sentences are often right, but they are a weak leadership instrument once the old ramp gets thinner.

As long as people spend years close enough to good work, a portion of those standards can be absorbed implicitly. Once that path weakens, implicit taste is no longer enough. That is exactly where The Vocabulary Gap connects: as long as an organization lacks language for good work, its judgment also remains hard to transmit.

This does not mean judgment can be turned fully into a checklist. That would be the next illusion.

But it can be made more explicit:

- through strong before-after comparisons

- through commented redlines

- through explanation instead of mere rejection

- through documented decisions

- through clear quality questions

- through making typical failure patterns visible

Ericsson put deliberate practice at the center of his account of expert performance: expertise does not emerge only through repetition, but through structured practice under feedback conditions. That is the point for leadership. When natural learning environments get thinner, organizations need more intentional conditions under which people can train sound judgment.

Then taste stops being a soft skill. It becomes leadership infrastructure.

What this means for executives in practice

At this point the essay is only useful if it can be translated into questions leaders can actually work with. Five are enough for a start.

1. Map your hidden learning ramps

Look at the tasks that are under AI pressure first:

- preliminary research

- first drafts

- material preparation

- comparing variants

- routine analysis

- preparation of decisions

Do not only ask: How much time do we save?

Also ask: Was this pure routine for us, or was it also a learning zone?

If the honest answer is that part of your future quality used to grow there, then full automation is no longer a pure efficiency question.

2. Separate output gains from pipeline health

A team can become much more productive in the short term and at the same time thin out its future judgment pipeline.

That is why AI success cannot be read only through throughput, time saved, or unit cost. You need at least a second lens:

- Who is still learning to judge results for themselves?

- Where do less experienced colleagues still encounter real edge cases?

- Where is reasoning being trained rather than mere tool operation?

If you cannot answer those questions, you already have a leadership problem, not a reporting problem.

3. Make good work more discussable

Many organizations have quality standards but no language for them. That is enough in a phase where people learn implicitly through long observation. It is no longer enough once the ramp gets thinner. This is the management version of the idea behind The Vocabulary Gap: if you lack vocabulary for good work, you also lose the ability to train it well.

That is why more explicit forms are needed:

- commented examples

- review questions

- anti-examples

- justified approvals and rejections

- small decision logs

Not to mechanize taste. To make it teachable.

4. Give seniors a developmental task, not just an approval task

The more AI takes over the surrounding work, the greater the risk that seniority gets reduced to mere sign-off. That would be too little.

The new task of experienced people is not only to be the final gate. They have to explain, calibrate, comment, and build learning environments more actively than before. Otherwise judgment stays with them without spreading.

That is more demanding than simple approval. But it will probably become one of the most valuable parts of their future role.

5. Do not introduce AI only as an efficiency program

If AI rollout is framed only through the budget lever, it almost automatically produces the wrong questions:

- Where can we cut?

- Which tasks disappear?

- How much faster does this get?

The more important questions are:

- Which capabilities will become scarcer for us?

- Which learning zones do we want to preserve?

- Where do we need to build new forms of training?

- Which form of judgment is not optional in our business?

Any organization that skips those questions may modernize its tools, but not its operating logic.

Honesty check

There are at least three reasons to stay cautious.

First: Not all preparatory work was a valuable learning ramp. A lot of it really was waste, badly designed handoffs, or administrative clutter. That does not need to be preserved out of nostalgia.

Second: Not every organization needs the same depth of judgment in every role. Some tasks can be standardized more heavily, others can be supported more strongly by AI, without losing real capability.

Third: We are early. Many of today’s learning paths will be replaced by new ones. It is quite possible that in two years new forms of training, simulation, review, and human-AI pairing will emerge that are more effective than the old ramp.

But none of those objections removes the core problem.

They only sharpen the right formulation:

The danger is not that AI destroys every learning ramp. The danger is that organizations redesign their work logic faster than their learning logic.

And that is a leadership question.

The real decision

Over the next few years, most companies will not fail because they picked the wrong model.

They will fail because they introduced AI as a productivity lever without deciding at the same time how quality, judgment, and reliable seniority are still supposed to emerge inside their organization.

That is not an abstract problem for HR, Learning, or Talent Acquisition. It sits in the middle of operations.

It sits in the question of which work you automate.

It sits in the question of what your seniors explain.

It sits in the question of whether good judgment becomes visible or stays locked in people’s heads.

And it sits in the question of whether you use AI only to accelerate work or also to look more honestly at your own learning architecture.

That is where the next generation of organizations will split.

One kind will become faster.

The other will become faster and more resilient.

The difference between the two is not the model.

It is whether leadership has understood that its task is no longer only to organize work.

It is to build the conditions under which faster work can still produce reliable judgment.
