The Vocabulary Gap

You can't specify what you can't name. And the people who could name it have the least incentive to do so.

Paul Bakaus wrote a vocabulary book.

Not a children's book. A tool for developers building interfaces with AI. Bakaus, formerly of Google, built Impeccable with a "Vocabulary Layer": seven reference files with terms, definitions, and anti-patterns. No framework, no component library, no code. Just words.

Result: 1.59x better quality on the Tessl benchmark. Zero model change. Zero prompt engineering. Just vocabulary.

The mechanism is obvious once you see it. "Nice colors" produces generic output. "OKLCH tinted neutrals with warm base hue" produces a coherent palette with perceptual uniformity. "Good text" produces 16px Inter. "Fluid type scale with optical sizing and proper vertical rhythm" produces a responsive hierarchy that breathes. "Add some padding" produces arbitrary values. "8px baseline grid with optical alignment" produces a system.
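To make the mechanism concrete, here is a minimal Python sketch of what a vocabulary entry could look like. It assumes a structure of term, definition, and anti-patterns, taken from the description of Impeccable above. It is not Impeccable's actual file format, and `VocabEntry`, `LAYER`, and `build_spec` are hypothetical names. The sketch shows only one thing: the same vague request gets expanded into named terms plus explicit things to avoid.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class VocabEntry:
    """One entry in a hypothetical vocabulary layer."""
    term: str
    definition: str
    anti_patterns: list[str] = field(default_factory=list)


# Hypothetical entries paraphrasing the vague-vs-precise pairs in the text.
LAYER = {
    "color": VocabEntry(
        term="OKLCH tinted neutrals with warm base hue",
        definition="Neutrals carry a slight warm tint; lightness steps are perceptually uniform.",
        anti_patterns=["pure gray neutrals", "'nice colors'"],
    ),
    "type": VocabEntry(
        term="fluid type scale with optical sizing and vertical rhythm",
        definition="Sizes scale with the viewport along a ratio; line heights share one rhythm.",
        anti_patterns=["16px Inter everywhere"],
    ),
    "spacing": VocabEntry(
        term="8px baseline grid with optical alignment",
        definition="Spacing values are multiples of 8px, adjusted where shapes look misaligned.",
        anti_patterns=["arbitrary padding values"],
    ),
}


def build_spec(request: str, topics: list[str]) -> str:
    """Expand a vague request with the named terms and their anti-patterns."""
    lines = [request]
    for topic in topics:
        entry = LAYER[topic]
        lines.append(f"- {topic}: use {entry.term}. {entry.definition}")
        for bad in entry.anti_patterns:
            lines.append(f"  avoid: {bad}")
    return "\n".join(lines)


spec = build_spec("Design a settings page.", ["color", "spacing"])
print(spec)
```

The request line stays the same. What changes is that the agent now receives the words, and the words for what not to do.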

The AI could do all of this already. Nobody asked for it, because most users didn't have the words.

The Universal Pattern

Sounds like a design problem. It isn't.

Strategy: A director says "we need AI transformation." What does he mean? Bolt-on tooling? Structural operating model change? Workforce restructuring? Context engineering in the sense of article five? Without the words to distinguish, he gets the generic package. And the generic package is Floor.

Marketing: Bain just showed that brands need to optimize for the agent economy. But most CMOs can't name the difference between GEO, SEO, and "agent readiness." If you can't name it, you can't commission it.

Product development: Nate B. Jones broke "prompting" into four disciplines: Prompt Craft, Context Engineering, Intent Engineering, Specification Engineering. Most companies optimize at Level 1, because they don't know the words for Levels 2 through 4.

The core observation: The capability gap isn't in the AI. It's in the prompt. And the prompt isn't bad because the human is stupid. The prompt is bad because the human lacks the domain vocabulary to describe what they actually want.

Remember the Floor/Ceiling distinction? "Nice colors" is Floor. "Tinted neutrals" is the beginning of Ceiling. The distance between the two IS the vocabulary gap. And the vocabulary gap determines where your commodity boundary runs.

Back to Waterfall

Anyone who's been in software development since the nineties recognizes the pattern.

Waterfall projects worked like this: plan everything upfront, specify, sign off, then build. The problem was never the process. The problem was that humans are terrible at knowing in advance what they want. And even worse at articulating it precisely. The waterfall projects that derailed during implementation didn't derail because of bad developers. They derailed because the specification was wrong.

Agile solved this. Not through better planning, but through shorter cycles. Iterate instead of specify. Build, show, correct. Scrum, Kanban, sprints: institutionalized processes to compensate for the human inability to specify precisely upfront.

Now we have AI agents that run through an entire sprint's worth of code in an hour. And suddenly we're back at waterfall. Because the agent needs a clear specification at the start. It can't ask mid-way "did you mean it like that?" It works with what's there. And what's there for most people is: vague.

That's the irony. Twenty years of Agile taught us we can't specify well. AI agents require exactly that. We have a tool that executes faster than ever before, and it fails at the same point as waterfall: at our inability to say precisely what we want.

There are two responses. One: better specs. Clarify everything upfront, let the agent run. Waterfall with faster execution. The other: shorter units. Planning done collaboratively, with the AI as a sparring partner that shows variants, opens up options, asks the right questions. Execution done autonomously. Interactive architecture, autonomous execution.

Both responses converge at the same point: you need the words. Either to write the spec. Or to find the right direction in dialogue with the agent. The vocabulary gap remains the bottleneck.

Rejection as Vocabulary Generator

Nate B. Jones wrote a sentence that opens the problem from the other side: "Your prompts are disposable. Your rejections compound."

The thesis: every rejection is a knowledge-creation moment.

A partner reviews a pitch deck and says: "Where's our proprietary insight on customer switching costs? Any firm with the same model could have delivered this framing." In that moment, she defined a constraint that didn't exist before. And she did it in words precise enough to encode.

Nate breaks this into three dimensions:

- Recognition: seeing that something is wrong. Requires domain knowledge.

- Articulation: explaining WHY it's wrong. Requires vocabulary.

- Encoding: capturing the constraint permanently. Requires infrastructure.

The vocabulary gap sits between Recognition and Articulation. You FEEL that the output is off. But without the right words, your rejection stays vague. "That's not right" instead of "you're treating all covenants the same, but DSCR monitoring has completely different triggers than interest coverage checks." Vague rejection evaporates. Precise rejection compounds.
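The three dimensions can be sketched as a tiny data model. This is a hypothetical Python illustration, not Nate's framework in code. `Rejection`, `Constraint`, `encode`, and `next_spec` are invented names, and the credit-memo task is made up. It shows one property of the argument: a rejection without articulation leaves nothing to store, while an articulated one becomes a rule that rides along with every later task.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Rejection:
    recognition: str             # what felt off (needs domain knowledge)
    articulation: Optional[str]  # why it is off, in domain terms; None if vague


@dataclass
class Constraint:
    rule: str    # the articulated reason, kept as a reusable rule
    origin: str  # the recognition that triggered it


def encode(rejection: Rejection, log: list[Constraint]) -> Optional[Constraint]:
    """Store a rejection as a constraint; vague rejections are dropped."""
    if not rejection.articulation:
        return None  # "That's not right" leaves nothing to encode
    constraint = Constraint(rule=rejection.articulation, origin=rejection.recognition)
    log.append(constraint)
    return constraint


def next_spec(task: str, log: list[Constraint]) -> str:
    """Attach every encoded constraint to the next task."""
    if not log:
        return task
    rules = "\n".join(f"- {c.rule}" for c in log)
    return f"{task}\nConstraints:\n{rules}"


log: list[Constraint] = []
encode(Rejection("Something is off in the covenant section", None), log)
encode(Rejection(
    "Something is off in the covenant section",
    "Do not treat all covenants the same: DSCR monitoring has different "
    "triggers than interest coverage checks.",
), log)
print(next_spec("Draft the credit memo for the next borrower.", log))
```

The vague rejection evaporates. The precise one is still present on the next task, and on the one after that.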

This is the same principle as DDB's Bad Ideas Board from the previous article. The board defines the vocabulary of rejection: "generic," "obvious," "anyone would come up with that." Then it forces the team to find the words for what's better. The Bad Ideas Board is institutionalized rejection – a vocabulary generator.

The Cascade and the Vocabulary Gap

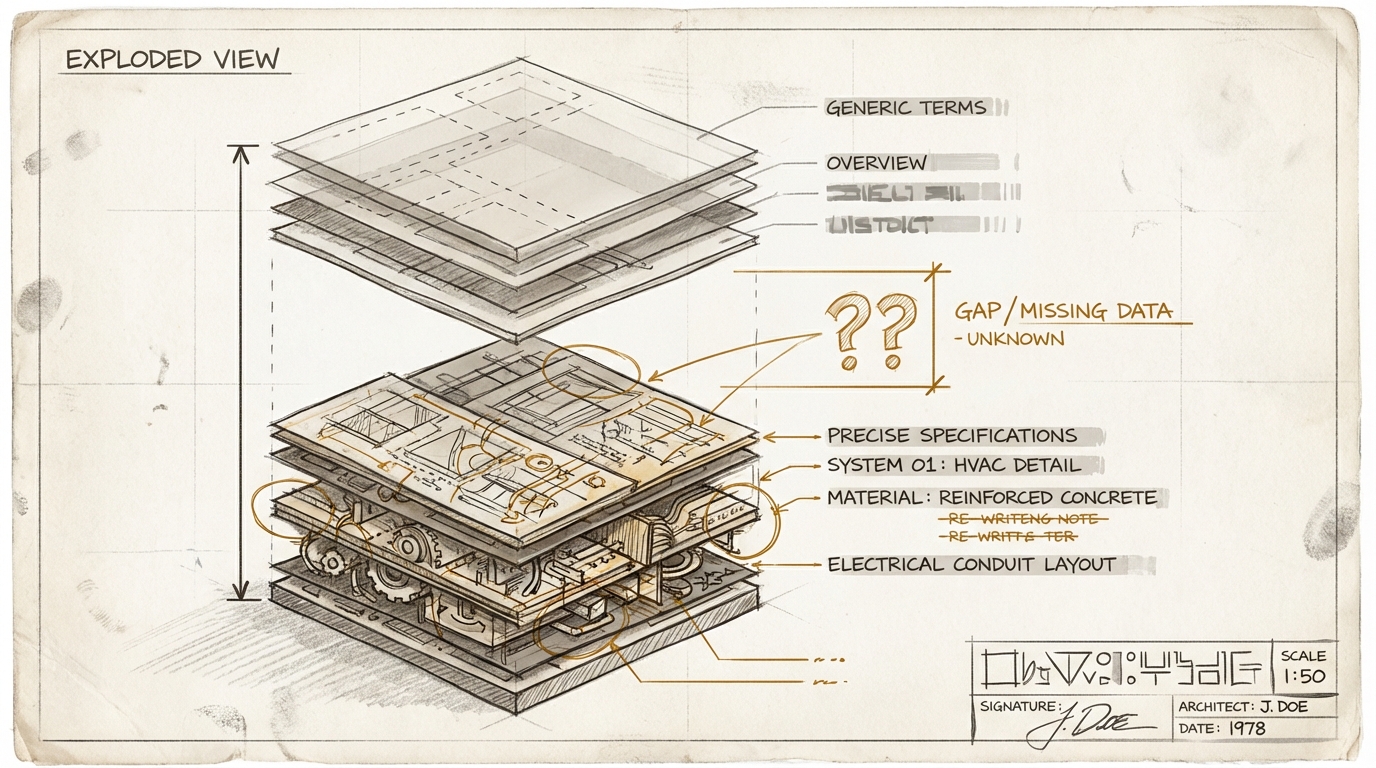

In the previous article, I described five capabilities that separate Ceiling from Floor: Terrain, Intent, Taste, Spec, Evaluation. Together they form a cascade that works as a flywheel.

The vocabulary gap affects every single level.

Without the words for your Terrain, you describe your market in platitudes. Without the words for your Intent, "we want AI transformation" remains an empty declaration. Without Taste vocabulary, you can't articulate why a result is off. Without Spec vocabulary, the agent produces generic output. And without Evaluation vocabulary, you can't check whether the result matches what you defined.

The rejection logic sharpens this. Rejection creates constraints. Constraints are vocabulary in encoded form. Encoded vocabulary lowers the cost of future specification. The richer your rejection vocabulary, the more precise your specs become, and the further your commodity boundary shifts. And the better your specs, the better the results you get – which in turn sharpens your judgment, because you recognize finer differences. The flywheel turns. But only if the words are there.

What Bakaus solved for design with seven reference files is fundamentally what Andrej Karpathy did with program.md: a file with constraints and taste, against which an agent runs 700 experiments. Not "first A, then B, then C," but a web of rules that apply simultaneously and influence each other. Taste as a constraint system.
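A constraint system differs from a sequence of steps because every rule is evaluated against the same candidate at once. Below is a minimal Python sketch, assuming design rules borrowed from the Bakaus example earlier. It does not reproduce the format of Karpathy's program.md, and `RULES` and `violations` are hypothetical names. It illustrates only the simultaneity, not how rules influence each other.

```python
from typing import Callable, List, Tuple

# A rule is a name plus a predicate over a candidate design (hypothetical shape).
Rule = Tuple[str, Callable[[dict], bool]]

RULES: List[Rule] = [
    ("spacing on an 8px grid", lambda d: all(v % 8 == 0 for v in d["spacing"])),
    ("neutrals are tinted, not pure gray", lambda d: all(c > 0 for c in d["neutral_chroma"])),
    ("body text is not a fixed 16px", lambda d: d["body_size"] != "16px"),
]


def violations(design: dict, rules: List[Rule] = RULES) -> List[str]:
    """Evaluate every rule against the same candidate and return all failures."""
    return [name for name, check in rules if not check(design)]


candidate = {
    "spacing": [8, 16, 12],          # 12 is off-grid
    "neutral_chroma": [0.012, 0.0],  # 0.0 is an untinted gray
    "body_size": "clamp(1rem, 0.95rem + 0.25vw, 1.125rem)",
}
print(violations(candidate))
```

A candidate passes only if no rule objects. The agent therefore cannot satisfy the spacing rule and quietly ignore the color rule.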

The Fear Thesis

Don Norman formulated a principle in 1988 in The Design of Everyday Things: good design externalizes knowledge. It places information in the world instead of relying on humans to keep everything in their heads. The door handle test: a well-designed door shows you whether to push or pull. A bad one forces you to guess.

Applied to organizations: good organizational design externalizes institutional knowledge. Documented, searchable, machine-readable. Bad design leaves it in people's heads. In Muller's head, in the partner's head, in the unwritten rules.

I described this in article five as the Muller problem. Here's where it gets worse.

The incentive structure rewards the opposite of externalization.

Muller doesn't get promoted because he documents well. He's needed because he's the only one who knows the special terms. The partner whose rejection vocabulary represents twenty years of compressed industry experience – if she encodes that in a constraint system, what does she do then? The senior developer who's the only one who understands the legacy code – his job security IS the non-documentation.

The more valuable the knowledge, the lower the incentive to share it.

The people with the richest vocabulary for precise rejection have the least incentive to encode it. Not out of malice. Because the structure rewards it. Share your knowledge, and you become replaceable. Hoard it, and you stay indispensable.

That's not a new pattern. But AI makes it expensive.

Nate B. Jones described the view from below in parallel: the junior pipeline is drying up because the entry-level tasks where juniors develop Taste are going to the machine. Fewer juniors learning through rejection. Fewer people building the vocabulary. That's the seed-corn problem.

The fear thesis is the view from above: seniors actively hoard their Taste. Two directions, same result. The vocabulary gap grows from both sides.

The Flywheel, and Why It Doesn't Run Automatically

If you work with AI agents today as a knowledge worker, you're essentially doing what a good manager does: set context, communicate intention, correct, steer. Just at a smaller scale, faster pace, and with a machine instead of a team.

And here a dynamic emerges that goes beyond individual work.

When you're forced to encode Terrain, Intent, and Taste so an agent can work with it, you simultaneously build artifacts that are useful for humans too. New team members can learn from them. Leaders can check against them. Cross-functional teams can align around them.

The invisible becomes visible. Good managers have always done this intuitively: set context, communicate intention, correct. But it was never systematized, because the effort wasn't worth the return. AI agents change that equation. Because encoded context is now directly actionable – not just as documentation, but as operational input.

Terrain feeds Intent. Intent sharpens Taste. Taste becomes the Spec. The Spec produces better output. Better output produces more precise rejection. More precise rejection expands the Taste vocabulary. And the expanded vocabulary improves the next Terrain.

Scale that to a team with their agents, to a department, to an organization, and it's not just agent quality that improves. Communication and alignment between humans improves. Because the same clarity that makes an agent work also improves human collaboration. That was the Lutke observation from article five: the discipline of framing problems with enough context that they're solvable without follow-up questions didn't make him a better AI user. It made him a better CEO.

Epic Systems proved this in practice. 45 years of encoding clinic workflows, rejection by rejection, hospital by hospital. 305 million patient records, near-zero churn. The moat isn't the software. The moat is the encoded judgment of generations of clinicians.

Where I Could Be Wrong

The flywheel can cement bad taste just as well as good. A manager's quality standard is often personal and inconsistent. When the flywheel runs, it accumulates what gets fed into it – and that isn't automatically right. It needs a governance layer: a way to challenge and evolve the Taste level over time, not just accumulate. Without that, you cement the biases of 2026 into the infrastructure of 2030.

The vocabulary gap isn't a skill gap you close with training. When I say "learn the words," it sounds like "send your people to a course." It isn't. Vocabulary in this sense is compressed experience. "Tinted neutrals" isn't a term you memorize. It's a concept you understand because you've seen and evaluated a hundred color palettes. The vocabulary gap closes through practice, not through seminars. And it closes through rejection: through repeatedly articulating why something isn't good enough.

The fear thesis isn't an accusation. It's rational behavior in an irrational incentive structure. Most AI transformation initiatives don't fail because of the technology. They fail because they ask people to externalize exactly the knowledge that constitutes their reason for existing. You buy the best tool in the world, and people feed it the minimum. If you want to change that, you need to change the incentives, not the tools.

What Follows from This

Not: "Everyone needs to learn vocabulary now." That's the naive version.

First: Vocabulary Layers as investment. What Bakaus did for design, every domain needs. Not as a glossary, but as a constraint set with anti-patterns. Prepare your domain vocabulary so an AI agent can work with it – and so new hires can learn from it. It's the same investment.

Second: Establish rejection as a core process. Not "quality gate" – that sounds like bureaucracy. Systematic articulation and encoding of rejections. What DDB did with the Bad Ideas Board for creative work can be transferred to any discipline: let the AI deliver the first draft. Then ask: what's wrong with it? And – this is the decisive step – write the answer down. Rejection shifts from byproduct to primary product.

Third: Interactive planning, autonomous execution. Don't use AI as a waterfall executor that you throw a spec over the fence to. Use it as a planning partner: have it show variants, compare options, ask the right questions until the spec is sharp enough. Then let it execute autonomously. The architecture is collaborative. The execution is autonomous.

Fourth: Ask the Norman question. Don Norman's principle, applied to your organization: is this knowledge in the world or only in someone's head? And what would need to happen for it to come into the world without the person whose head it's in feeling threatened? That's not an IT question. That's a leadership question.

The thinking tools for this article help you map your own vocabulary gap. The Vocabulary Scanner, the Rejection Archaeologist, and the Hoarding Test are three prompts you can apply to your own situation.

The vocabulary gap isn't just another skill gap you close with training. It's the visible expression of a deeper tension: between what organizations need (knowledge in the world, machine-readable, shareable, able to compound) and what individuals protect (knowledge in the head, exclusive, position-preserving).

AI doesn't create this tension. But it makes it expensive. Because the machine can't use the knowledge you don't speak. And because every competitor with the same tools reaches the same Floor.

What differentiates isn't the AI. What differentiates are the words.

Sources and Further Reading

Primary references in the text:

- Paul Bakaus / Impeccable: Vocabulary Layer for AI-assisted interface design. 1.59x quality uplift through seven reference files, zero model change.

- Nate B. Jones: "Your prompts are disposable. Your rejections compound." (March 2026) – Recognition, Articulation, Encoding as a cascade. Epic Systems as a 45-year rejection flywheel. – Nate's Newsletter

- Nate B. Jones: "Beyond the Perfect Prompt" – Prompt Craft, Context Engineering, Intent Engineering, Specification Engineering as four disciplines. – Nate's Newsletter

- Don Norman: The Design of Everyday Things (1988) – Good design externalizes knowledge.

- Andrej Karpathy: program.md – Taste as a constraint system against which an agent runs experiments.

Further reading:

- Shrivu Shankar: "How to Stop Your Human From Hallucinating" (November 2025) – Human "hallucinations" follow the same patterns as LLM hallucinations: jargon conflicts, missing naming conventions, hidden documentation. "Activation" means something different internally than in marketing. The vocabulary gap has always existed between humans – AI just makes it expensive enough to notice. – blog.sshh.io

- Ryan Singer / Basecamp: Shape Up – "The Goldilocks Phase" of specification. The sweet spot between over- and under-specification. "Rabbit Holes" (what NOT to do) are structurally identical to Bakaus' anti-patterns: vocabulary for constraints. – basecamp.com/shapeup

Earlier articles in this series:

- dekodiert #5: Machine-Readable Context – The Muller problem, context engineering, making institutional knowledge machine-readable

- dekodiert #6: Floor/Ceiling – The five Ceiling capabilities (Terrain, Intent, Taste, Spec, Evaluation), DDB Bad Ideas Board
