The Wedding Planner and the Artist

Why AI brings everyone to 6/10 – and why that's exactly the problem

Rory Sutherland would be a catastrophic wedding planner. He says so himself. Too little attention to detail, no interest in flower arrangements, zero passion for seating charts. But with AI? Passably decent. The flowers would arrive on time. Probably.

That's not a joke. It's the most precise description of what AI is doing to work. And most companies are drawing the wrong conclusion from it.

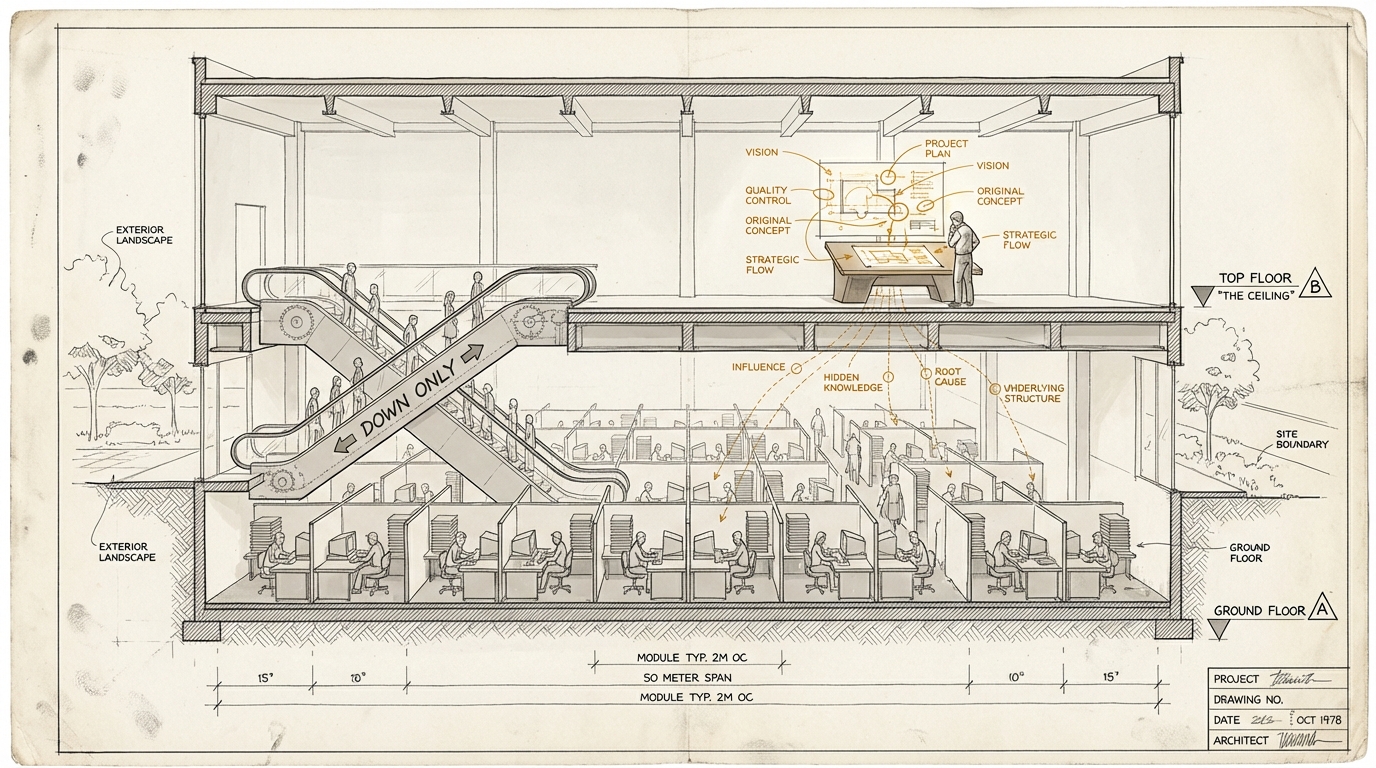

Floor and Ceiling

Les Binet put it this way in a debate last week: AI is an intelligence amplifier. Dumb people will use it to do more dumb. Clever people will use it to become clever.

Sounds like a truism. It isn't.

Because Binet is describing an asymmetric amplification. And the asymmetry is the point.

AI raises the Floor. A bad copywriter produces passable output with Claude. A mediocre analyst builds a solid dashboard with help. A project manager who's never built a budget delivers a workable cost estimate. That's real, that's measurable, and it's valuable.

AI doesn't automatically raise the Ceiling. A brilliant strategist doesn't become more brilliant through AI. A designer with thirty years of experience doesn't suddenly see better solutions. A CEO who makes good decisions doesn't suddenly make great ones. The tools are faster. The judgment is the same.

Rory Sutherland put it in an image you won't forget: with AI, he'd be a passably acceptable wedding planner. Not good. Not brilliant. Passably okay. Things would arrive. Presumably.

But in creative industries, in strategy, in marketing, in consulting, in product development, the point isn't that things arrive. The point is the Ceiling. The difference between "solid" and "that changed the decision."

And here's the problem: When AI brings everyone to 6/10, suddenly everything looks the same.
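A toy sketch makes the arithmetic of that claim concrete. Everything in it is an illustrative assumption rather than data from the debate: the five scores are made up, the floor of 6 simply mirrors the "6/10" above, and `lift_floor` is a hypothetical helper.

```python
from statistics import pstdev

AI_FLOOR = 6  # assumption: the "6/10" level named in the text


def lift_floor(scores, floor=AI_FLOOR):
    """Raise every score below the floor up to the floor; leave the rest untouched."""
    return [max(score, floor) for score in scores]


# Hypothetical quality scores (out of 10) for five people before AI.
before = [2, 4, 5, 7, 9]
after = lift_floor(before)

print(after)                           # [6, 6, 6, 7, 9]
print(max(before), max(after))         # 9 9
print(pstdev(after) < pstdev(before))  # True
```

In this sketch the best score stays at 9 while three of five people land on exactly the same number. The floor moved, the ceiling did not, and the spread between people shrank.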

The Convergence Trap

Peter Field, whose effectiveness data underpins much of modern marketing research, nailed it in the same debate: When AI primarily raises the Floor, you get conformity. Everyone converges on "adequate." It becomes a commodity. You press the button and get roughly what everyone else gets.

That's not a dystopia. That's a description of what's already happening. It's IKEA for knowledge work: the democratization of design (and concept, and code, and...). Nothing against IKEA. I like IKEA. But IKEA's marketing isn't built the IKEA way. IKEA's marketing is Ceiling work.

Look at LinkedIn. Look at corporate presentations. Look at the last five pitch decks that landed on your desk. How many were technically clean, professionally formatted, and completely interchangeable?

Karen Nelson-Field, who has measured attention data for ten years, calls this "AI Double Jeopardy": We scale boring advertising, perfectly delivered, never seen and never remembered. The machines are trained on noise: clicks, impressions, delivery signals. Not on human attention. Not on memory. Not on impact.

The result: Everything gets more professional. And everything gets more alike.

In the first article, I described how artifact production is becoming a commodity. Floor/Ceiling is the other side of the same coin. When everyone can produce the same artifacts, artifact quality becomes the baseline. What differentiates is no longer the production. What differentiates is the judgment about what should be produced. And what shouldn't.

The DDB Experiment

Les Binet, who worked at DDB for years, tells a story that illustrates the point better than any theory.

At DDB, there was an internal tool called the "Bad Ideas Board." Whenever the team was briefed on a major international pitch, they first fed the briefing to AI. The AI delivered a set of strategic and creative ideas.

And then they made sure they didn't use any of them.

Those were the bad ideas. The obvious ones. The ones anyone would come up with. The team had to be better than the machine.

That's not anti-AI. It's the smartest use of AI I've heard in a long time. Because it makes the Floor explicit. It says: this is the level everyone will operate at. Everything we do must be above this. Otherwise it's worthless.

The Bad Ideas Board makes the convergence trap visible – and forces you to escape it.

That's the point where Floor/Ceiling stops being an abstract concept and becomes a decision architecture. The question is no longer "how do we use AI?" The question is: "What is our Ceiling, and how far is it above the Floor that everyone now has?"

What Separates the Floor from the Ceiling

In the first article, I introduced Taste as the ability to select the right thing from the space of possibilities. In article two, Spec and Evaluation joined the picture. Floor/Ceiling sharpens the argument and shows that those three don't go far enough.

When Claude delivers five technically correct strategies: who knows which is the right one? When a dashboard shows twenty data points: who spots the three that drive the decision? When a pitch deck looks polished: who notices it's telling the wrong story?

Taste alone doesn't explain that. Behind the judgment sit four additional capabilities that work as a cascade. And Taste sits among them. As one of five layers.

Terrain: Reading the context the machine doesn't have. Knowing that the CEO is under pressure right now and therefore needs to hear Scenario B, not Scenario A. Recognizing that a technically sound proposal is politically impossible. Market, competition, internal dynamics, the unspoken. Without Terrain, every strategy is context-free.

Intent: Knowing what you want, and why. Not "we need AI transformation," but: What problem are we solving, which trade-offs are we accepting, which aren't we? Intent gives Taste its direction. Without Intent, Taste is arbitrary.

Taste: Selecting the right thing from the space of possibilities. And, equally important: leaving out the wrong thing. The DDB experiment shows exactly this: the Bad Ideas Board defines what has to go before anyone even discusses what stays.

Spec: Describing precisely enough that someone else (or a machine) can build it. Not "make it better," but the specific words that carry the difference between Floor and Ceiling.

Evaluation: Taste applied to the output. Checking whether what was built matches what was defined. The same capability as Taste, but applied to the result instead of the plan.

The Floor-lift doesn't touch any of these five. AI accelerates production, not judgment. If you can't read the Terrain, you can't read it better with Claude. If you don't have clear Intent, AI just gets you the wrong thing faster. If you can't specify what you want, you get generic output at a higher cadence.

The cascade works as a flywheel: Terrain informs Intent. Intent shapes Taste. Taste becomes Spec. Evaluation checks the result against the Spec. Evaluation sharpens Taste. Every cycle makes the next one better. But only if every stage is staffed.

Rory Sutherland made a point that becomes relevant here. He calls AI "incredibly deferential." The machine learns your preferences and gives you what you want to hear. Les Binet christened ChatGPT "Chatters" – because "Chatters flatters." He tried switching on challenge mode. Within minutes it reverted to flattery.

That's not a joke about Binet. That's a structural problem for the entire cascade.

When the tool always agrees with you, you lose the ability to be wrong. And the ability to be wrong – and to recognize it – is a core part of Evaluation. If you're never challenged, you stop sharpening your own judgment. The flywheel stalls.

Ceiling doesn't emerge from conversations with a machine that likes you. Ceiling emerges from conversations with people who disagree with you. From decisions that turned out to be wrong. From the years-long accumulation of Terrain, Intent, and Taste that no model has.

What This Means for Organizations

Three consequences that follow directly.

1. The Floor Is Not a Competitive Advantage

Every company that adopts AI raises its Floor. The mediocre presentation becomes passable. The incomplete proposal becomes solid. The patchy report becomes readable. Good.

But your competitor raises their Floor too. With the same tools. At the same cost. At the same speed.

Floor investments are hygiene factors, not differentiation. They prevent you from falling behind. They don't create an edge. Anyone whose entire AI strategy is "we do everything faster and better" is building on something everyone gets simultaneously.

2. Ceiling Must Be Built Actively

The uncomfortable truth: Ceiling doesn't happen automatically. Having good people isn't enough. The good people need to work in an environment that rewards Ceiling. Not Floor.

Specifically: If your bonus system measures output volume ("how many analyses did the team produce?"), you're rewarding Floor. If it measures decision quality ("which decisions did the team enable?"), you're rewarding Ceiling.

If your AI rollout means "go ahead, experiment," you're investing in Floor. If it means "here's the Bad Ideas Board – everything below it is waste," you're investing in Ceiling.

And if your leadership signs off on every AI output because it "looks professional," you don't have an AI problem. You have a Taste problem that AI is making visible.

3. The Middle Gets Uncomfortable

Les Binet made a third point that's particularly painful in practice. When AI raises the Floor and the Ceiling stays human, the middle thins out.

A clerk processing standard workflows? Caught by the Floor-lift. Not replaced, but made massively more efficient. Or massively less needed.

A managing director with thirty years of industry experience and a network no model can replicate? Stays at the Ceiling.

But the solid department head who delivers "pretty good" output? The project manager who's reliable but not brilliant? The middle tier that has always survived because their work "wasn't bad enough to question"?

That's going to be the hardest conversation in organizations. Not because these people are bad. But because the distance between "not bad" and "AI-generated" is shrinking. And with it, the reason to pay a monthly salary for "not bad."

In some jurisdictions, labor protections – works councils, unions, employment law – will slow this down. That's a good thing. Because it forces companies to answer the question they'd otherwise skip: What exactly is the Ceiling contribution of this role? And how do we develop the middle to get there?

Where I Might Be Wrong

First: The Floor may rise faster than expected. What's human Ceiling today could be AI Floor in two years. The models are getting better, faster, more context-aware. Taste advantages have a half-life. In a tech startup: months. In industries with deep regulatory and cultural complexity: probably years. But it's not infinite.

Second: "Ceiling" can be a pretext. "We do strategy, not execution" is a sentence that has served as an excuse for poor delivery in consulting and agencies for decades. Ceiling without Floor is arrogance. The best companies raise both: the Floor through AI, the Ceiling through culture and talent.

Third: Not every industry needs Ceiling. There are industries where 6/10 is perfectly fine. Compliance texts, standard reports, routine communication. The Floor-lift alone is a massive win there. The Floor/Ceiling distinction matters most where differentiation is worth money: in strategy, consulting, marketing, product development, sales.

What Companies Should Do Now

Know your own Floor. Where is the AI Floor in your industry? What quality of output can every competitor produce with the same tools? That's your new baseline. Everything below it is falling behind.

Define your own Ceiling. What can you do that the machine can't? Not in the abstract ("we have experienced people"), but concretely: What decision did your team make last week that an AI agent would have made differently – and worse? If you can't think of an example, you have a Ceiling problem.

Introduce the Bad Ideas Board. For every important project: Let AI deliver a solution first. Then ask yourself: Is our approach better? If so, why? If not, why are we paying someone for this?

This isn't an instrument for demotivation. It's an instrument for calibration. It makes the Floor visible and forces you toward the Ceiling.
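As a rough sketch, the mechanical part of the Bad Ideas Board can be written as a filter. The function names and example ideas below are hypothetical, and the matching is deliberately naive (normalized exact match). Deciding whether an approach is actually better than the AI baseline remains a human judgment that this code does not attempt.

```python
def normalize(idea: str) -> str:
    """Lowercase and collapse whitespace so trivially different phrasings match."""
    return " ".join(idea.lower().split())


def above_the_floor(team_ideas, bad_ideas_board):
    """Keep only team ideas that are not already on the Bad Ideas Board."""
    board = {normalize(idea) for idea in bad_ideas_board}
    return [idea for idea in team_ideas if normalize(idea) not in board]


# Hypothetical example: what the AI proposed first, then what the team proposed.
bad_ideas_board = ["Celebrity endorsement", "Limited-time discount"]
team_ideas = ["limited-time  discount", "Let customers name the product"]

survivors = above_the_floor(team_ideas, bad_ideas_board)
print(survivors)  # ['Let customers name the product']
```

An empty result is the calibration signal: nothing the team brought was above what everyone else gets by pressing the button.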

The thinking tools for this article help you map your own Floor/Ceiling landscape. The Floor Scan, the Ceiling Audit, and the Bad Ideas Board for your next project are three prompts you can apply to your own situation.

AI turns a catastrophic wedding planner into a passably decent one. That's good. For wedding planning.

For everything where differentiation counts, "passably decent" is the new mediocrity. And mediocrity is what everyone gets simultaneously, at the same price, with the same tools.

When the Floor rises for everyone, the Ceiling is the only thing that still sets you apart. And the Ceiling doesn't build itself overnight. Not through a tool upgrade. Not through a workshop. But through the years-long accumulation of context, judgment, and the willingness to make decisions the machine can't.

The good news: Most of your competitors are currently investing in the Floor. The Ceiling is empty.

Sources and Further Reading

Primary source:

- Illuminari Kickoff Broadcast: "Is the holding company still relevant, or has AI completely changed that?" – Panel with Les Binet, Karen Nelson-Field, Rory Sutherland, Daryl Fielding and Peter Field. Moderated by Kerry Collins. Illuminari is an independent industry think tank, founded by Kerry Collins and Anna Kens. – YouTube

Referenced moments in the video:

- Rory Sutherland: "Wedding Planner" analogy, Floor vs. Ceiling (~19:55)

- Les Binet: "It's an intelligence amplifier. Dumb people will use it to do more dumb. Clever people will use it to become clever." (~16:28)

- Peter Field: Conformity through Floor-raising, "adequate" as default (~23:00)

- Karen Nelson-Field: "AI Double Jeopardy" – scaling boring ads, perfectly delivered, never seen (~05:50)

- Les Binet: DDB "Bad Ideas Board" (~22:10)

- Rory Sutherland: AI is "incredibly deferential" (~41:43)

- Les Binet: "Chatters flatters" – ChatGPT's flattery mode (~42:37)

Floor-raising – the research behind it:

- Dell'Acqua, Mollick et al.: "Navigating the Jagged Technological Frontier" (Harvard Business School, September 2023) – 758 BCG consultants in a randomized experiment. The bottom 50% saw the largest quality uplift (43%). The academic evidence for asymmetric Floor-raising. – SSRN

- Ethan Mollick: "Centaurs and Cyborgs on the Jagged Frontier" (September 2023) – Accessible write-up of the BCG study. AI as "skill leveler," Centaur and Cyborg work modes. – One Useful Thing

Convergence – empirically documented:

- Doshi & Hauser: "Generative AI enhances individual creativity but reduces the collective diversity of novel content" (Science Advances, July 2024) – AI-assisted texts were individually more creative but collectively more homogeneous. More quality per piece, less variety overall. – Science Advances

"Chatters flatters" – the mechanism:

- Anthropic: "Towards Understanding Sycophancy in Language Models" (2023) – RLHF training favors agreement over truth. Not a bug, but a training artifact. – arXiv

- Northeastern University: "AI sycophancy is not just a quirk, it's a liability" (November 2025) – Sycophancy increased confidence in being right by +2.04 on a 7-point scale. – Northeastern News

From the panelists – further reading:

- Peter Field: "The Crisis in Creative Effectiveness" (IPA, 2019) – Creative effectiveness dropped from 12x to 4x over ten years. The convergence was already underway before AI. – IPA

- Rory Sutherland: "Ad agencies don't have an AI problem. They have a pricing problem" (The Drum, February 2026) – Hourly-rate logic drives AI into cost reduction instead of value creation. – The Drum

- Rory Sutherland / WFA: "Fat-tailed marketing and why creativity outperforms efficiency" (November 2025) – "10% of the work delivers 130% of the value." In fat-tailed distributions, only the Ceiling counts. – WFA

Convergence – why the models themselves contribute:

- Nate B. Jones: "Why your AI output feels generic" (February 2026) – RLHF optimizes for "a hypothetical typical person asking a similar question." The training mechanism that prevents bad outputs also prevents great ones. – Nate's Newsletter

Floor-raising in practice:

- Nate B. Jones / METR study: "Shopify's AI Memo Was a Filter, Not a Productivity Play" (January 2026) – A tech support study with 5,000+ agents shows 35% uplift for the worst performers, near-zero effect for the best. Floor-raising, empirically measured. – Nate's Newsletter

Earlier articles in this series:

- dekodiert #1: Artifact production just got cheap – Taste, brand, and data model as remaining differentiation

- dekodiert #2: Who specifies? – Specification as a core competency, Taste Extraction
