Executive Briefing: The Invisible Input

What stands between your AI business case and a noble gas in the Persian Gulf

Helium is a noble gas. It cools superconductors in MRI machines. It purges the optical chambers of EUV lithography machines, without which no modern chips can be manufactured. No helium, no chips. No chips, no servers. No servers, no AI compute.

A plant in Qatar supplied a third of global production. Since March 4, it's been offline.

The word "helium" does not appear in a single AI business case I've seen in the last six months.

Why a special briefing

dekodiert normally publishes every two weeks. This briefing comes off-cycle because the terrain has shifted. Not just any terrain. The terrain on which every AI investment decision of the last two years was built.

The assumption was: compute gets cheaper and more available. The $420 billion that hyperscalers have committed in capex for 2026 alone is based on that. Your AI roadmap is based on that, whether you know it or not. My recommendations in earlier issues are based on that.

Since February 28, 2026, that assumption no longer holds without qualification. The implications are now clearer.

This is not panic. It's a terrain analysis. What changed, what it means, and what you should be checking now.

The Chain

On February 28, the US and Israel carried out military strikes against Iran. Iran retaliated, hitting among other targets Qatar's Ras Laffan Industrial City. Ras Laffan is the world's largest LNG export hub and the site where roughly a third of global helium is extracted as a byproduct of natural gas processing. QatarEnergy declared force majeure on all affected contracts on March 4. The Strait of Hormuz is effectively closed to Western-allied commercial shipping.

On March 18 and 19, the complex was hit again. According to QatarEnergy, 14% of production capacity is permanently damaged, with a rebuild timeline of three to five years. The rest is offline until hostilities end.

This sounds like a geopolitical problem in the Persian Gulf. It's a compute problem in Frankfurt, Dublin, and Ashburn, Virginia.

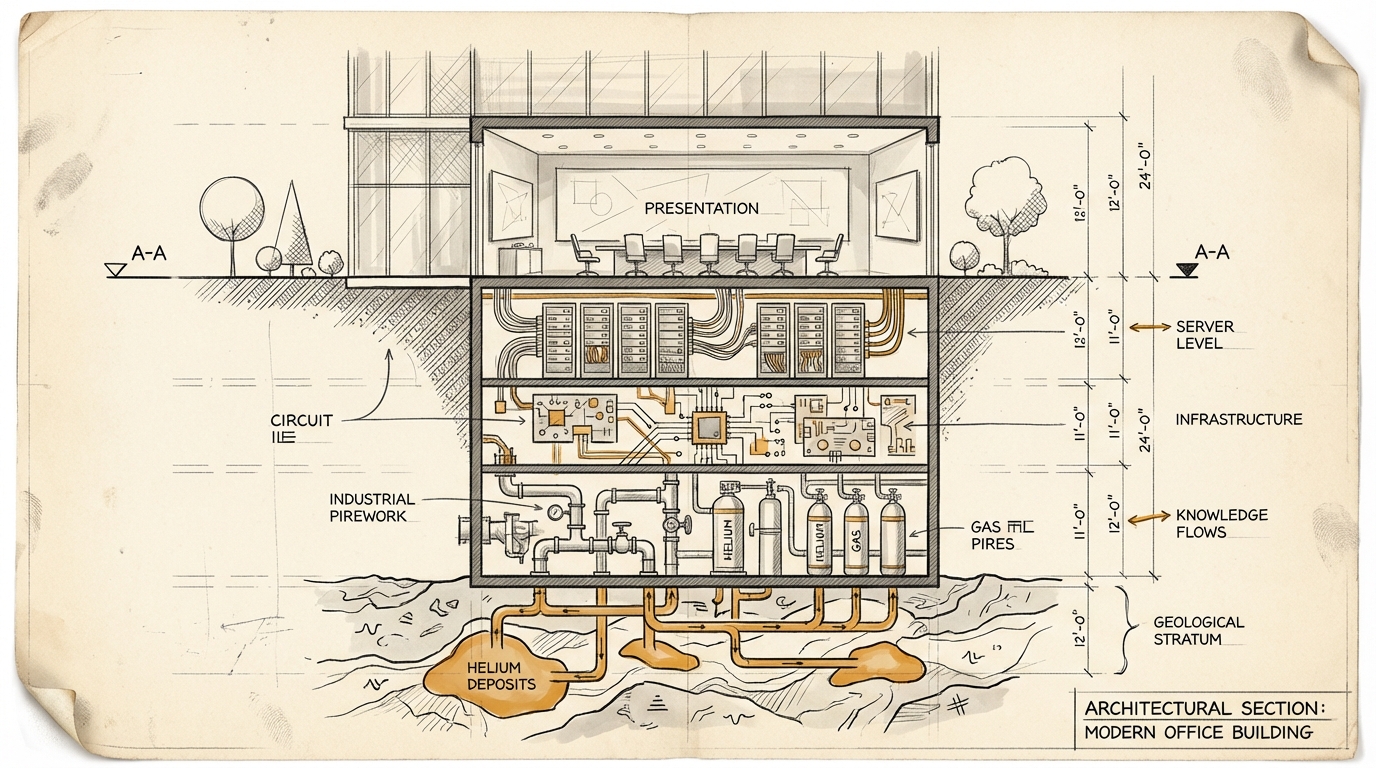

The transmission chain: Helium drops out, EUV lithography machines can't operate, chip production slows, memory prices spike (DRAM already +170%, DDR5 quadrupled), servers become more expensive and scarcer, cloud capacity gets rationed or repriced, AI workloads cost more, your business case no longer works.

At the same time: Brent at $126, European LNG prices doubled. The datacenters that are still running cost more to operate.

Not one crisis. Multiple crises. Simultaneously.

The Silent Premise

Every AI business case of the last two years has a line item "compute costs" with an implicit downward arrow. Nobody modeled the scenario "compute gets more expensive." That's not a disgrace. The entire industry didn't.

But it reveals something about how we plan AI investments: we plan on the surface of the stack. Cloud providers, API costs, GPU instance types. The physical chain underneath is invisible. Which chip, from which fab, using which raw material, from which region. Six links from your API call to the Persian Gulf, and no decision-maker had the chain on their radar.
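One way to get the chain onto the radar is to write it down as a record with one field per link. The following is a minimal sketch in Python, not an established schema: the `SupplyChainRecord` class, its field names, and the example values are all hypothetical. The six fields follow the six links named in this briefing (cloud provider, GPU, chip, fab, region, raw material).

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class SupplyChainRecord:
    """One AI workload, traced from the API call down to the raw material.

    Field names are illustrative assumptions, not a standard.
    """
    cloud_provider: Optional[str] = None
    gpu: Optional[str] = None
    chip: Optional[str] = None
    fab: Optional[str] = None
    region: Optional[str] = None
    raw_material: Optional[str] = None


def unknown_links(record: SupplyChainRecord) -> list[str]:
    """Return the names of the links that nobody has filled in yet."""
    return [f.name for f in fields(record) if getattr(record, f.name) is None]


# Hypothetical workload: only the two surface links are known.
workload = SupplyChainRecord(cloud_provider="example-cloud", gpu="example-gpu")
print(unknown_links(workload))
```

For this made-up workload the function returns the four deeper links. That list is the part of the terrain nobody has looked at yet.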

In Issue #4 I wrote about industries that don't exist for AI agents because they have no API. Helium is the next layer: an industry that doesn't exist for most people. Almost no director-level decision-maker in the DACH region thinks about helium. But helium determines whether the hardware on which their AI strategy depends gets delivered.

The invisible economy has a physical layer. And it just broke.

Three Focal Points

Instead of an abstract industry analysis: three concrete perspectives that show how differently the chain hits, but how similar the pattern is.

An MRI scanner in a German hospital

A conventional MRI scanner requires 1,500 to 2,000 liters of liquid helium for initial superconductor cooling. Roughly 3,200 MRI units are installed in Germany (Deutsche Krankenhausgesellschaft, 2025). If 10% of them, 320 scanners, fail because no helium is available for refilling after a quench, roughly 8,000 examinations are lost per day. About two million per year. Delayed cancer diagnoses. Postponed surgical planning.
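The arithmetic behind those figures can be retraced in a few lines. This is a back-of-the-envelope sketch. The installed base and the 10% scenario come from the text. The 25 examinations per scanner per day and the 250 operating days per year are assumptions, chosen because they are what the stated totals imply.

```python
installed_units = 3_200          # from the text (DKG, 2025)
failure_share = 0.10             # scenario from the text
exams_per_scanner_per_day = 25   # ASSUMPTION: implied by 8,000 / 320
operating_days_per_year = 250    # ASSUMPTION: implied by 2,000,000 / 8,000

failed_units = round(installed_units * failure_share)
missed_per_day = failed_units * exams_per_scanner_per_day
missed_per_year = missed_per_day * operating_days_per_year

print(f"{failed_units} scanners down")
print(f"{missed_per_day:,} examinations lost per day")
print(f"{missed_per_year:,} examinations lost per year")
```

Change either assumption and the totals move proportionally. The order of magnitude stays the same.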

That's the direct helium dependency. Then there's the indirect one: the chips inside the MRI scanner need helium for manufacturing. The AI diagnostics built on top of the scanner need GPU compute that runs on chips that need helium. Triple chain, triple vulnerability.

Helium-free high-field MRI systems (3 Tesla, the clinical standard) are realistic no earlier than 2031 according to Fraunhofer IPA. Philips has BlueSeal, an approach that requires only 7 liters instead of 1,500, but so far it only works for low-field systems. For the installed base and high-field diagnostics, there's no alternative for three to five years.

The MedTech example shows the cascade most tangibly: from noble gas to cancer diagnosis in three steps.

A semiconductor fab in Dresden

A 300mm fab consumes 2,000 to 3,000 liters of gaseous helium per day. For leak testing (no other gas delivers the sensitivity), for backside cooling in etch tools (without helium, wafer temperature drifts, yield drops 15 to 25%), for CVD processes as a carrier gas.

The bulk tank holds 8,000 liters. Enough for ten to twelve days. Recycling infrastructure: rarely in place so far. Why? Because helium was cheap enough that the investment didn't pay off. A recovery system costs 2 to 4 million euros. At old prices, the ROI was beyond five years. At today's spot price, under a year. But installation takes twelve to eighteen months.
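The payback logic can be made explicit with simple payback arithmetic (capex divided by annual saving). This is a sketch under stated assumptions: it ignores discounting and operating costs, takes "beyond five years" and "under a year" at their boundary values, and the function name `implied_annual_saving` is made up for illustration.

```python
def implied_annual_saving(capex_eur: float, payback_years: float) -> float:
    """Simple payback, solved for the annual saving: capex / payback years."""
    return capex_eur / payback_years


for capex in (2_000_000, 4_000_000):           # cost range from the text
    old = implied_annual_saving(capex, 5)      # payback boundary at old prices
    new = implied_annual_saving(capex, 1)      # payback boundary at spot price
    print(f"capex {capex:>9,} EUR: saving below {old:,.0f} EUR/yr before, "
          f"above {new:,.0f} EUR/yr now")

# Time from purchase decision to break-even = installation + payback.
install_months = (12, 18)      # from the text
payback_months_max = 12        # "under a year", taken at its upper bound
worst_case_months = install_months[1] + payback_months_max
print(f"break-even up to {worst_case_months} months after the decision")
```

Under these assumptions, even a payback of under a year puts break-even up to two and a half years after the purchase decision, because installation comes first.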

The public debate often conflates leading-edge and mature-node fabs, and the difference matters here. EUV scanners consume 1,500 to 2,500 liters of helium per hour. A fab with ten EUV scanners needs orders of magnitude more than a DUV fab. German fabs (Infineon Dresden, Bosch Reutlingen) produce on mature nodes (28nm and larger) and are affected, but not existentially threatened in the short term. Long term, that's different.

Because: the Amur Gas Processing Plant in Russia (Gazprom) has a planned helium capacity of 60 million cubic meters per year. That's 37% of previous global production. This helium goes to China. Not to Europe. SMIC is building three new fabs for mature-node chips in parallel. With Russian helium, with industrial electricity 40 to 50% below European levels, with state subsidies.

An industry insider puts it this way: "Europe is investing 43 billion in fab capacity and forgetting to secure the supply of materials needed to operate those fabs. It's like building a highway and forgetting to plan gas stations." (SEMI Fab Forecast, BGR DERA Rohstoffinformationen Nr. 40, Silicon Saxony)

A retail group with a 47 million euro AI investment

Cloud costs at 3.2 million euros per month. GPU spot availability for training: down 60 to 70% since February 28. The personalization engine generates 4.2% uplift on basket value. On 2.3 billion euros in online revenue, that's roughly 58 to 65 million euros in real terms. The business case was solid.

Now the double squeeze: compute costs are rising (Gartner: +8 to 12% for public cloud in Europe), and at the same time the pie to which the uplift applies is shrinking (ifo Institut: inflation forecast raised to 3.8%, GfK: consumer sentiment down 8 to 12 points for Q3).

On top: Temu and Shein operate with structurally lower infrastructure costs. Domestic cloud, own chip production, subsidized energy. When your compute costs rise and theirs don't, the competitive advantage shifts further.

The CDO of a major German retail group sums it up in one sentence: "We built our entire digital strategy on the assumption that compute costs keep falling. Nobody modeled 'compute gets more expensive.'"

The Real Shift

What connects the three focal points: it's not about helium. It's about the architecture of the system.

Charles Perrow described in 1984 in "Normal Accidents" why certain systems inevitably produce cascades. Not because people fail, but because the system architecture forces cascades. His criterion: systems that are simultaneously complex AND tightly coupled. Nuclear power plants. Petrochemistry. And, if you trace the chain: the global AI infrastructure.

Tightly coupled: every link depends directly on the previous one. Helium drops out, chips get scarce, servers get scarcer, cloud gets more expensive, your business case tips. No buffer in between. Fab stockpiles: ten to twelve days.

Complex: energy affects fabs AND cloud simultaneously. Geopolitics affects helium AND oil AND shipping routes simultaneously. Memory prices affect servers AND embedded systems AND smartphones simultaneously. The interactions are non-linear, unpredictable, and not accurately captured in any risk model.
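Both properties can be illustrated with a small dependency graph. The sketch below is an assumption-laden toy, not a risk model: the node names paraphrase the chain described in this briefing, and the edges are my simplification. A breadth-first walk shows how far a single failure travels (tight coupling). Counting nodes that are hit through more than one parent shows where effects pile up (complexity).

```python
from collections import deque

# Edges point from a component to everything that depends on it directly.
# Illustrative graph only; node names and edges are assumptions.
DEPENDENTS = {
    "gulf_conflict": ["helium", "energy", "shipping"],
    "helium": ["chip_production"],
    "energy": ["chip_production", "cloud_capacity"],
    "shipping": [],
    "chip_production": ["memory", "servers"],
    "memory": ["servers"],
    "servers": ["cloud_capacity"],
    "cloud_capacity": ["ai_workloads"],
    "ai_workloads": ["business_case"],
}


def cascade(start: str) -> dict[str, int]:
    """Breadth-first walk: map each affected node to its distance from the failure."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for dependent in DEPENDENTS.get(node, []):
            if dependent not in depth:
                depth[dependent] = depth[node] + 1
                queue.append(dependent)
    return depth


def multiply_exposed(affected: dict[str, int]) -> list[str]:
    """Nodes reached through more than one affected parent."""
    hits: dict[str, int] = {}
    for node in affected:
        for dependent in DEPENDENTS.get(node, []):
            hits[dependent] = hits.get(dependent, 0) + 1
    return sorted(name for name, count in hits.items() if count > 1)


print(cascade("helium"))
print(multiply_exposed(cascade("gulf_conflict")))
```

In this toy graph, a helium failure alone reaches the business case with no node in between that could absorb it. Starting from the conflict instead, chip production, servers, and cloud capacity are each hit from two directions at once.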

The reinsurance industry sees this most clearly, because modeling exactly these correlations is their business. And the message from there is uncomfortable: the correlation matrix that European insurers use for their portfolios is not calibrated for this scenario. Industries considered independent (automotive, medical technology, energy, retail, finance) are all connected through the same semiconductor supply chain.

$420 billion in committed hyperscaler capex. A third of the helium gone. The reinsurers' question: who carries the risk? Their answer: right now, nobody. Not consciously.

Honesty Check

Four points where this analysis could be wrong.

First: a ceasefire could come quickly. If hostilities end within weeks, supply normalizes within months. But: 14% of capacity is permanently damaged. Even in the best case, the insight remains: the infrastructure hangs by a thread. And the next disruption will come at a different point.

Second: technology compression could help. Google TurboQuant reduces memory requirements for inference by 70%. Quantized models, model distillation, more efficient architectures. That's real and relevant. But: these technologies primarily benefit companies already operating at scale. For mid-market companies just getting started, they're an option, not a lifeline.

Third: German companies are less cloud-dependent than American ones. Many DACH companies run their own datacenters. The cloud cost exposure is real, but not the same for everyone. However: the energy cost effect hits everyone. And the hardware for private datacenters is getting just as scarce and expensive.

Fourth: this topic might be too far from AI transformation. Helium geopolitics is not what you read dekodiert for. But that's exactly the point: knowing your TERRAIN means knowing the constraints on which the strategy is built. Those who don't know their terrain invest blind. And this constraint just shifted fundamentally.

What you should be checking now

Not: "Stop everything." That would be the panic response, and panic is just as wrong as ignorance.

1. Stress-test your business cases. What compute cost assumptions are baked into your AI business case? Run it at +30%, +50%, and +80%. Which projects survive all three scenarios? Those are the ones that should keep running. The rest need a Plan B.

2. Switch from expansion to allocation. When compute gets scarcer and more expensive, the question shifts from "Which AI projects do we launch?" to "Which projects are worth the compute cost?" That's a taste/evaluation problem. Prioritize instead of democratize. Double down on the five use cases with the best return per compute euro, pause the rest.

3. Make the chain visible. Which cloud provider? Which GPU? Which chip? Which fab? Which region? Which raw material? Most of you know the first two links. The other four are the terrain that just shifted. Knowing the API call isn't enough. You need to know the chain.

4. Re-evaluate local. Edge computing, on-premise inference, quantized models. When cloud gets more expensive, local becomes relatively more attractive. For the DACH region with its data privacy sensitivity, this could be a structural advantage. But: the hardware for it depends on the same supply chain. If you want to order, do it now, not in three months.

5. Think energy and compute together. AI compute is energy consumption. Energy costs are rising. If you separate your AI strategy from your energy strategy, you have two strategies that don't know each other. That's a luxury nobody can afford right now.
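Points 1 and 2 can be run as a quick spreadsheet-style calculation. Here is a minimal sketch in Python. The `Project` class, the project names, and every euro figure are invented for illustration. Only the three scenarios (+30%, +50%, +80%) and the "return per compute euro" ranking come from the checklist above.

```python
from dataclasses import dataclass

SCENARIOS = (0.30, 0.50, 0.80)  # compute cost increases from the checklist


@dataclass
class Project:
    name: str
    annual_benefit_eur: float   # expected yearly return
    annual_compute_eur: float   # yearly compute cost at today's prices

    def net(self, compute_increase: float = 0.0) -> float:
        """Yearly benefit minus compute cost after the given increase."""
        return self.annual_benefit_eur - self.annual_compute_eur * (1 + compute_increase)

    def survives_all(self) -> bool:
        """True if the project stays positive in every scenario."""
        return all(self.net(s) > 0 for s in SCENARIOS)

    def return_per_compute_euro(self) -> float:
        return self.annual_benefit_eur / self.annual_compute_eur


# Hypothetical portfolio; all numbers are made up.
portfolio = [
    Project("demand_forecast", 10_000_000, 4_000_000),
    Project("support_chatbot", 2_900_000, 2_000_000),
    Project("document_search", 1_200_000, 200_000),
]

# Step 1: keep only what survives all three scenarios.
survivors = [p for p in portfolio if p.survives_all()]

# Step 2: rank survivors by return per compute euro and keep the top five.
ranked = sorted(survivors, key=Project.return_per_compute_euro, reverse=True)[:5]

for p in ranked:
    print(f"{p.name}: {p.return_per_compute_euro():.1f} EUR per compute EUR, "
          f"net at +80%: {p.net(0.80):,.0f} EUR")
```

In this invented portfolio, the chatbot is positive today and at +30% but turns negative at +50%, so it drops out. A real business case would add non-compute costs and revenue risk. The structure of the test stays the same.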

DIY dekodiert

Three thinking tools for this briefing. Copy them, paste them into the AI of your choice, and explore your own situation in the conversation. Details and the full prompts are on dekodiert.de.

Prompt 1: The Chain Finder. Have the AI uncover the physical dependencies of your AI strategy. From the cloud bill back to the atoms. By the end, you'll know which links in the chain carry your entire AI portfolio.

Prompt 2: The Business Case Stress Test. Take a specific AI business case and run it through three scenarios: +30%, +50%, +80% compute costs. Not whether AI works, but whether the economics still hold.

Prompt 3: The Perrow Test. Where is your system complex AND tightly coupled? Where does a single failure produce cascades that don't show up in monitoring? The most interesting couplings are the ones between systems owned by different departments.

Framing

This is a special briefing. It comes off-cycle because the topic is time-sensitive. Regular issues of dekodiert continue on their usual schedule.

In previous issues, I've argued that AI shifts value from production to orchestration. That specification and evaluation are the bottlenecks. That machine-readable context is the prerequisite. That cheaper execution makes more ambition profitable, not less.

None of that has become wrong. But all of it has acquired a boundary condition that was previously invisible: the physical infrastructure on which AI runs is more fragile than assumed. And it just got a stress test that nobody had planned for.

The question is not whether AI works. The question is what it costs, who can afford it, and how long the infrastructure needs to deliver.

If you don't address this in your next board meeting, you haven't understood the terrain.

Sources: Gasworld, CNBC, Fortune, C&EN, Fitch Ratings, IDC, USGS, IEA, SEMI World Fab Forecast, BGR DERA Rohstoffinformationen Nr. 40, BVMed, ZVEI, Fraunhofer IPA/IPMS, DKG, OECD Health Statistics, Silicon Saxony, ifo Institut, Gartner IT Spending Forecast, GfK Consumer Climate, VDA, Gazprom/Neftegaz.ru, Swiss Re sigma, Lloyd's Systemic Risk Scenarios. Full source overview at dekodiert.de.

This briefing is based on a board research package with 18 named primary sources, supplemented by a DACH-specific analysis from six industry perspectives (automotive, MedTech, semiconductors, consulting, insurance, retail), each with its own source work.
