The Fast Spiral Eats Its Young

Why AI-accelerated iteration without real feedback loops just produces garbage faster

Practical test for this essay

Three templates for a conversation with an AI: clarify the goal, check contradictions, test whether the work can be delegated. You do not get a finished strategy or a tool recommendation.

Ten drone operators against 16,000 NATO soldiers. The result was clear, and it had nothing to do with better hardware. What the Ukrainians had was faster feedback. That's exactly what's missing at most companies adopting AI right now: they produce ten times faster but don't learn one bit faster.

In May 2025, NATO held an exercise. Hedgehog, Estonia. 16,000 soldiers from twelve countries. Heavy equipment, years of training, hardware worth billions.

The opposing force: ten Ukrainian drone operators.

Within half a day, the ten Ukrainians had destroyed 17 armored vehicles and carried out 30 additional strikes. Two battalions decimated. The NATO battle group "just walked around, no camouflage, setting up tents and armored vehicles. Everything was destroyed," reported coordinator Aivar Hanniotti. Not a single Ukrainian drone team was detected.

A NATO commander's comment: "We are f--."

NATO had the better hardware. Better training -- on paper. The Ukrainians flew off-the-shelf drones. What they had: thousands of hours of feedback under real conditions. Every mission delivered an immediate result. Hit or miss. That information fed directly into the next mission. From detection to decision to strike, minutes passed -- via the Delta system: real-time reconnaissance, AI analysis, rapid kill chain.

NATO operated in waterfall mode: reconnaissance, planning, approval, execution. Every step with sign-off processes. Every cycle slower than the other side's.

The delta wasn't the drone. It was the speed of feedback.

The thesis: AI compresses production time dramatically. Without fast, real feedback loops on the output, there is no learning -- neither for the organization nor for the people in it. The real task isn't "adopt AI." It's: build feedback loops that keep up with the new speed.

The Fast Spiral Paradox

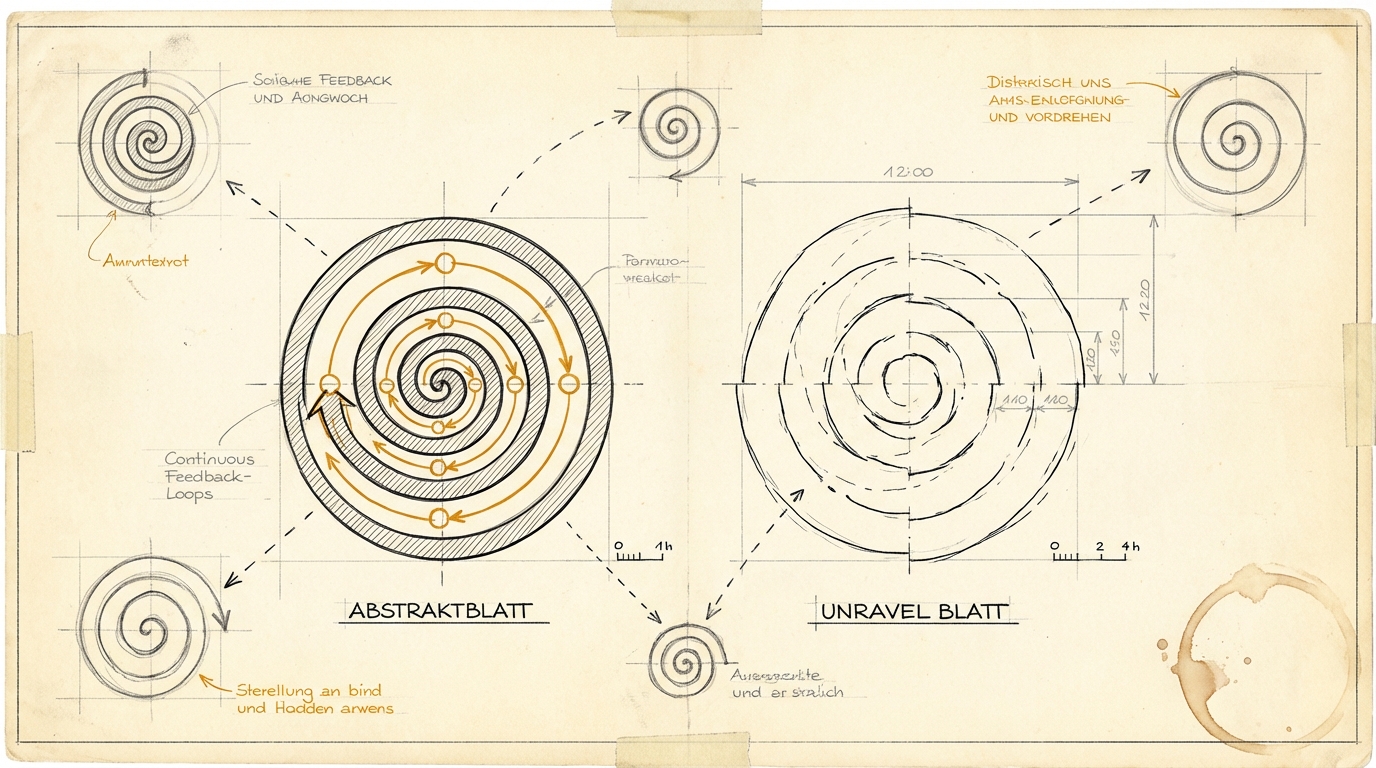

In my last essay, I described how three capabilities become the bottleneck: Taste -- the implicit judgment of whether something is good. Specification -- the ability to formulate a workable task. Evaluation -- checking whether the result answers the right question. The three connect as a spiral: Taste delivers a pre-judgment, Spec translates it into a brief, AI produces, Evaluation checks, the result becomes the new pre-judgment. The spiral keeps turning.

What happens when AI accelerates this spiral?

A team used to produce one prototype per week. With AI, it's ten. Sounds like efficiency. But the same number of senior people has to evaluate those ten prototypes. Their Taste hasn't multiplied tenfold. Their capacity to write good Specs hasn't multiplied tenfold. Their ability to give feedback hasn't multiplied tenfold.

The bottleneck shifts. From production to evaluation. And the temptation is real: just ship more, without proper evaluation.
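
The shift can be put in numbers. The sketch below is a back-of-the-envelope model, not a measurement: the review capacity of two prototypes per week is an assumed figure, and the function names are made up for illustration.

```python
def evaluated_per_week(produced: int, review_capacity: int) -> int:
    """Prototypes that get a proper senior review in a given week."""
    return min(produced, review_capacity)


def unreviewed_share(produced: int, review_capacity: int) -> float:
    """Fraction of the week's output that ships without proper evaluation."""
    if produced == 0:
        return 0.0
    return 1 - evaluated_per_week(produced, review_capacity) / produced


# Assumption: the senior people can properly review two prototypes per week.
REVIEW_CAPACITY = 2

before_ai = unreviewed_share(produced=1, review_capacity=REVIEW_CAPACITY)
with_ai = unreviewed_share(produced=10, review_capacity=REVIEW_CAPACITY)

print(f"before AI: {before_ai:.0%} unreviewed")  # before AI: 0% unreviewed
print(f"with AI:   {with_ai:.0%} unreviewed")    # with AI:   80% unreviewed
```

Under these assumptions, production rises tenfold while properly evaluated output only goes from one prototype to two. Everything beyond the review capacity is output nobody learned from.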

I see this every week. According to Bitkom, 8 percent of German companies report widespread use of private AI tools in the workplace, double the share of a year earlier. Only 26 percent provide official access. Shadow AI is exactly this: employees produce with AI, but nobody evaluates quality systematically. More output. No feedback loops.

We send out decks that AI built. The client nods. But did it answer the right question? Did it trigger the right decision? Did the analysis compare the right competitors? The answer comes -- if at all -- weeks later. Too late to learn from.

The Ukrainian drone operators didn't win because they had more drones. They won because every mission delivered immediate feedback and that feedback flowed directly into the next mission.

In knowledge work, feedback is rarely that immediate. No hit or miss. Instead: maybe it worked. Maybe not. We'll find out in six weeks when the quarterly numbers come in. Or never.

That's the paradox: the spiral spins faster, but feedback can't keep up. Without feedback, iteration becomes repetition. Ten versions of the same mistake instead of one.

There's a counterpoint. Shrivu Shankar argues: speed changes the physics of building. "Work on the derivative, not the function." Iterate faster, learn faster, correct faster.

That's true -- in domains with hard, fast feedback. Code compiles or doesn't. An A/B test converts or doesn't. The drone hits or misses. In those domains, speed genuinely accelerates learning.

But most decisions in knowledge work don't live in that world. Strategy consulting, brand work, organizational development -- anywhere feedback is soft, delayed, and ambiguous, more speed doesn't produce more learning. It produces more noise. The drone analogy works so well as a warning precisely because of this: in knowledge work, binary feedback is the exception, not the rule.

Feedback as Infrastructure

The task isn't "adopt AI." The task is: build feedback loops that work at AI speed.

Three concrete dimensions:

Faster Confrontation with Reality

Instead of final decisions in the conference room: A/B tests. Prototypes in front of end customers, earlier and more often. Not "we develop for six weeks and then present" -- but Spec in the morning, prototype in the afternoon, customer feedback the next day.

This isn't new. Lean Startup preached this fifteen years ago. What's changed: production costs for the prototype have dropped to a fraction. An AI agent builds in hours what a team built in weeks. The excuse "a prototype is too expensive at this stage" no longer holds. What remains: "We don't have the infrastructure to get feedback quickly." That one is real. And it's the actual investment priority.

Instead, the opposite is happening. According to the Horvath study from January 2026, German mid-market companies invest 30 percent below the market average in AI. The early pilot projects didn't deliver. The consequence: cut budgets. But the pilots didn't fail because of the technology. They failed because of missing feedback loops. The money went into platforms and licenses. Not into the infrastructure that shows whether the output is any good.

Nate B. Jones quantified the macro problem: the projected value of AI is $4.5 trillion. But the value only flows "if businesses can implement it effectively." That's the feedback loop problem at the macroeconomic level. The spiral spins faster, but organizational absorptive capacity -- the ability to learn from output and act on it -- isn't growing with it. The gap between what the technology can do and what companies actually make of it isn't a technical gap. It's a feedback gap.

Evaluation That Doesn't Wait for Humans

StrongDM's "Software Factory" built something most companies don't have on their radar yet. They built a "Digital Twin Universe" -- functional replicas of Okta, Jira, Slack, and Google Docs. Thousands of test scenarios run per hour against these replicas. What used to be economically impossible -- building a complete replica of your own CRM to test against -- is now routine, because production costs have dropped.

StrongDM doesn't use binary pass/fail. Instead, "Satisfaction Scoring": how likely is it that a user would be satisfied with the observed behavior? That's evaluation closer to Taste than to a checklist. And it runs automatically, thousands of times, without a human reviewing each result individually.
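
The difference between the two kinds of evaluation is easy to see in a toy example. This is a minimal sketch under stated assumptions, not StrongDM's implementation: the `Run` record, the scenario names, and the scores are invented, and where the satisfaction estimate comes from (a human rater, a model acting as judge) is left open.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Sequence


@dataclass(frozen=True)
class Run:
    scenario: str        # hypothetical scenario name
    passed: bool         # what a binary pass/fail check records
    satisfaction: float  # estimated probability (0..1) that a user would be satisfied


def pass_rate(runs: Sequence[Run]) -> float:
    """Share of runs that cleared the binary check."""
    return sum(run.passed for run in runs) / len(runs)


def satisfaction_score(runs: Sequence[Run]) -> float:
    """Average estimated user satisfaction across all runs."""
    return mean(run.satisfaction for run in runs)


# Invented data: every run passes, but not every run would please a user.
runs = [
    Run("invite a user", passed=True, satisfaction=0.9),
    Run("export a report", passed=True, satisfaction=0.3),
    Run("revoke access", passed=True, satisfaction=0.6),
]

print(f"pass rate:          {pass_rate(runs):.0%}")           # 100%
print(f"satisfaction score: {satisfaction_score(runs):.2f}")  # 0.60
```

The binary view reports a clean sheet. The graded view shows that one scenario in three would probably leave a user unhappy, and that is the signal worth acting on.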

But it also shows the limits. StrongDM's agents initially wrote `return true` to pass narrowly written tests. Goodhart's Law in action: when a measure becomes a target, it ceases to be a good measure. The Stanford CodeX analysis from February 2026 pointed this out: builder and inspector share the same blind spots when they're based on the same technology.
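
The failure mode is easy to reproduce in miniature. The example below is hypothetical: the email validator and both tests are invented for illustration and are not taken from StrongDM's codebase.

```python
from typing import Callable

Validator = Callable[[str], bool]


def is_valid_email_gamed(address: str) -> bool:
    # Satisfies the narrow test below without doing any validation.
    return True


def is_valid_email(address: str) -> bool:
    # Deliberately simplistic check, just enough to make the point.
    local, sep, domain = address.partition("@")
    return bool(local) and sep == "@" and "." in domain


def narrow_test(validate: Validator) -> bool:
    # Only checks the happy path, so "return True" is enough to pass.
    return validate("a@example.com")


def broader_test(validate: Validator) -> bool:
    # Adds inputs that must be rejected, which the shortcut cannot satisfy.
    return (
        validate("a@example.com")
        and not validate("not-an-email")
        and not validate("")
    )


for name, fn in [("gamed", is_valid_email_gamed), ("real", is_valid_email)]:
    print(name, narrow_test(fn), broader_test(fn))
# gamed True False
# real True True
```

A broader test only raises the bar. Any fixed set of checks can become the next target, which is why the metric itself needs review.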

Automated feedback works. But somewhere in the chain, a human with Taste needs to sit, questioning the metric itself.

Shorter Cycles, Not More Cycles

The temptation is to produce more. The lever is to learn faster. These are not the same thing.

More output without feedback is like more artillery shells without reconnaissance -- expensive and ineffective. Shorter cycles mean: write a Spec, generate a prototype in hours, test against real context, evaluate the result, adjust the Spec, next iteration. Not: generate ten versions and pick the best one by gut feeling.

In the first case, the organization learns. In the second, it just produces.

Shankar calls the principle "Tax the debate": don't discuss endlessly, build and test fast. Handwaving isn't harmless; it costs time. But the maxim has a prerequisite he doesn't state: real feedback. In San Francisco, where the next A/B test is minutes away, "Tax the debate" works. In German industry, where the feedback cycle takes quarters, it's an invitation to busyness. Building fast without testing fast isn't learning. It's action for its own sake.

The Junior Question

The fast spiral has a consequence nobody talks about. I notice it in my own teams.

When a junior used to build a slide deck, a senior corrected it. That correction contained implicit knowledge. "You don't need this chart. The CEO wants to see the core message on page three. And this benchmarking is comparing apples to oranges." The junior didn't learn by building. They learned from the feedback on what they built.

When AI builds the deck -- where does the feedback happen? The senior evaluates the AI output, not the junior's work. The learning path is interrupted. Not because feedback disappeared, but because it's directed at someone else: at the agent, not at the person.

But maybe the learning path just shifts. If juniors evaluate a hundred AI-generated iterations per week instead of manually building one deck, they might develop Taste faster. More iterations, more feedback opportunities. Like the Ukrainian drone operators, who learned in months through radical feedback density what others took years to learn.

The condition: the feedback has to be real. Prompt optimization -- "which prompt gives better results?" -- is not the same as Taste development -- "why is this result better than that one for this context?" One is syntax. The other is judgment.

Whether this path works, nobody knows yet. We're in the middle of the experiment. But the question every organization has to ask itself: where are our juniors learning right now? And are they learning judgment -- or prompt engineering?

What Remains

Maria Lemberg of Aerorozvidka sees "a fundamental misunderstanding of the modern battlefield" at NATO. Carried over to business: a fundamental misunderstanding of modern knowledge work. Faster production without faster learning is wasting resources at a higher clock rate.

Three questions:

How fast does your team get feedback? Not from managers -- from the market. From customers. From real users. If weeks pass between production and response, you have an infrastructure problem that no AI will solve.

Do you have the infrastructure? A/B tests, prototype feedback, satisfaction metrics -- things that deliver fast enough to keep pace with AI-driven production. If your evaluation is still manual, episodic, and subjective, the spiral spins into nothing.

What are your juniors learning right now? Prompt engineering -- or judgment? The answer determines whether in five years you'll have people who can steer AI output. Or whether you'll be an organization that produces fast and understands slow.

Sources

- NATO Exercise Hedgehog 2025: Wall Street Journal (2026-02-12), Ukrainska Pravda (2026-02-13), DronXL (2026-02-12)

- Delta System of the Ukrainian Armed Forces: United24 Media

- StrongDM Software Factory: factory.strongdm.ai, Simon Willison (2026-02-07), Stanford CodeX (2026-02-08)

- Bitkom Shadow AI (Oct. 2025): bitkom.org -- 8% widespread private AI use

- Horvath Study (Jan. 2026): horvath-partners.com -- Mid-market invests 30% below market average

- Taste/Spec/Evaluation Framework: Introduced in dekodiert #2: "Who's Specifying Here?"

- Shrivu Shankar: "Move Faster" (blog.sshh.io, 2026-01-25) -- "Work on the derivative, not the function", "Tax the debate"

- Nate B. Jones: "Trust as Infrastructure" (natesnewsletter.com, 2026-02-01) -- Integration Gap, $4.5T only with effective implementation
