The Most Expensive Conflation: Tasks and Jobs

Four out of ten companies cut jobs because of AI. More than half regret it. More than a third rehired over half of the people they let go, and a third paid more for rehiring than the savings were worth. The data shows a pattern more expensive than any wrong tool choice: companies analyze at the task level and decide at the job level. The analysis is usually right. The decision is not.

Practical test for this essay

Three templates for a conversation with an AI: clarify the goal, check contradictions, test whether the work can be delegated. You do not get a finished strategy or a tool recommendation.

Open the templates

So the point of this essay is not just to criticize premature layoffs. It is to correct a conceptual confusion that is getting expensive in many companies. If you want to introduce AI sensibly, it is not enough to break tasks down cleanly. You need to understand what holds a job together beyond its visible tasks.

1,000 Business Leaders, One Lesson

The Orgvue study surveyed 1,000 business leaders. The numbers are clear.

Four out of ten decision-makers cut jobs because of AI. 55% regret the decision. 35.6% rehired more than half of the people they let go. A third spent more on rehiring than the savings were worth. These are not outliers. These are data points in a pattern that cuts across industries.

And the pattern repeats with different numbers from different sources.

Thomas Davenport of Babson and MIT surveyed 1,006 global executives for the Harvard Business Review. 60% reduced headcount because of AI's potential, not because of actual AI implementation. Cuts tied to actual implementation: 2%. Two percent. The other 58 percentage points cut on the strength of a promise. That is like clearing the warehouse before the new supply chain is in place. In theory, everything arrives on time. In practice, you are standing in front of empty shelves, calling the old supplier – who now charges a premium.

The Washington Times reports that companies are rehiring customer service roles they replaced with AI. The AI could handle every single request. Every task technically solved. Ticket open, solution found, ticket closed. But it could not understand the context in which the requests originated. Could not recognize when a frustrated customer was about to leave. Could not see why the same complaint came in for the third time and what that says about a systemic process problem two departments away. The tasks ran. The job did not.

The regret does not come from the wrong technology. It comes from the wrong granularity.

The companies analyzed at the task level: Can an agent handle this task? And decided at the job level: So we do not need the position anymore. The first question was answered correctly. The second question was never asked.

What Separates a Task from a Job

A task takes two hours. A job takes 18 months. The difference is not duration. The difference is context.

A task has defined input and defined output. It can be executed in isolation, repeated, standardized. An agent can handle it – often faster and more consistently than a human. That is the floor I described in the last piece. AI raises the floor. Every single task becomes faster, cheaper, more reliable. That is real. That is measurable. That is the part everyone sees.

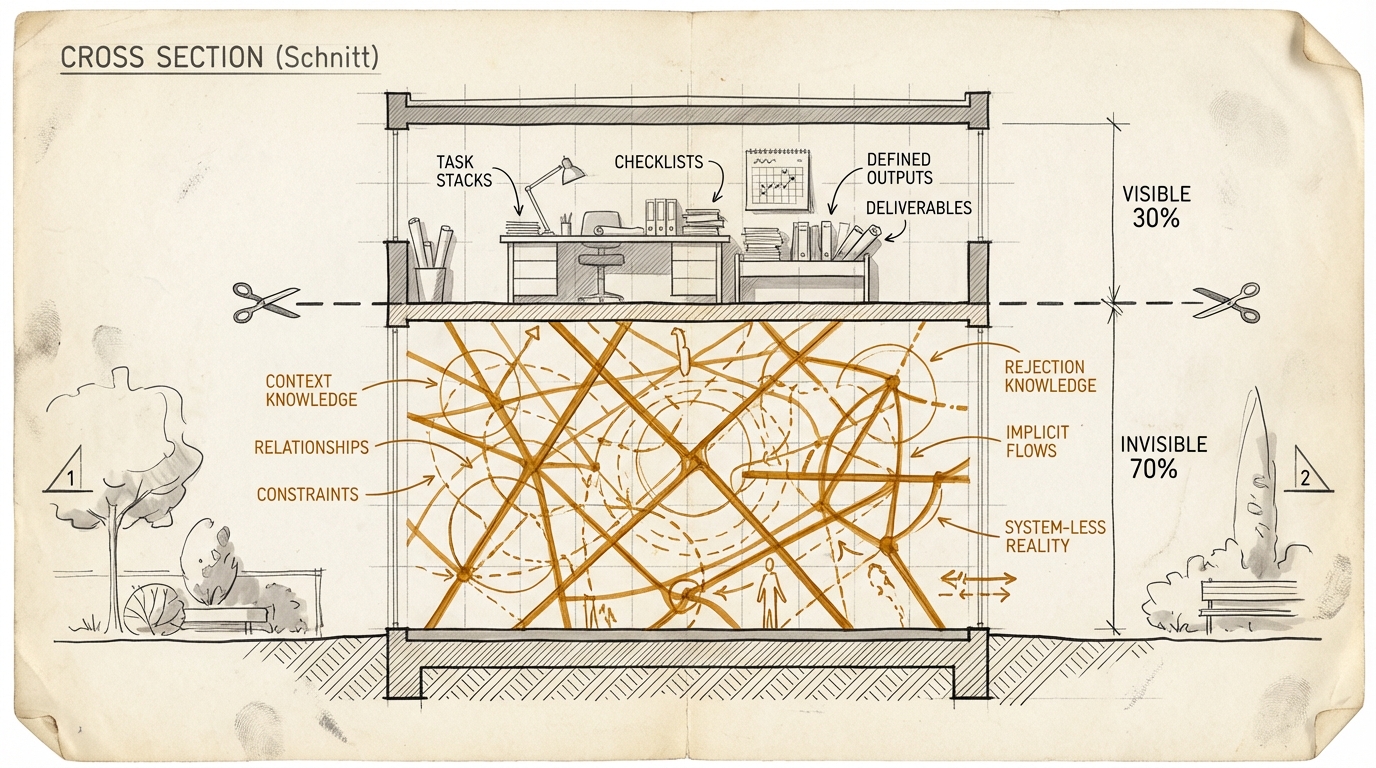

A job is something else. And let us be honest: we all know this. A job is a bundle of tasks, context, and judgment. The visible tasks might account for 30%. The other 70% is invisible, precisely because it does not fit into task lists. What belongs to that 70%: knowing which tasks should happen in the first place and which ones are better left alone. Setting priorities when three urgent things land on the desk at once and the fourth has not been spotted by anyone yet. Recognizing exceptions that no rulebook covers. Escalating before a problem becomes a crisis. Reading the organizational context – who is under pressure right now, which project is politically sensitive, why the board is not the right audience for bad news this week.

This is not sentimentality about human work. This is a functional description of what separates a job from a task list. Tasks are floor. Jobs are, at their best, ceiling. An agent reaches the floor of every single task. But the job level – context, judgment, priority – that is ceiling. Cut there, and you cut at the ceiling.

The Buyer Who Exists in No System

An example I see in some version in almost every company.

Take the buyer. In theory, an agent could handle procurement. Trigger orders, compare prices, contact suppliers, evaluate quotes, pre-fill contracts – every single task doable. Some of it already today. But the buyer knows things that exist in no system.

He knows that Supplier B is more expensive but does not take four weeks for rush orders, because he has had a direct line to their dispatcher for eight years. That Supplier A missed specs three times last quarter but was never formally flagged, because the executive team needs the relationship for a joint venture next year. That the new colleague in quality management is stricter than her predecessor, and for certain parts it is better to go with the pricier supplier before there is trouble that ends up on the CEO's desk.

None of that is in a CRM. Not in an ERP. Not in a wiki. Not in a Confluence space. It is in the buyer's head. And the buyer is gone now. Along with everything he learned over the years but never wrote down – because nobody asked him to, and because he had no incentive to make himself replaceable.

Knowledge in the Head

Don Norman gave us a simple, useful distinction for this in 1988: "Knowledge in the Head" and "Knowledge in the World." In product design, the point is straightforward: good design puts knowledge into the world. Bad design makes the user keep it in their head.

Most organizations still do the latter.

What matters in many roles does not sit in a system. It sits in people. Terrain knowledge. Implicit constraints. The feel for when a process is still normal and when it is starting to tip. The stuff that was never properly documented, never made machine-readable, and often never even had a name.

When the person leaves, that knowledge leaves too.

In piece 5 I described why externalization so rarely happens cleanly. The incentive structure rewards hoarding knowledge more than sharing it. In piece 7 the focus was the vocabulary for it: rejection constraints, the quiet no-sentences, the implicit quality thresholds. That is exactly the knowledge people take with them when they leave.

And that is why the regret rate is so high. The person is gone. The non-externalized knowledge is gone too.

The Davenport data sharpens the point. 60% cut based on potential, not results. Many did not even verify whether the agent could actually take over the visible tasks. They let go of the people who held the context, based on a promise. And when the promise met reality, the context was gone.

Why the Regret Is Not Coincidental

Economics has known this pattern since 1865. William Stanley Jevons showed that more efficient steam engines did not lead to less coal consumption, but to more. Efficiency made coal cheaper, cheaper coal made new applications profitable, new applications increased total consumption. The paradox – efficiency increases total consumption – repeated with steel, computing, bandwidth, software. More on this in an upcoming issue, where I will apply the pattern to knowledge work in detail.

Here the short version is enough: When AI reduces execution cost, the need for human judgment does not decrease. It increases. Because cheaper execution makes new tasks profitable that nobody touched before. The report that was not worth the effort suddenly becomes feasible. The analysis that never had budget now costs a fraction. The customer support that only covered the top 20 clients can suddenly serve all 200. The personalization that was too expensive is suddenly viable for every segment.

More tasks, not fewer. And every new task needs someone to decide whether it should happen. Whether it has priority. Whether it fits the organizational context. Whether it creates more value than it generates complexity. That is judgment. That is context. That is the job, not the task.
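The mechanism can be put into a toy model. The sketch below assumes a constant-elasticity demand curve for tasks and one human go/no-go decision per task. The function names, the elasticity, and every number in it are illustrative assumptions, not figures from Jevons or from any of the studies cited here. It only shows the direction of the effect: cheaper execution means more tasks worth doing, and more tasks mean more decisions.

```python
def tasks_worth_doing(cost_per_task: float, base_cost: float = 100.0,
                      base_tasks: float = 50.0, elasticity: float = 1.5) -> float:
    """Assumed demand curve: tasks = base_tasks * (cost / base_cost) ** -elasticity."""
    return base_tasks * (cost_per_task / base_cost) ** (-elasticity)


def judgment_calls(cost_per_task: float, **demand_params: float) -> float:
    """Assumption: every task needs one human decision on whether it should happen."""
    return tasks_worth_doing(cost_per_task, **demand_params)


# Hypothetical scenario: AI cuts the execution cost per task to a quarter.
before = judgment_calls(100.0)
after = judgment_calls(25.0)
print(f"decisions needed before: {before:.0f}, after: {after:.0f}")
```

With these assumed values, a fourfold drop in execution cost produces eight times as many tasks that someone has to decide on. The exact multiplier depends entirely on the elasticity you assume, but for any positive elasticity the number of decisions goes up, not down.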

The companies that cut at the task level see the efficiency. They miss the new demand that the efficiency creates. The 55% regret is empirical evidence.

Shopify understood this. Headcount fell from 11,600 to 8,100, which sounds like classic cutting. Partly it was. But at the same time, Tobi Lütke hired 1,000 AI centaur interns. His internal memo was not a layoff letter. It was a redefinition of work. The question was not "who can we replace?" The question was: "Which tasks disappear, and which new jobs emerge that did not exist before?"

That is the task/job distinction in practice. Shopify automated tasks and simultaneously redefined jobs. Eliminated positions where there were only tasks. Created positions where context and judgment are needed – and more context and judgment than before, because execution became faster and cheaper. The 55% who regret their decision automated tasks and eliminated jobs. Without making the distinction. Without asking what lies beyond the visible task.

The Bitkom Paradox and the Works Council as Competitive Advantage

In Germany, things get interesting. Not because the problems are different. But because the regulatory framework creates a different dynamic.

The Bitkom numbers tell three stories at once. 27% of German companies expect job cuts due to AI. At the same time, the country is short of more than 100,000 IT professionals. 42% expect AI to create additional demand. All three statements simultaneously, all three from the same economy. That is not a contradiction – that is the Jevons pattern in German numbers. Jobs disappear on the task side, emerge on the judgment side. See only one side and you make the wrong decisions. See both and you recognize a restructuring, not a contraction.

But here is the part I never read in US analyses. And the part that makes the real difference for the DACH region.

In the US, companies can cut fast. Hire and fire. That is what the 55% did. Decision on Monday, people gone by Friday. In Germany, you have to explain to the Betriebsrat – the works council, a legally mandated employee representation body with co-determination rights – which tasks are being automated and which functions of the role remain. That forces explicitness. You have to write down what the person does. Not just the visible tasks that look good on a slide, but also the invisible functions you only notice when they are gone.

If you cannot tell the works council which tasks are being automated and which job functions remain, you have not made the distinction. And the works council will not approve. Not out of spite or reflexive blocking. At least not when they understand their role and are not just playing politics. But because the question is legitimate and the answer is missing.

It is uncomfortable. It is slower. It costs hours in negotiations that feel like bureaucracy. Where someone asks questions you would rather not answer, because the honest answer would be: "We did not look closely enough."

And it is exactly the analysis that gets skipped in the US. Skipping it is what leads to the 55% regret there.

Through Norman's lens: the works council process forces companies to at least name "Knowledge in the Head." To write down which knowledge would leave with a person. Which context functions the role has beyond the visible tasks. In the US, this step is skipped. The termination is faster. The regret comes faster. And the rehiring at a premium is more expensive than any works council negotiation would ever have been.

Those who see the works council as a brake do not understand that the brake can protect you from the cliff. Co-determination can force the task/job distinction that is otherwise conveniently skipped. It can, provided the works council does not block on principle, be insurance against regret. And the Orgvue data shows the price of skipping: more than half regret it, and a third pays more for rehiring than the savings were worth.

Honesty Check: Where I Could Be Wrong

Three points that argue against my own thesis. I take them seriously.

First: Not every cut is a mistake. 40-60% of current tasks are automatable. That is a conservative estimate, not fearmongering. Some roles were pure coordination overhead – they existed only because communication between two teams did not work or because a process was never properly set up. If a tool replaces that communication or makes the process obsolete, the role was genuinely redundant. Not every position has hidden context functions. Some positions were, honestly, just tasks in an employment contract. The distinction between tasks and jobs does not mean every job is more than its tasks. It means you have to look before you decide.

Second: Timing uncertainty. How fast agents move from the task level to the job level is unclear. Models are getting faster, more context-aware, more capable. What requires human judgment today could be agent routine in two years. If it happens faster than expected, some of the "premature" cuts were right after all. Anyone who says "agents cannot replace jobs" today must be willing to revise that statement in 18 months. I am willing. But as of today, we are not there.

Third: Survivorship bias in the data. The Orgvue figure that gets quoted is about those who regret, not about those who cut successfully and have no regrets. The 55% are real, but they are not the whole story. There are companies that automated tasks, eliminated positions, and got it right. They appear less often in studies, because "it worked" is not a headline and does not trigger a survey.

But – and this is where my argument holds despite all of this – the costs of error are asymmetric. Automating too late costs efficiency. Laying off too early costs context. Efficiency can be recovered. A tool rollout that starts six months late is annoying but repairable. Context cannot always be recovered. The laid-off employees may come back. Their knowledge does not come back fully. Someone who was out for three months missed three months of organizational context – decisions they were not part of, relationships that shifted, projects that went in a different direction. Someone who was out for six months has to relearn the terrain. And some terrain knowledge builds up over years, not months.

The asymmetric costs make the sequence clear: externalize, then automate, then – if necessary – cut. Not the other way around.

What This Means for You

I see the same sequence again and again in conversations about AI-driven staffing decisions. First comes the question: Who can we replace? The other question either never comes up or comes much later: What do we lose when this person leaves?

If you are facing an AI-driven staffing decision, I would put three questions before the cut. Not after.

Make the Task/Job Distinction Explicit

Before you cut a role: Which tasks are automatable? Which context functions leave with the person? What exists in no system? That is the step the 55% skipped. If you cannot answer those questions for a works council, you have not done the analysis. And if you do not have a works council, you still have no excuse to skip them.

Name the Invisible 70%

The most valuable function in many roles is not the visible task. It is the invisible context work: knowing when a rule does not apply. Seeing when a process is drifting in the wrong direction. Calling the right people before a problem becomes a crisis. Noticing that a customer is not angry about the mistake itself, but about the feeling that nobody is listening. That is where the difference sits between a completed task and a functioning role.

Externalize Before Layoff

Before someone leaves whose role you want to automate: Has their knowledge landed anywhere outside their head? Is it documented why Supplier B is often the better choice despite the higher price? Is it written down which exceptions exist, which quiet quality thresholds apply, which risks everyone knows internally but nobody has bothered to document?

It sounds like common sense. It is. It still gets skipped because a headcount cut shows up in the next quarterly deck faster than the slower work of moving knowledge from heads into systems.
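The three questions above can be written down as a small inventory per role. What follows is a minimal sketch, not a finished tool: the class names, field names, and the `ready_to_cut` rule are my own illustrative assumptions, and the buyer entries simply reuse the example from earlier in this piece. The idea is only that a cut stays off the table as long as any context function still lives in someone's head.

```python
from dataclasses import dataclass, field


@dataclass
class ContextFunction:
    """An invisible function of the role that appears in no task list."""
    description: str
    externalized: bool = False  # True once it is documented outside the person's head


@dataclass
class Role:
    title: str
    automatable_tasks: list[str] = field(default_factory=list)
    context_functions: list[ContextFunction] = field(default_factory=list)


def open_questions(role: Role) -> list[str]:
    """Context functions that would leave with the person today."""
    return [c.description for c in role.context_functions if not c.externalized]


def ready_to_cut(role: Role) -> bool:
    """Assumed rule: externalize first. An empty context list is not proof of a
    pure task role; it can also mean that nobody looked."""
    return bool(role.automatable_tasks) and not open_questions(role)


# Hypothetical inventory for the buyer from the example above.
buyer = Role(
    title="Buyer",
    automatable_tasks=["trigger orders", "compare prices", "evaluate quotes"],
    context_functions=[
        ContextFunction("Supplier B costs more but delivers rush orders fast"),
        ContextFunction("New quality manager is stricter on certain parts"),
    ],
)
print(ready_to_cut(buyer))  # False: two context functions are still undocumented
print(open_questions(buyer))
```

The code is deliberately dumb. The hard part is not the check, it is filling in `context_functions` honestly, which is the conversation a works council would force anyway.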

The Real Question

The Orgvue study, Davenport, the Bitkom numbers, and the customer service rehires all point to the same thing: the technology works. At the task level.

The real question is a different one. Did companies understand what they lose when they jump from an automatable task to a disposable role?

55% seem to have answered that question too quickly. Not because the technology is bad. But because they decided at the wrong level. They assessed the visible part and laid off the invisible with it.

The agent can do the task. The job is something else. If you do not make that distinction before the cut, you pay for it later. At a premium.

The thinking tools for this piece help you run the task/job distinction in your own organization. The Task/Job Scanner, the Knowledge-in-the-Head Audit, and the Externalization Checklist are three prompts you can apply to your situation.
