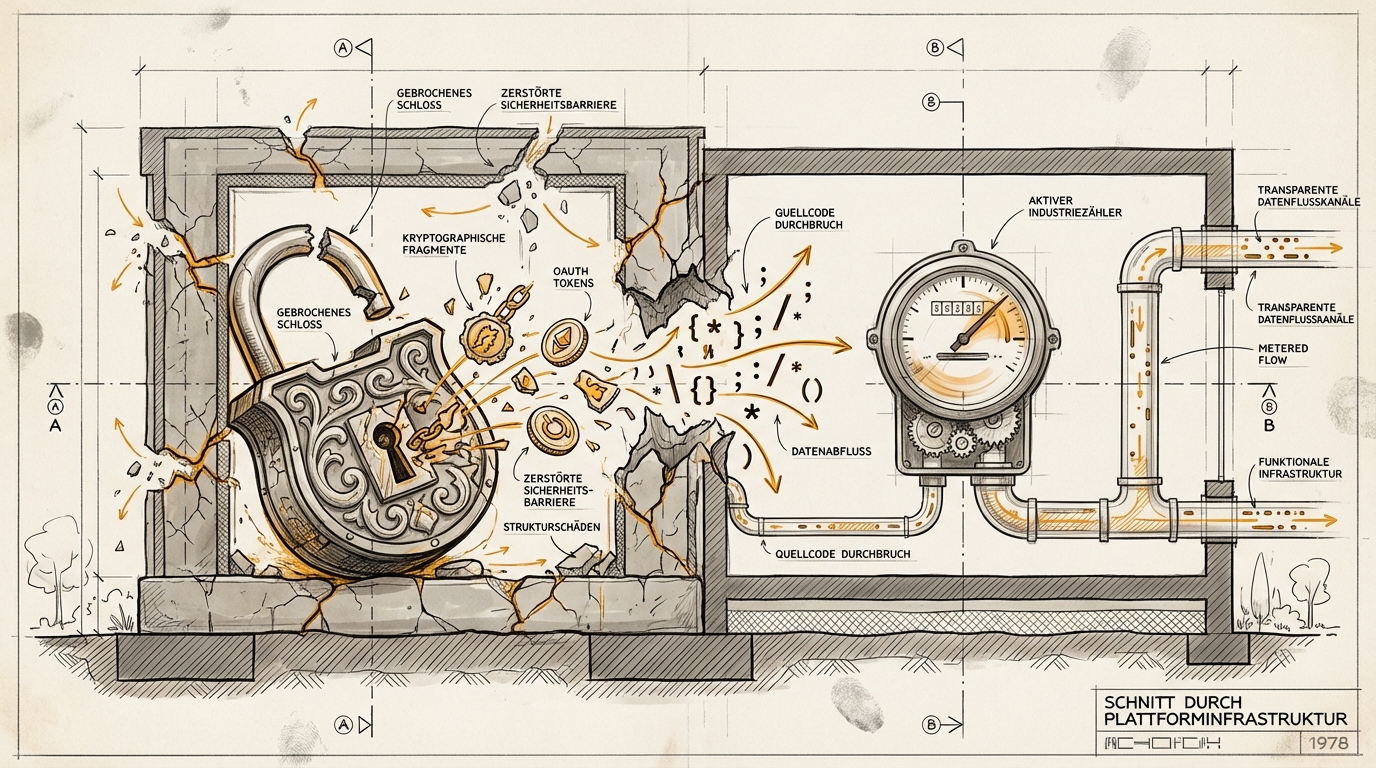

From Lock to Meter

paddo.dev documented the timeline that's quietly becoming a case study across the AI industry: how Anthropic burned through three enforcement strategies in four months before landing on the only one that works.

The short version: In January, Anthropic silently disabled OAuth tokens at 2 AM, cutting off third-party clients like OpenClaw from Claude subscriptions. No warning, no alternative, no explanation. Then came cease-and-desist letters, then a source code leak (512,000 lines of TypeScript, including the cryptographic attestation mechanism). Only now, in April, has it arrived at a transparent billing model with transition credits and discounts.

Anthropic's email to affected subscribers, shared on Hacker News today, reads like an exercise in tonal recalibration:

"Your subscription still covers all Claude products, including Claude Code and Claude Cowork. To keep using third-party harnesses with your Claude login, turn on extra usage for your account."

And then the sentence that reveals the actual strategy shift:

"Capacity is a resource we manage carefully and we need to prioritize our customers using our core products."

Translated: Use whatever client you want. Pay for what you consume.

Four months, four escalation levels. Each failure forced the next attempt.

What paddo describes isn't an Anthropic problem. It's an architecture problem. And it shows up everywhere organizations try to control behavior through detection and blocking rather than economic design.

The January shutdown was a classic Phase 1 reflex: same process (subscription), new tool (technical block). The flaw: when your enforcement mechanism depends on secrecy (cryptographic attestation embedded in client code), a single leak renders it worthless. And leaks happen. The npm build accident on March 31 didn't just expose code – it exposed Anthropic's entire enforcement architecture.
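To make the fragility concrete, here is a minimal sketch of a lock that depends on a secret shipped inside the client. It is not Anthropic's actual attestation scheme, whose details the article does not describe; the HMAC construction and the names `EMBEDDED_SECRET`, `attest`, and `gate_allows` are assumptions chosen for illustration.

```python
import hashlib
import hmac

# Hypothetical secret baked into the official client build.
# Illustrative only: not the real attestation mechanism.
EMBEDDED_SECRET = b"shipped-inside-the-client"


def attest(request_body: bytes, secret: bytes) -> str:
    """Sign a request the way the official client would."""
    return hmac.new(secret, request_body, hashlib.sha256).hexdigest()


def gate_allows(request_body: bytes, signature: str) -> bool:
    """Server-side lock: accept only requests with a valid signature."""
    expected = attest(request_body, EMBEDDED_SECRET)
    return hmac.compare_digest(expected, signature)


body = b'{"prompt": "hello"}'

# Before the leak, a third-party client can only guess at the secret.
signature_before_leak = attest(body, b"wrong-guess")
# After the leak, the same client signs with the real secret.
signature_after_leak = attest(body, EMBEDDED_SECRET)

print(gate_allows(body, signature_before_leak))  # False
print(gate_allows(body, signature_after_leak))   # True
```

The gate itself never changes between the two calls. The only thing that changes is who knows the secret, which is exactly why one leak is enough.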

What came next was the forced shift from "lock" to "meter." Stop asking: Who are you? Start asking: What are you consuming? The meter works because it's client-agnostic. OpenClaw, Claude Code, a custom-built wrapper: irrelevant. The resource is the resource, regardless of who calls it.
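A client-agnostic meter can be sketched in a few lines. This is an illustration under stated assumptions, not Anthropic's billing logic: the `Meter` class, the account names, and the rate `PRICE_PER_1K_TOKENS_CENTS` are all hypothetical, and the article gives no actual prices.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Hypothetical rate in cents per 1,000 tokens (assumption, not a real price).
PRICE_PER_1K_TOKENS_CENTS = 2


@dataclass
class Meter:
    """Tracks consumption per account; the client name never affects billing."""

    tokens_by_account: defaultdict = field(
        default_factory=lambda: defaultdict(int)
    )

    def record(self, account: str, client: str, tokens: int) -> None:
        # `client` is accepted (for example, for logging) but deliberately
        # ignored: the meter asks what was consumed, not who is calling.
        self.tokens_by_account[account] += tokens

    def cost_cents(self, account: str) -> int:
        # Integer arithmetic keeps the result exact.
        return self.tokens_by_account[account] * PRICE_PER_1K_TOKENS_CENTS // 1000


meter = Meter()
meter.record("alice", client="Claude Code", tokens=40_000)
meter.record("alice", client="OpenClaw", tokens=40_000)
meter.record("bob", client="custom-wrapper", tokens=80_000)

print(meter.cost_cents("alice"))  # 160
print(meter.cost_cents("bob"))    # 160
```

Both accounts consumed the same number of tokens through different clients and owe the same amount. There is nothing in this code to leak, because nothing in it depends on identifying the client.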

The pattern will be familiar to anyone who has ever managed a content strategy, API access, or internal tool policy:

Strategy 1: Policing. Detect, block, send legal threats. Works as long as the enforcement infrastructure stays opaque. Breaks at the first reverse-engineering attempt, the first angry developer, the first public thread. Doesn't scale, always escalates.

Strategy 2: Pricing. Make the economics transparent. Higher consumption, higher cost. No detection needed, no secrecy, no escalation. Works regardless of what the client does, because the control point isn't at the gate – it's at the meter.

paddo quotes one observer: "Anthropic crackdown on people abusing the subscription auth is the gentlest it could've been." True. But only because three harsher attempts failed first. The gentleness wasn't the first instinct. It was the last.

From the dekodiert perspective, this is a Terrain question: the environment (open protocols, developer communities, publicly leaked source code) determines which enforcement strategies are even possible. If your AI governance is built on detection and blocking, it's built on secrecy. And secrecy, in a world where code leaks through npm packages and developer communities build workarounds in hours, has a half-life measured in months.

The meter doesn't win because it's elegant. It wins because it's the only mechanism that works regardless of whether the other side has your source code.

Ask yourself (or ask your AI): Which of your internal policies are built on detection and blocking rather than transparent consumption metering? And what happens when someone understands the mechanism behind them?