Touched a thousand times. A thousand times, nothing happened...

Anthropic announced Project Glasswing today. Twelve corporations, a new model called Claude Mythos Preview that is not publicly available, a hundred million dollars in API credits. The PR take is: AI is changing cybersecurity. True. Not the interesting part, though.

The interesting part lives in two specific examples mentioned almost in passing in the announcement.

First: OpenBSD. For nearly three decades, this operating system has been the security-obsessed counter-example to the rest of the software world. Every commit is hand-reviewed. The code is deliberately minimalist. No unnecessary features, no abstractions that can't justify themselves. And right there, in this crown jewel of artisanal security culture, Mythos Preview found a flaw that had lived in the codebase for twenty-seven years. An attacker could have crashed any OpenBSD machine over a network connection. Nobody had seen it in twenty-seven years.

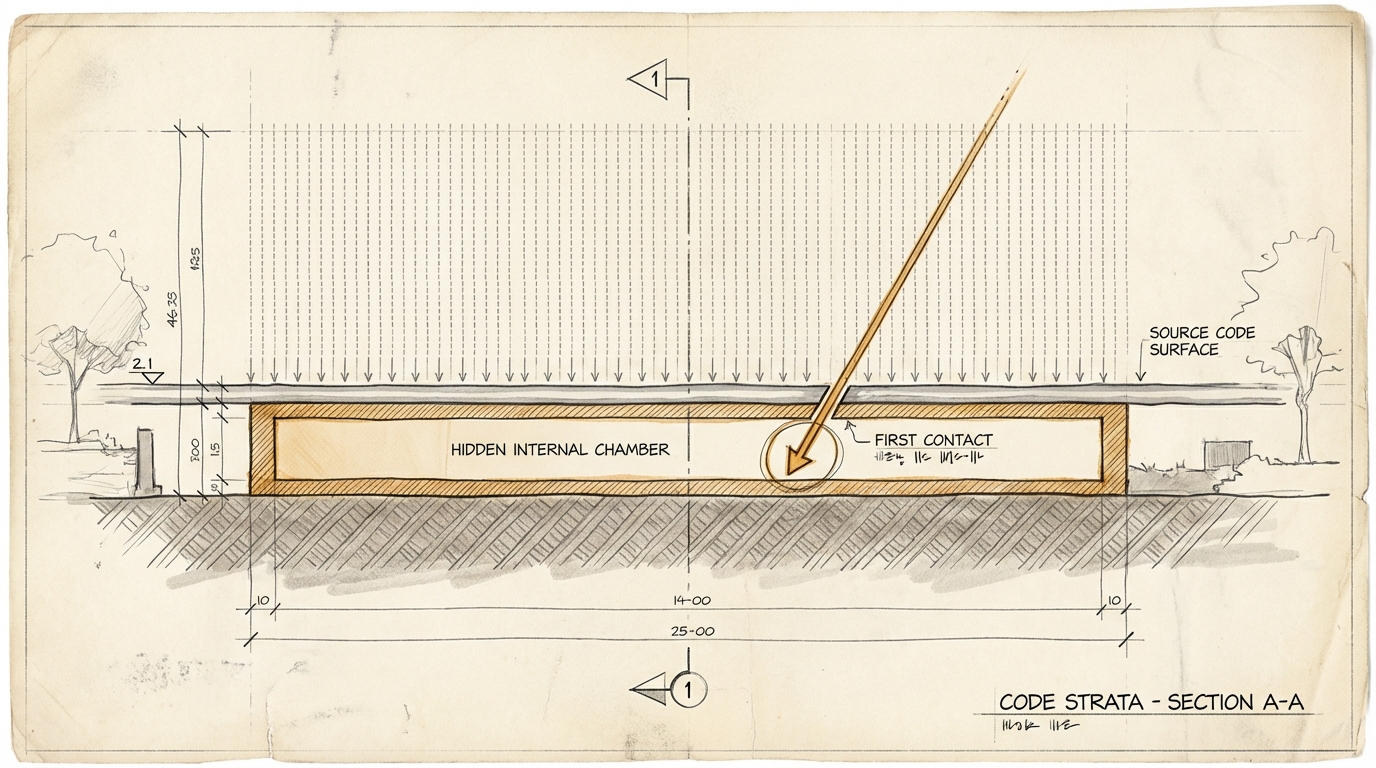

Second, and this is the harder number: FFmpeg. A library that encodes and decodes video inside countless products. A single line of code. Automated test tools had executed it five million times without finding the bug. Mythos Preview saw it on the first pass.

Touched five million times. Five million times, nothing happened.

The obvious takeaway is that AI is more attentive than humans and tools. That's boring. The interesting takeaway is subtler. The word "tested" has quietly changed meaning, and nobody noticed. What we've been calling "checked" was actually "looked at by tools that structurally couldn't find what they couldn't find". The seal of approval was real. The checking was not.
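To make "structurally couldn't find" concrete, here is a minimal toy sketch in Python. It is not the FFmpeg code and not how any particular test tool works. The names `lookup`, `run_random_tests` and `TABLE_SIZE` are made up for illustration, and the run count is kept small so it finishes quickly. The point is only that a test generator can execute a buggy line on every single run and still never produce the one input that breaks it.

```python
import random

TABLE_SIZE = 256                 # hypothetical fixed-size table
TABLE = list(range(TABLE_SIZE))  # valid indices are 0..255


def lookup(index):
    """Toy function with an off-by-one bounds check (illustrative only)."""
    # Bug: the check should be `index >= TABLE_SIZE`, so 256 slips through.
    if index > TABLE_SIZE:
        raise ValueError("index out of range")
    # This line runs on every accepted call, so coverage marks it as tested.
    return TABLE[index]


def run_random_tests(runs, seed=0):
    """Call lookup() with random inputs; return (executions, failures)."""
    rng = random.Random(seed)
    executed = 0
    failures = 0
    for _ in range(runs):
        # The generator only draws "realistic" indices 0..255.
        # It can never produce 256, the single input that triggers the bug.
        index = rng.randrange(TABLE_SIZE)
        try:
            lookup(index)
        except IndexError:
            failures += 1
        executed += 1
    return executed, failures


if __name__ == "__main__":
    executed, failures = run_random_tests(50_000)
    print(executed, "executions,", failures, "failures")
```

Every run touches the faulty line, and every run passes. Calling `lookup(256)` directly raises an `IndexError`. Raising the run count changes nothing, because the gap is in what the generator can produce, not in how often it runs.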

This doesn't only apply to code. Strategy papers that move through review loops where nobody asks the question that would take the paper apart. Compliance checklists ticked off because the checklist exists. Due diligence processes where the instrument is "we have a process". Any context in which "we checked" has become a ritual response rather than a verifiable statement.

Five million hits on the same line of code is an enormous number. It was still only ritual.

Ask yourself (or ask your AI): What in your stack, in your processes, in your decision memos counts as "checked", and how would you notice if the checking were just an illusion?