Are Managed Agents Becoming a New AI Infrastructure?

Cloudflare published an AI blog post this week that at first sounds like normal product marketing: more models, more providers, one endpoint, routing, failover, cost control.

The interesting part is not the launch. The interesting part is what becomes visible through it.

The more agentic AI systems become, the less it is enough to simply buy the best model. At that point, a request is no longer one model call. It becomes a small chain: classify, plan, call tools, fetch data, execute the next step. And that turns a model problem into an infrastructure problem surprisingly fast.

This is exactly where something may be emerging that, for now, could be called managed agents.

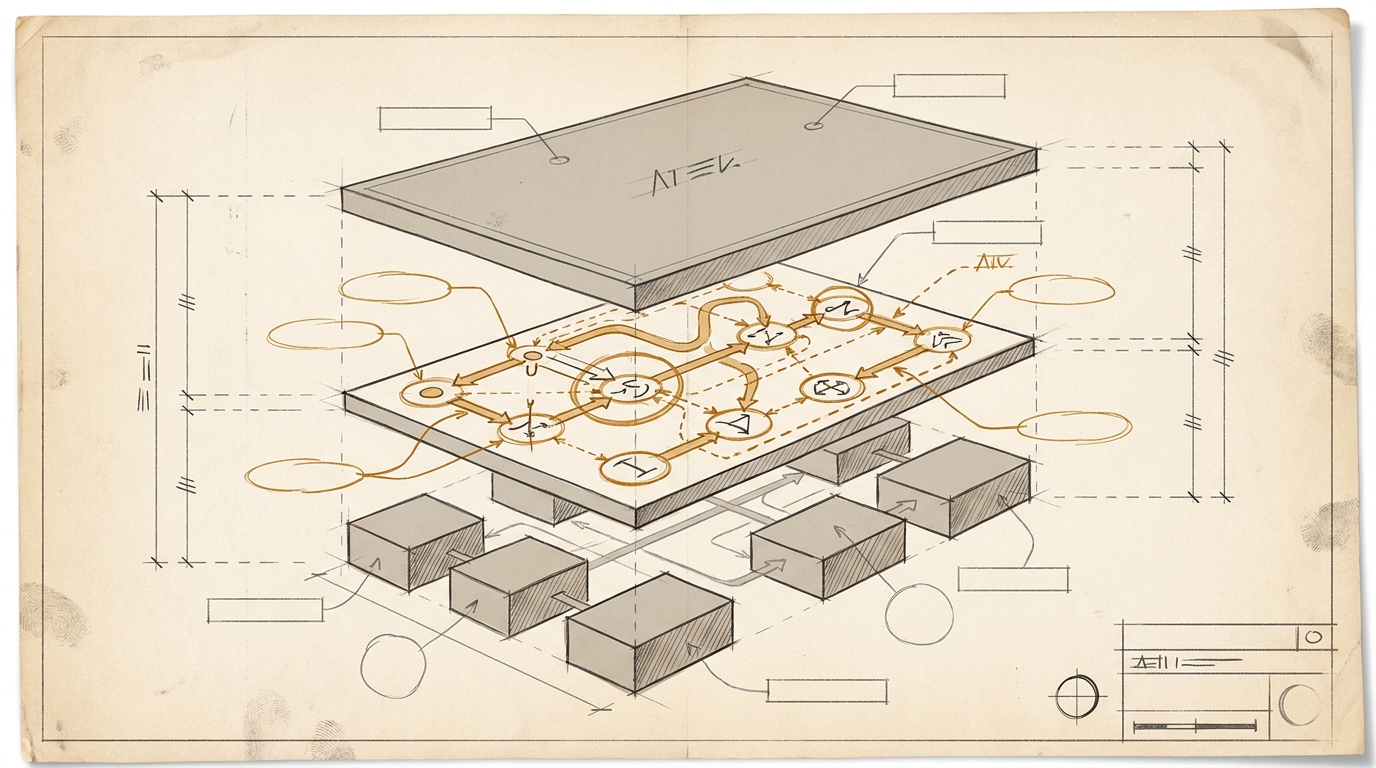

Not just the agent itself, but the layer around it: the layer that selects models, routes requests, catches failures, distributes cost, and promises portability.
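To make that layer a little more concrete, here is a minimal sketch of cost-ordered routing with failover. It is not how Cloudflare or any other vendor implements this; the `Provider` type, the `route` function, the provider names, and the prices are all hypothetical, and the "models" are plain Python functions standing in for real API calls.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Provider:
    name: str                    # hypothetical label, not a real model name
    cost_per_call: float         # hypothetical price, used only for ordering
    call: Callable[[str], str]   # stand-in for a real model API call


class AllProvidersFailed(Exception):
    """Raised when every provider in the list has errored."""


def route(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers from cheapest to most expensive; fall back on any error."""
    errors = []
    for provider in sorted(providers, key=lambda p: p.cost_per_call):
        try:
            return provider.name, provider.call(prompt)
        except Exception as exc:  # a real layer would be more selective here
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def flaky(prompt: str) -> str:
    # Simulates an outage at the cheapest provider.
    raise TimeoutError("simulated outage")


def stable(prompt: str) -> str:
    return f"echo: {prompt}"


providers = [
    Provider("cheap-model", 0.001, flaky),
    Provider("backup-model", 0.004, stable),
]
used, answer = route("classify this ticket", providers)
print(used, "->", answer)
```

The calling code never names a provider. Which model answered is decided inside `route`, and that is the dependency question the rest of this note is about.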

That will likely become a practical concern for customers sooner than it sounds. If you still build AI as a direct relationship to a single model, agentic workflows make you surprisingly fragile. An outage is no longer just a stalled chat. A price change is no longer just annoying. And latency suddenly adds up across several steps.
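A rough back-of-the-envelope calculation shows why chains are more fragile than single calls. The sketch below assumes steps run sequentially, fail independently, and each take about the same time; the 99% success rate and 0.8 seconds per step are illustrative numbers, not measurements.

```python
def chain_success_rate(per_step_success: float, steps: int) -> float:
    """Probability that every step in a sequential chain succeeds,
    assuming independent failures (a simplifying assumption)."""
    return per_step_success ** steps


def chain_latency(per_step_seconds: float, steps: int) -> float:
    """Total latency when steps run one after another."""
    return per_step_seconds * steps


steps = 5  # e.g. classify, plan, call tools, fetch data, execute
print(f"success rate: {chain_success_rate(0.99, steps):.3f}")
print(f"latency: {chain_latency(0.8, steps):.1f} s")
```

Under these assumptions, a provider that looks fine for a single call (99%) gives roughly 95% for a five-step workflow, and a sub-second call turns into a multi-second wait.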

That is why this fairly invisible layer could become strategic: not because anyone finds routing sexy, but because that is where it gets decided how dependent a company really is on a provider, a region, or a pricing model.

From a competitive angle, that may be the more interesting shift. As models become more interchangeable, the winner is not automatically the one with the best model. It can also be the one that orchestrates the same model market more reliably, more cheaply, and closer to the use case.

Looking beyond AI helps here. In earlier platform waves, providers often wanted to become content companies and mostly proved that they had misunderstood their role. This time, the interesting position again seems to sit in between. Not in owning the content, but in controlling the traffic. Only now the scarce resource is not bandwidth, but reliable inference.

Maybe this is ultimately closer to serverless than it looked to me on first read. In the cloud, compute remained the commodity. Serverless turned it into a managed, more convenient, more expensive layer. If the same thing happens here, inference would be the commodity underneath. And managed agents would be the layer above it that turns it into a usable product.

I am not yet sure that this is the right picture. That is exactly why this note remains a marginal note for now.

Ask yourself, or ask your AI: Where in your company does a business-critical AI workflow already depend on exactly one model, one provider, or one price point, even though everyone talks as if you were flexible?