IBM Called It FUD. Microsoft Calls It Shadow AI.

Microsoft is taking Agent 365 out of preview and into general availability. According to VentureBeat, the platform costs $15 per user per month and is meant to register, monitor, govern, and secure AI agents across the enterprise: inside the Microsoft ecosystem, in clouds such as AWS Bedrock and Google Cloud, on local Windows devices, and in SaaS environments.

At first, that sounds like a normal enterprise security product. Inventory, policies, telemetry, audit trails, access control. The kind of thing companies buy when experiments become infrastructure.

The interesting part is not the product. The interesting part is the framing: Shadow AI.

The cheap take would be: Microsoft is inventing a threat in order to sell a product. That is too simple. The threat is not invented. According to VentureBeat, David Weston describes three real patterns:

- Developers connect agents to backend systems and accidentally expose sensitive infrastructure.

- Attackers use cross-prompt injection through manipulated data sources.

- Agents access data sources that were never built for agentic access patterns.

That is plausible. Once you connect agents to tools, data, identities, and room to act, you are no longer building a better chat surface. You are building an execution layer inside the company. And execution layers need boundaries.
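To make "boundaries" concrete, here is a minimal sketch of what one could look like at the level of a single agent: an allowlist of tools plus a record of every decision. Everything in it is an assumption for illustration (the `AgentBoundary` class, the agent name, the tool names); it is not how Agent 365 or any Microsoft product works.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentBoundary:
    """Hypothetical per-agent boundary: which tools the agent may call."""

    agent_id: str
    allowed_tools: frozenset[str]
    # Each entry is (agent_id, tool, allowed); denied calls are logged too.
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def authorize(self, tool: str) -> bool:
        """Return True only if the tool is allowlisted; record every decision."""
        allowed = tool in self.allowed_tools
        self.audit_log.append((self.agent_id, tool, allowed))
        return allowed


# Illustrative agent and tool names, not taken from any real system.
boundary = AgentBoundary("expense-bot", frozenset({"read_invoices"}))

for requested_tool in ("read_invoices", "delete_database"):
    decision = "allowed" if boundary.authorize(requested_tool) else "denied"
    print(f"{requested_tool}: {decision}")
```

The point of the sketch is the shape, not the code: the agent can act, but only inside a declared scope, and every attempt leaves a trace that someone can review later.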

That is exactly why Microsoft’s framing is so effective.

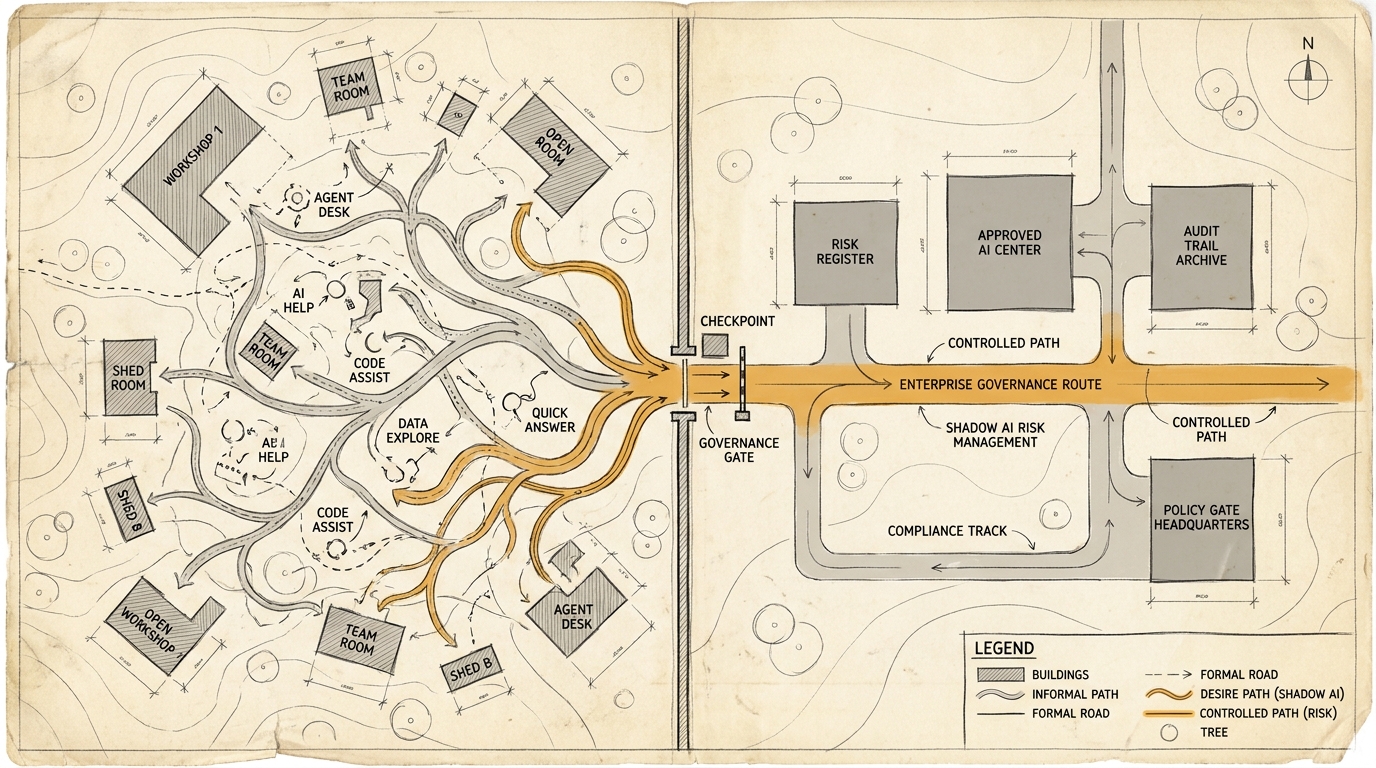

Shadow AI does not just describe a phenomenon. The term shifts ownership. Curious employees, local experiments, and informal automation become an enterprise risk. Productivity becomes attack surface. Decentralization becomes loss of control.

The budget logic is almost built in: if you have Shadow AI, you need visibility. If you need visibility, you need a registry. If you need a registry, you need policies. If you need policies, you need a central control surface. And if your organization already runs on Entra, Defender, Purview, Teams, SharePoint, and Copilot, the obvious answer arrives with the diagnosis.

This is reminiscent of the old FUD logic IBM became famous for: Fear, Uncertainty, and Doubt. Not because the risks are made up. That is the important difference. But because real uncertainty is structured in a way that makes one specific vendor answer look reasonable, professional, and almost unavoidable.

You can see this contrast everywhere right now: open agent ecosystems on one side, where people and teams quickly build their own workflows. Enterprise agents on the other, with telemetry, dashboards, agent IDs, role-based access, Zero Trust, and audit trails. The same underlying models can move in very different directions, depending on the structure people build around them.

So Microsoft is not simply selling better agents. Microsoft is selling the institutional permission to run agents at all without looking like a governance risk in the next security review.

That is psychologically stronger than any feature comparison. Hardly any director wants to say, “We’ll just let this grow organically.” Organic sounds good in a garden. In IT, it sounds like shadow inventory, data leakage, and a special audit later.

That is the real bet: companies are not only paying for control. They are paying for the feeling that they are not acting negligently.

That is not reprehensible. It is just not neutral.

Because whoever defines the risk category also defines the search space for solutions. If the problem is called “ungoverned agents”, the answer is almost inevitably a governance platform. If the problem is called “missing official paths for useful work”, the answer looks different: safe sandboxes, clear use areas, better specs, auditability, enablement, and an honest look at where employees are already more productive than the official strategy.

Shadow AI can be both: risk and signal.

The management question is therefore not: should we buy Agent 365 or not?

The better question is: Who gets to define whether informal AI use in your company is primarily a security failure, a productivity signal, or an organizational diagnosis?

That interpretation will matter more than the price per user.

Ask yourself or your AI: Where does your company already use the term “Shadow AI” as a risk label, even though it may also point to missing official paths, tools, or decision space?

Sources

- VentureBeat: "Microsoft takes Agent 365 out of preview as Shadow AI becomes an enterprise threat", cited via an excerpt dated 2026-05-05