Merchants of Complexity

Mario Zechner published a rant this week that's making the rounds in the developer community. Zechner is no bystander: he built the framework behind OpenClaw, one of the most widely used open-source projects in gaming. He knows large codebases.

His thesis: agents don't make us more productive. They make us faster. That's not the same thing.

A human, Zechner says, is a bottleneck. A human can't produce 20,000 lines of code in an afternoon. Even if that human constantly makes mistakes, there's a natural ceiling on how much damage is possible per day. The bottleneck doesn't just limit output. It limits chaos.

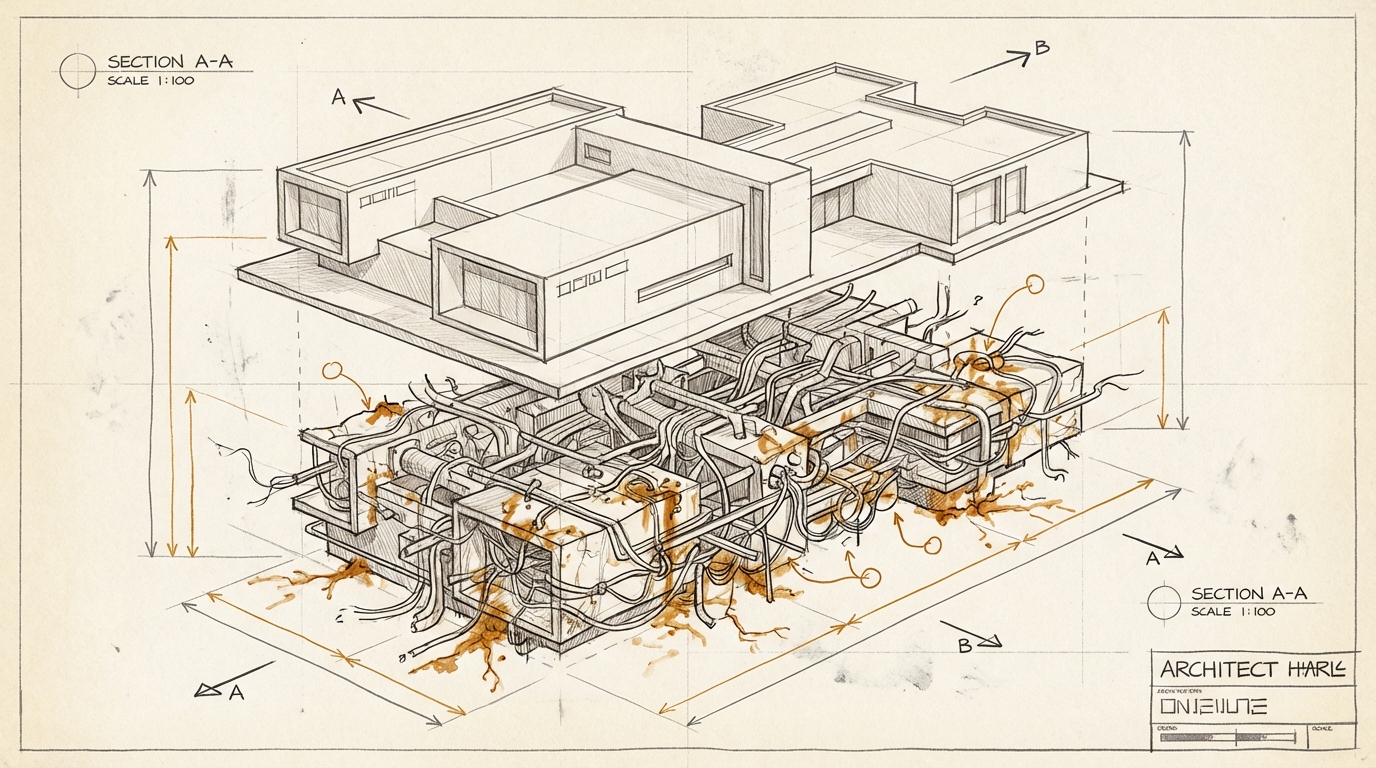

With agents, that brake is gone. The small, harmless errors (Zechner calls them "booboos") pile up faster than a human can detect them. And because you've removed yourself from the process, you don't notice until the codebase has already become unmanageable.

His phrase for it: "You let them run free, and they are merchants of complexity."

Simon Willison, one of the most prominent voices in the AI engineering scene, agrees, with one caveat: he doesn't believe the answer is to go back to writing everything by hand. What he argues for instead is a new balance between speed and intellectual thoroughness. Typing was never the bottleneck in programming. Now that this is obvious, we need to figure out what the actual bottleneck was.

I find this remarkable because it goes beyond software. Everywhere that AI accelerates production, the same question arises: was the bottleneck the problem, or was it the protection?

Strategy papers that take three hours instead of three weeks. Campaigns that go live in a sprint instead of a quarter. Decision briefs written faster than anyone can read them. Speed goes up. But the time to think stays the same. Or it shrinks, because the next output is already finished before the last one has been digested.

Zechner recommends: set limits on how much code you let agents generate per day. Write everything that defines the architecture by hand. Give yourself time to think.
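If you want to make the first of those recommendations concrete, a daily cap can be as simple as a counter. What follows is only a rough sketch, not anything Zechner published: the class name `DailyLineBudget`, the method names, and the 500-line cap are all hypothetical, and how you actually count "agent-generated lines" is left to you.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DailyLineBudget:
    """Hypothetical helper: caps agent-generated lines accepted per calendar day."""

    max_lines_per_day: int
    # Maps a calendar day to the number of lines already accepted on that day.
    _used: dict = field(default_factory=dict)

    def remaining(self, day: date) -> int:
        """Lines that can still be accepted on the given day."""
        return self.max_lines_per_day - self._used.get(day, 0)

    def try_accept(self, added_lines: int, day: date) -> bool:
        """Record the change and return True if it fits the day's budget.

        Returns False and records nothing when the change would exceed it.
        """
        if added_lines < 0:
            raise ValueError("added_lines must be non-negative")
        if added_lines > self.remaining(day):
            return False
        self._used[day] = self._used.get(day, 0) + added_lines
        return True


# Example with an assumed cap of 500 lines per day.
budget = DailyLineBudget(max_lines_per_day=500)
monday = date(2025, 1, 6)
first = budget.try_accept(300, monday)   # fits within the cap
second = budget.try_accept(300, monday)  # would exceed the cap, so it is rejected
```

The number itself matters less than the refusal. A rejected change is a forced pause, and the pause is the time to think.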

It sounds obvious. It's still the opposite of what's happening right now.

Ask yourself (or ask your AI): Where in your organization has the slowness of production been an invisible quality filter – and what happens when that filter disappears?