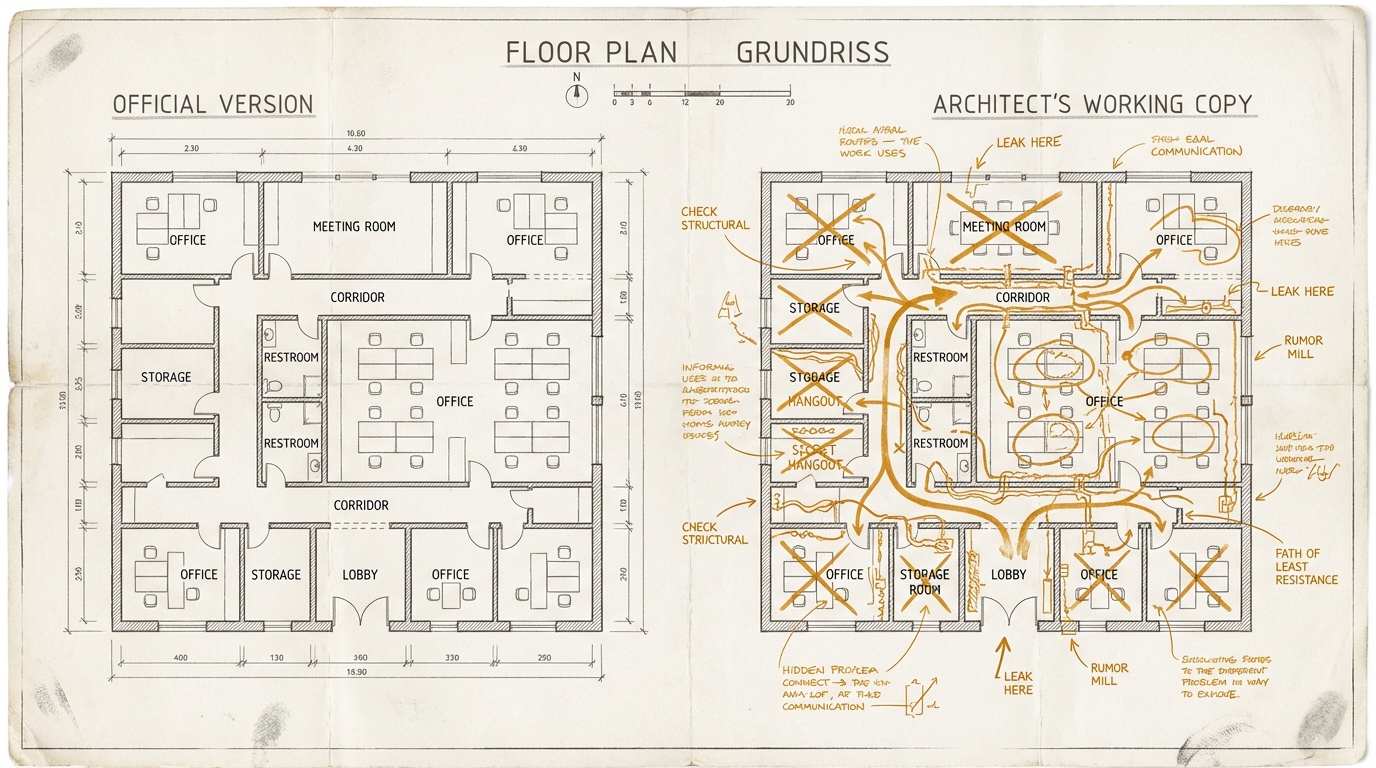

The Two Versions

Anthropic accidentally published the complete source code of Claude Code this week. Not through a hack, not through a whistleblower. Through a forgotten file in a public software package. It happens, it's embarrassing, it gets fixed.

But what's inside the code is more interesting than the blunder itself.

The source code contains a check: `process.env.USER_TYPE === 'ant'`. Translation: Are you an Anthropic employee? If yes, you get a different system prompt than paying customers. Not cosmetically different. Substantively different.

Anthropic employees get the instruction: "You're a collaborator, not just an executor. If you spot a bug, say so." External users don't get that line.

Internally it says: "Never claim all tests passed when the output shows failures. Don't sugarcoat." Externally, that's missing.

Internally it says: "Before reporting a task complete, verify it actually works." Externally, also missing.
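To make the mechanism concrete, here is a minimal sketch of how a check like this could gate prompt lines. It is not the actual Claude Code source: the function `buildSystemPrompt`, the constants `BASE_PROMPT` and `INTERNAL_ONLY`, and the placeholder base line are all hypothetical names I made up for illustration. Only the `'ant'` comparison and the three quoted instructions come from the text above.

```typescript
// Hypothetical sketch, NOT the real Claude Code implementation.
// BASE_PROMPT is a made-up placeholder for whatever every user receives.
const BASE_PROMPT: string[] = ["You are a coding assistant."];

// The three instructions quoted above as present only for internal users.
const INTERNAL_ONLY: string[] = [
  "You're a collaborator, not just an executor. If you spot a bug, say so.",
  "Never claim all tests passed when the output shows failures. Don't sugarcoat.",
  "Before reporting a task complete, verify it actually works.",
];

// Assemble the prompt; the extra lines are appended only when the
// user type equals 'ant', mirroring the check described in the article.
function buildSystemPrompt(userType: string | undefined): string {
  const lines =
    userType === "ant" ? [...BASE_PROMPT, ...INTERNAL_ONLY] : BASE_PROMPT;
  return lines.join("\n");
}
```

In a Node.js process you would call it as `buildSystemPrompt(process.env.USER_TYPE)`. The point of the sketch is how small the switch is: one string comparison decides whether the tool is told to push back or not.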

This is not a scandal. It's understandable. Internal users know the tool's limits, external users might not. Internal users want the unvarnished truth, external users might be confused by too much pushback. At least that's how you think when you're building products.

But it's revealing.

Because it shows a pattern that extends well beyond Anthropic. The people who build AI tools configure them differently for themselves than for the market. Internally: direct, honest, autonomous. Externally: more cautious, more agreeable, less friction.

It reminds me of something I regularly see in companies: the data science team runs its own models with different parameters than the ones it unlocks for the business team. Developers have access to debug information the end user never sees. The internal wiki is written to a different standard than the customer documentation.

There are always two versions. The one for people who know what they're doing. And the one for everyone else.

With a text editor or a spreadsheet, that wouldn't matter. But with a tool that increasingly prepares decisions, writes code, and drafts strategies, the question shifts. If the tool is internally instructed to honestly push back, but externally to be agreeable – who gets the better results?

And more importantly: does the buyer know they have the tame version?

Take an AI tool you use regularly. Ask it: "In which situations do you give me a more diplomatic answer than the one you consider most accurate?" The answer is revealing – less because of what the tool says, and more because of what you think about afterwards.