The App with the Factory Badge

In “The Boundary of Good Intentions” I wrote about providers quietly taking more rights than users consciously granted them. paddo is now describing the organizational version of the same problem. And for companies, that version may prove more expensive than the next model failure.

The case is not interesting because a model hallucinated somewhere. It is interesting because, according to paddo, the path into a company system did not run through a bad output. It ran through an AI tool that already had broad permissions. The output was not the problem. The identity was.

That is exactly where many AI debates go wrong. At director level, people talk about data protection, model quality, bias, and hallucinations. All of that is legitimate. But the operational damage often sits one layer deeper: in the OAuth grant, in the overly broad scope, in the uncontrolled MCP server, in the small tool startup that suddenly has access to mail, drive, GitHub, tickets, and deployments.

Put more bluntly: many companies buy AI tools as if they were just better browser extensions. In practice, they end up treating an external company like a new employee with a master key. A tool that arrives as shadow IT and is given that level of permission can open most of the doors in the company.

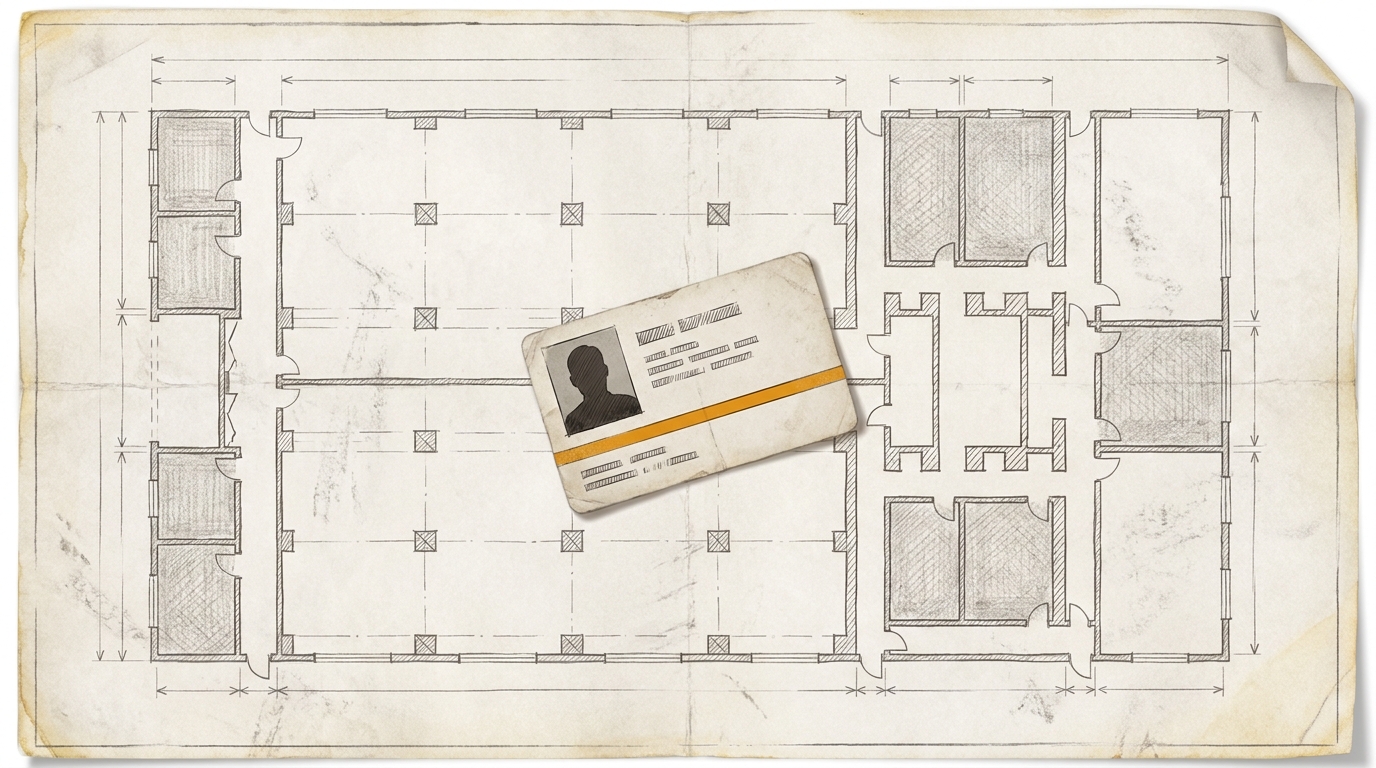

That is the German angle on the Vercel security incident. In a traditional factory, nobody would let an external service provider roam through every hall simply because it promised higher productivity. It would get a badge, defined zones, ideally an escort, and a clear path for revoking access. I remember that logic very clearly from Volkswagen: handing over my phone, taping over my laptop camera, and not being allowed to walk the site without an escort.

That same logic is often missing with AI tools. The app gets the factory badge before anyone has properly clarified where it should be allowed to go.

That is why this is not just a cybersecurity issue. It is an organizational issue. Who in the company is actually checking which external identities are being let in? Who looks at permissions, duration, revocation, accountability, and logging? Who decides whether a tool is really just a tool or whether it has quietly become part of critical infrastructure?

In the German corporate context, the honest answer is often: several functions, but rarely together. IT security looks at tokens and scopes. Data protection looks at data flows. Procurement looks at contracts. The works council gets involved as soon as tools, control, or process changes affect work. Internal audit asks later why nobody thought it through as one system. That is the real point. The problem often arises not because nobody is responsible, but because each function sees only its own slice.

That is why paddo’s reframe fits so well into the dekodiert line. AI tools are not just software. They are also new organizational actors. They do not have employee numbers, but they do have rights. They are not on payroll, but they do act inside the company’s sphere of action. And once you look at them that way, the management question changes as well. Feature lists are no longer the first concern. Access logic is.

The relevant distinction is not: Is the tool intelligent? The relevant distinction is: Which identity does it get? Which systems may it see? Which may it change? Who pulls the badge back when the vendor is compromised, acquired, or simply disappears?
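For readers who want to make those four questions concrete, here is a minimal sketch of what a "badge review" for a single tool could look like. Everything in it is an assumption for illustration: the `ToolGrant` record, the `badge_findings` function, the field names, and the threshold of two systems are hypothetical and do not correspond to any real product or to paddo’s write-up. The example tool and vendor are invented.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ToolGrant:
    """Hypothetical inventory record for one AI tool's access."""
    tool: str
    vendor: str
    read_systems: set[str]       # which systems it may see
    write_systems: set[str]      # which systems it may change
    owner: Optional[str]         # who inside the company is accountable
    expires: Optional[date]      # None means the grant never expires
    revocation_tested: bool      # has anyone ever practiced pulling the badge?


def badge_findings(grant: ToolGrant, max_systems: int = 2) -> list[str]:
    """Return review findings; the max_systems limit is an arbitrary example."""
    findings = []
    reach = grant.read_systems | grant.write_systems
    if len(reach) > max_systems:
        findings.append(f"reach: touches {len(reach)} systems (limit {max_systems})")
    if grant.write_systems:
        findings.append("write: can change " + ", ".join(sorted(grant.write_systems)))
    if grant.owner is None:
        findings.append("owner: nobody is accountable for this grant")
    if grant.expires is None:
        findings.append("expiry: grant never expires")
    if not grant.revocation_tested:
        findings.append("revocation: pulling the badge has never been tested")
    return findings


# Invented example: a small note-taking tool with a master key.
grant = ToolGrant(
    tool="meeting-notes-ai",
    vendor="ExampleVendor",
    read_systems={"mail", "drive", "github"},
    write_systems={"github"},
    owner=None,
    expires=None,
    revocation_tested=False,
)

for finding in badge_findings(grant):
    print(finding)
```

The point of the sketch is not the code. It is that the review needs one record per tool that security, data protection, procurement, and audit can all read, instead of four partial views.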

That sounds cautious. It is. But it is not German defensiveness. It is architecture. If you keep treating AI tools like harmless productivity software, you are not building a modern operating model. You are handing out factory badges without looking at the site plan.

Maybe that is the most useful question for the next few months. Not: Which model is better? But: Which of your AI tools already have more rights than you would ever grant to an external service provider?

Ask yourself, or ask your AI: Which of your AI tools already have more rights than an external service provider would ever be given in your company?