Design Gets a Text Field

Google rebuilt Stitch into a full design tool this week. Infinite canvas, design agent, multi-screen generation, code export to six frameworks. Figma stock down four percent the same day.

The remarkable part is not the tool. It's the interface: text in, UI out. No drag-and-drop, no inspector panel as the primary interaction. A text field.

Stitch is not alone. Impeccable, a vocabulary layer by Paul Bakaus, argues from the other direction: developers don't fail because of model capabilities; they fail because of the language they design with. "Make it look good" delivers 16px Inter on white. "OKLCH tinted neutrals with fluid type scale" delivers a system. Same model, same prompt slot, radically different output.

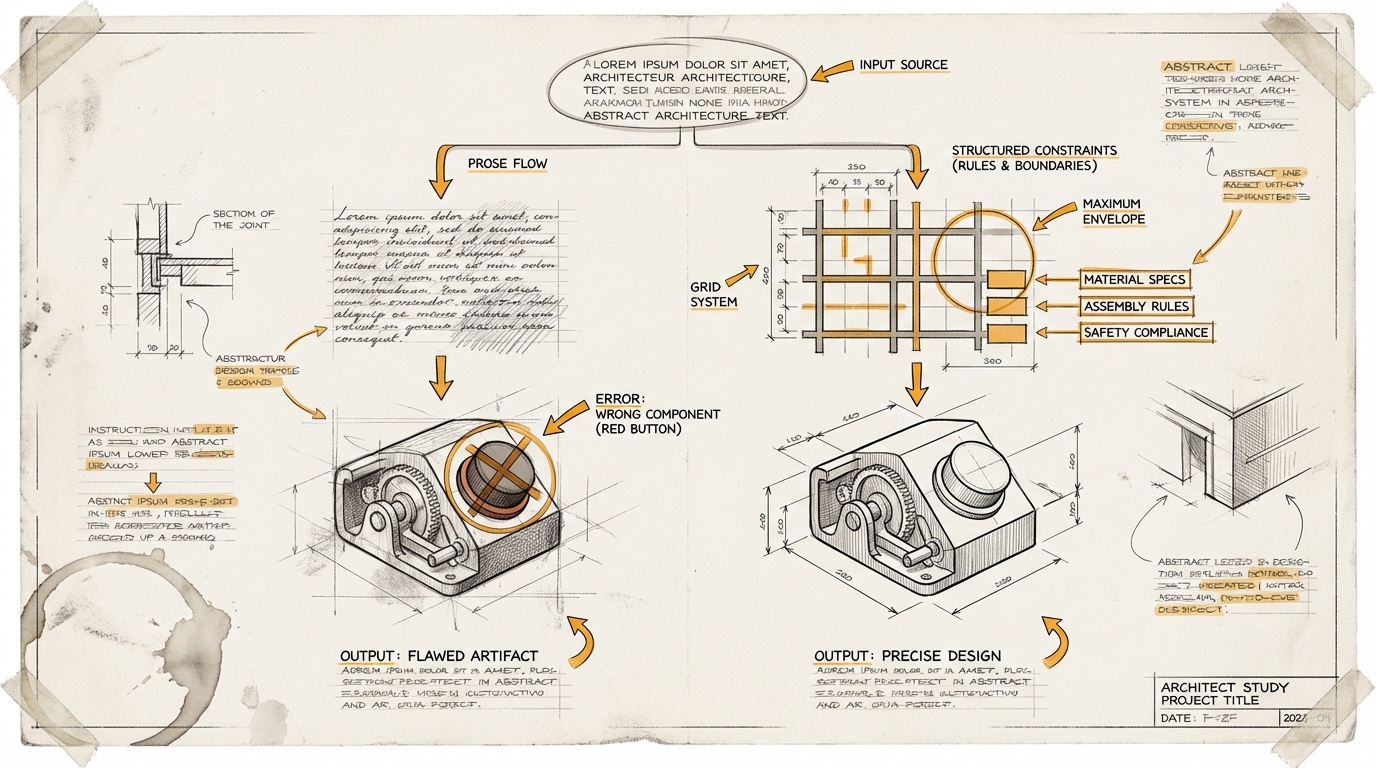
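
To make the contrast concrete, here is one hypothetical reading of that second phrase, sketched in Python: a neutral ramp that shares one faint OKLCH hue, plus a modular type scale that grows with the viewport through CSS `clamp()`. The hue, chroma, scale ratio, viewport range, and 16px root size are all assumptions, and this is not Impeccable's actual output.

```python
# Hypothetical expansion of "OKLCH tinted neutrals with fluid type scale"
# into CSS custom properties. All parameter values are illustrative assumptions.

def tinted_neutrals(hue: float, chroma: float = 0.01, steps: int = 5) -> dict[str, str]:
    """Grays that share one faint hue: fixed hue and chroma, evenly spaced lightness."""
    top, bottom = 0.97, 0.20  # lightness of the lightest and darkest neutral
    ramp = {}
    for i in range(steps):
        lightness = top - i * (top - bottom) / (steps - 1)
        ramp[f"--neutral-{i}"] = f"oklch({lightness:.2f} {chroma:g} {hue:g})"
    return ramp


def fluid_type_scale(
    base_min_px: float, base_max_px: float, ratio: float, steps: int,
    vw_min: float = 320, vw_max: float = 1280, root_px: float = 16,
) -> dict[str, str]:
    """Modular type scale whose sizes grow linearly with viewport width, via CSS clamp()."""
    scale = {}
    for step in range(steps):
        lo = base_min_px * ratio**step  # size at vw_min and below
        hi = base_max_px * ratio**step  # size at vw_max and above
        slope = (hi - lo) / (vw_max - vw_min)  # px of font size per px of viewport
        intercept = lo - slope * vw_min        # the same line extended back to a 0px viewport
        scale[f"--step-{step}"] = (
            f"clamp({lo / root_px:.3f}rem, "
            f"{intercept / root_px:.3f}rem + {slope * 100:.3f}vw, "  # 1vw = 1% of viewport width
            f"{hi / root_px:.3f}rem)"
        )
    return scale


tokens = {**tinted_neutrals(hue=250), **fluid_type_scale(16, 18, 1.25, steps=4)}
for name, value in tokens.items():
    print(f"{name}: {value};")
```

The point is not these particular numbers but that every value is derived from a few named decisions (one hue, one ratio, one viewport range), which is what makes the output a system rather than a one-off.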

Both approaches arrive at the same point: design is converging on text. The question is how much structure that text needs.

Google's answer is a DESIGN.md – a markdown file the agent carries along to remember previous decisions. Prose meant to create consistency.

That works as long as the agent interprets the file the way its author intended. The moment it doesn't – and anyone who has pointed an agent at an existing design system knows that moment – the layer between intent and rule is missing. The agent knows blue is the primary color. It doesn't know red is only allowed for errors. So it builds a red button, because red is also defined and "looks good."

Prose describes intent. But agents need constraints.
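
Here is a minimal sketch of that difference, assuming a design system that stores each color's permitted roles as data next to its value. Token names, hex values, and role names are hypothetical; this is not the DESIGN.md format or any Stitch API. With the rule encoded, the red button becomes a check that fails rather than a judgment call.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ColorToken:
    value: str
    # Roles this color may appear in: the rule lives next to the data.
    allowed_roles: frozenset[str]


# Hypothetical tokens: blue is primary, red is reserved for errors.
TOKENS = {
    "primary": ColorToken("#1d4ed8", frozenset({"button", "link", "focus-ring"})),
    "danger": ColorToken("#dc2626", frozenset({"error-text", "error-border"})),
}


def check_usage(token_name: str, role: str) -> Optional[str]:
    """Return a violation message, or None if this token may be used in this role."""
    token = TOKENS.get(token_name)
    if token is None:
        return f"unknown token '{token_name}'"
    if role not in token.allowed_roles:
        allowed = ", ".join(sorted(token.allowed_roles))
        return f"'{token_name}' is not allowed on '{role}' (allowed: {allowed})"
    return None


# The red button from the prose-only scenario is now rejected mechanically.
print(check_usage("primary", "button"))  # None
print(check_usage("danger", "button"))   # 'danger' is not allowed on 'button' (allowed: error-border, error-text)
```

An agent can still choose red because it "looks good," but the choice no longer survives a check.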

The tools are getting better. But the question of how to tell an agent not just what to build but what to leave alone is only just being asked.

Ask yourself (or ask your AI): If an AI rebuilt a page of your product using only your design system as context, where would the result diverge? Those are the spots where your spec has data but no rules.
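
The comparison can be made mechanical. A rough sketch, assuming you can extract (token, role) pairs from the rebuilt page, for example by walking the generated markup; the spec format, token names, and the extraction step itself are hypothetical. Usages that land in the `no_rule` bucket are the data-without-rules spots.

```python
# Hypothetical spec: every token carries data (a value), but only some carry a usage rule.
SPEC = {
    "blue-600": {"value": "#2563eb", "allowed_roles": {"button", "link"}},
    "red-600": {"value": "#dc2626", "allowed_roles": {"error-text"}},
    "gray-100": {"value": "#f3f4f6"},  # data, no rule
}


def audit(usages: list[tuple[str, str]]) -> dict[str, list[tuple[str, str]]]:
    """Sort (token, role) pairs from a generated page into buckets against SPEC."""
    findings = {"ok": [], "violates_rule": [], "no_rule": [], "not_in_spec": []}
    for token, role in usages:
        entry = SPEC.get(token)
        if entry is None:
            findings["not_in_spec"].append((token, role))  # a value the agent invented
        elif "allowed_roles" not in entry:
            findings["no_rule"].append((token, role))      # data but no rule
        elif role in entry["allowed_roles"]:
            findings["ok"].append((token, role))
        else:
            findings["violates_rule"].append((token, role))
    return findings


# Usages as they might be extracted from an AI rebuild of one page (hypothetical).
rebuild = [
    ("blue-600", "button"),
    ("red-600", "button"),
    ("gray-100", "card-background"),
    ("#7c3aed", "badge"),
]
for bucket, items in audit(rebuild).items():
    print(bucket, items)
```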