The Market Will Sort It Out

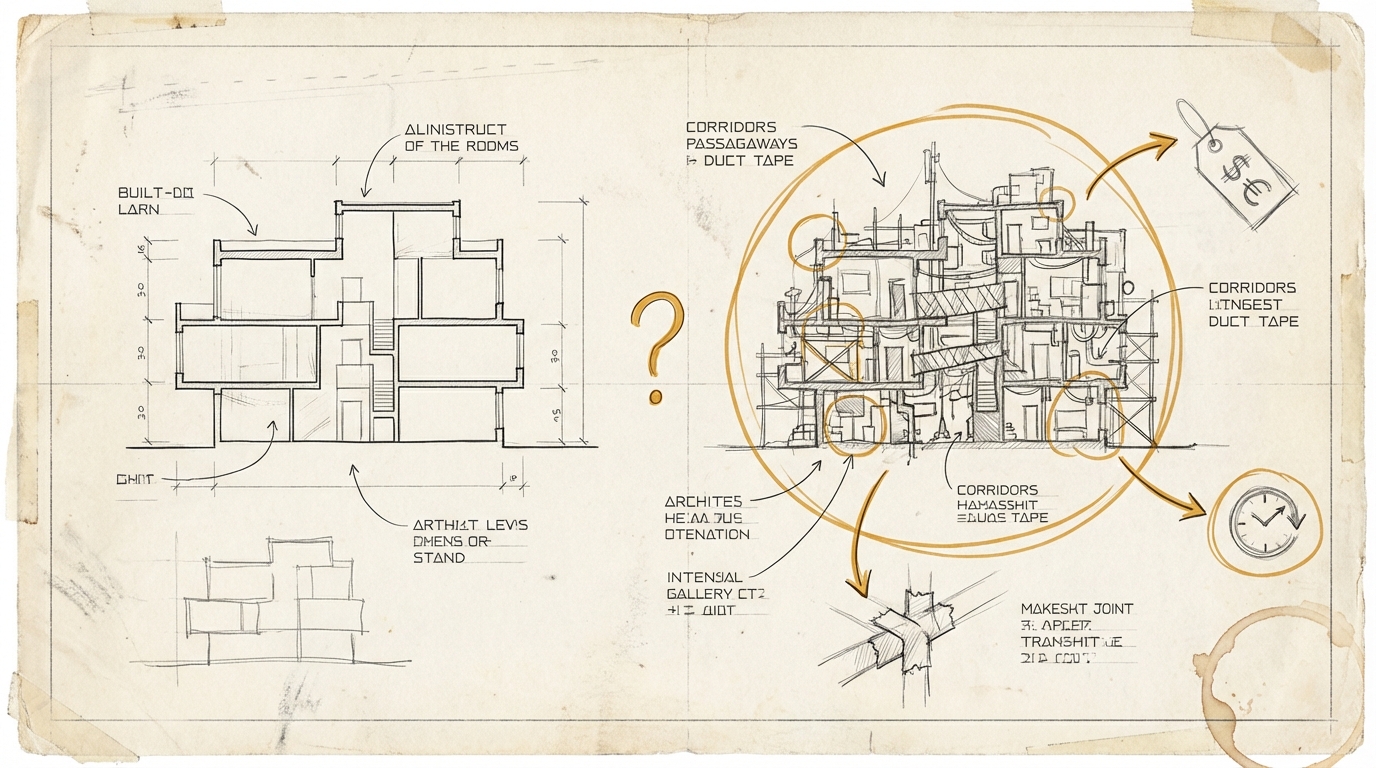

Soohoon Choi from Greptile published a thesis this week that's making the rounds: AI will write good code. Not because we want it to, but because it's cheaper. Good code requires fewer tokens to understand, fewer tokens to modify, less compute for maintenance. The market rewards simplicity. Slop doesn't scale.

Simon Willison shared the core idea. The logic sounds compelling. And in my opinion, it's still wrong – perhaps precisely because it sounds so compelling.

The conclusion isn't necessarily wrong. AI-generated code may get better. But the reasoning – "economic incentives force quality" – has roughly 50 years of counter-evidence.

WordPress powers roughly 43% of all websites. Architecturally, it's an accident that grew into an ecosystem. The market didn't just tolerate it – it rewarded it. Because the market doesn't evaluate code quality. It evaluates delivery speed and feature completeness.

Or take the consulting industry. Anyone who has ever inherited an analytics implementation from a large service provider knows the moment: you open the codebase and wonder whether this was a prototype or the final delivery. The answer: it was the final delivery. Signed off, paid for, handed over. The cost of poor maintainability is either absorbed by the ongoing retainer or, two to five years later, by the next service provider, who rebuilds everything from scratch.

The market didn't punish this model. It made it the standard. Because "good enough to sign off" is economically more efficient than "good enough to maintain." The client often can't evaluate the quality, the provider has no incentive to think beyond the scope, and the downstream costs show up on a different budget line. I've received NFRs (non-functional requirements, the kind that ensure quality standards rather than visible features) as a formal requirement exactly once in my career. In over 20 years.

Choi's argument has a blind spot: he conflates the model's token costs with the market's decision criteria. That good code consumes fewer tokens is a technical argument. But purchase decisions for software and services aren't made on token efficiency. They're made on price, speed, and visibility.

If structural incentives like token economics forced quality, earlier ones would have done the same. Microservices architecture would have led to simpler systems. It didn't. Agile would have led to better software. It hasn't, at least not reliably. TDD would have achieved universal adoption. It hasn't.

Every decade has its version of "this time, the structural incentive will force quality." And every time, speed-to-market wins.

What might change: if AI agents have to maintain their own code, the equation shifts. Then token costs are no longer an external metric but an internal operating cost. But that requires the same organization to both write and maintain the code – and in the service industry, that is exactly what doesn't happen.

Ask yourself (or ask your AI): In which decisions at your organization does someone claim that "the market" or "the incentives" will automatically lead to better outcomes – and who benefits from that belief?