The Fault Is One Layer Up

Over the last few weeks, Claude Code suddenly seemed worse to a lot of people. More forgetful. Less reliable. More expensive. Just flatter somehow.

I know that feeling pretty well. You work with a tool for a few days, learn its quirks, build trust. Not blind trust. More the pragmatic kind. The kind you can work with. And then something shifts. The output feels different. Sessions feel thinner. Things that worked cleanly yesterday now behave oddly. You start doubting the model.

That is exactly why Anthropic’s postmortem is so interesting. Not because something broke again. That happens. But because the fault apparently was not where many people assumed it was. Not in the model. In the machinery around it.

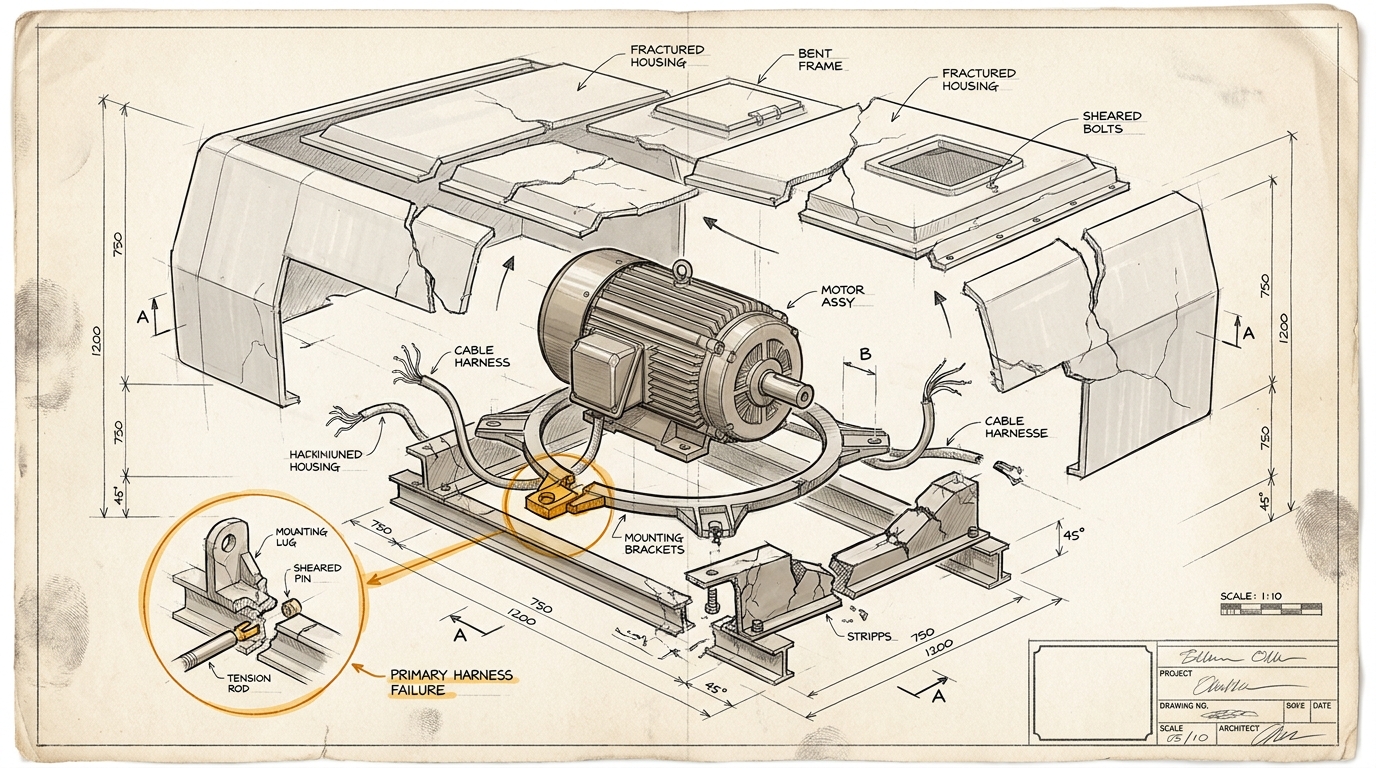

In the harness. In the cache. In the session logic. In the question of what gets preserved, discarded, or silently changed.

That sounds technical. It is. But only at first glance. Really, it is a management observation.

We still talk about AI as if the model were the place where quality either appears or disappears. As if the real magic lived there. Or the real problem. I believe that less and less, at least in the practical reality where these systems are now being used.

Because what people experience as “better” or “worse” in day-to-day work is often not the naked model. It is the work system around the model. How much context survives. How reliably a tool can return to earlier decisions. How transparently limits, cache rules, or behavioral changes are communicated. Whether the working relationship feels dependable.

Simon Willison drew that point out very clearly. And paddo sharpened it from another angle: one piece on trust and visibility, another on guardrails and testing logic. Those two themes are more tightly linked than they first appear.

If an agent writes code today and fails to hold the same thread tomorrow, that is not just a quality problem. It is a governance problem for the system. Something is wrong with the guardrails. Or with how they were changed. Or with the fact that nobody talked about those changes clearly.

And that is where this becomes interesting for companies. Because the operational question is no longer just: Which model is better?

It becomes: Which work system do I trust to remain predictable under load?

I can feel my own view shifting here. A few months ago, I probably would have filed an incident like this under tooling. Annoying, but peripheral. Now I think this is where the real product core starts to show itself.

Not output alone.

The reliability of the environment.

That also applies to the third piece in this small cluster, the one with the beautifully brutal phrase about the “idiot savant.” I think there is something real in that. Not as theater, but as a diagnosis of work. Once agents become productive, good prompts and a bit of TDD are no longer enough. Then you need different forms of checking. Different forms of monitoring. Different forms of guardrails. Not fewer tests. More serious ones.

Maybe that is the more mature way to see these tools. Not: The agent is smart, so it will know what it is doing. But: The agent is only as useful as the system that contains it, remembers for it, constrains it, and makes it observable.

That is not a minor correction. It is a different layer of responsibility.
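To make "observable" a little less abstract, here is a minimal sketch of what it could mean at the harness level. It assumes a hypothetical `ContextAudit` helper that is told, on every turn, which pieces of context the agent still has. The class, the method name, and the item labels are all invented for illustration; this is not how Claude Code or any real harness works internally.

```python
from dataclasses import dataclass, field


@dataclass
class ContextAudit:
    """Hypothetical harness-side log of what context survives between turns."""

    previous: set[str] = field(default_factory=set)
    events: list[str] = field(default_factory=list)

    def record_turn(self, turn: int, context_ids: set[str]) -> set[str]:
        """Compare this turn's context with the last one and log what vanished."""
        dropped = self.previous - context_ids
        for item in sorted(dropped):
            self.events.append(f"turn {turn}: dropped {item}")
        # Remember the current context so the next turn can be compared to it.
        self.previous = set(context_ids)
        return dropped


# Illustrative labels only; a real harness would use its own identifiers.
audit = ContextAudit()
audit.record_turn(1, {"design-decision", "file-list", "test-plan"})
dropped = audit.record_turn(2, {"file-list", "test-plan"})

print(dropped)       # {'design-decision'}
print(audit.events)  # ['turn 2: dropped design-decision']
```

The point is not the dozen lines of code. It is that a silently discarded decision becomes a visible event somebody can ask about.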

And I suspect we are only at the beginning of that shift. The next wave of AI problems will not just come from hallucinations or wrong answers. It will also come from work environments that feel like products but are, in truth, still experimental setups.

Maybe that is the sentence that stayed with me after reading all three pieces:

The quality gap between two AI tools no longer sits only in the model. Increasingly, it sits one layer up.

Ask yourself, or ask your AI: Which of your AI tools do you currently trust at the model level, even though what really needs to be trustworthy is the work system around it?