From Risk to Product

Two weeks ago, according to the leak, Claude Mythos was still the model Anthropic could not release into the world. Too dangerous for cybersecurity. Too capable in exactly the wrong areas. This week, that same capability has become a product: Project Glasswing, a program for security research under controlled conditions.

That is not surprising in itself. Of course every company tries to turn its strongest capability into a business. What is interesting is something else: how quickly the narrative flipped.

Yesterday, the same capability was the reason for restraint. Today, it is the reason for market entry.

The capability itself did not change morally in the meantime. The packaging changed. Instead of "too risky to release," it is now "valuable for selected partners within the right security framework." Same model, different frame, different audience, different language.

This pattern is likely to repeat over the coming months, and not only at Anthropic. Whenever frontier models hit a boundary where open availability is too risky but withholding the capability entirely is too expensive, a third space emerges in between: controlled commercialization.

This is not just a safety story. It is a go-to-market pattern.

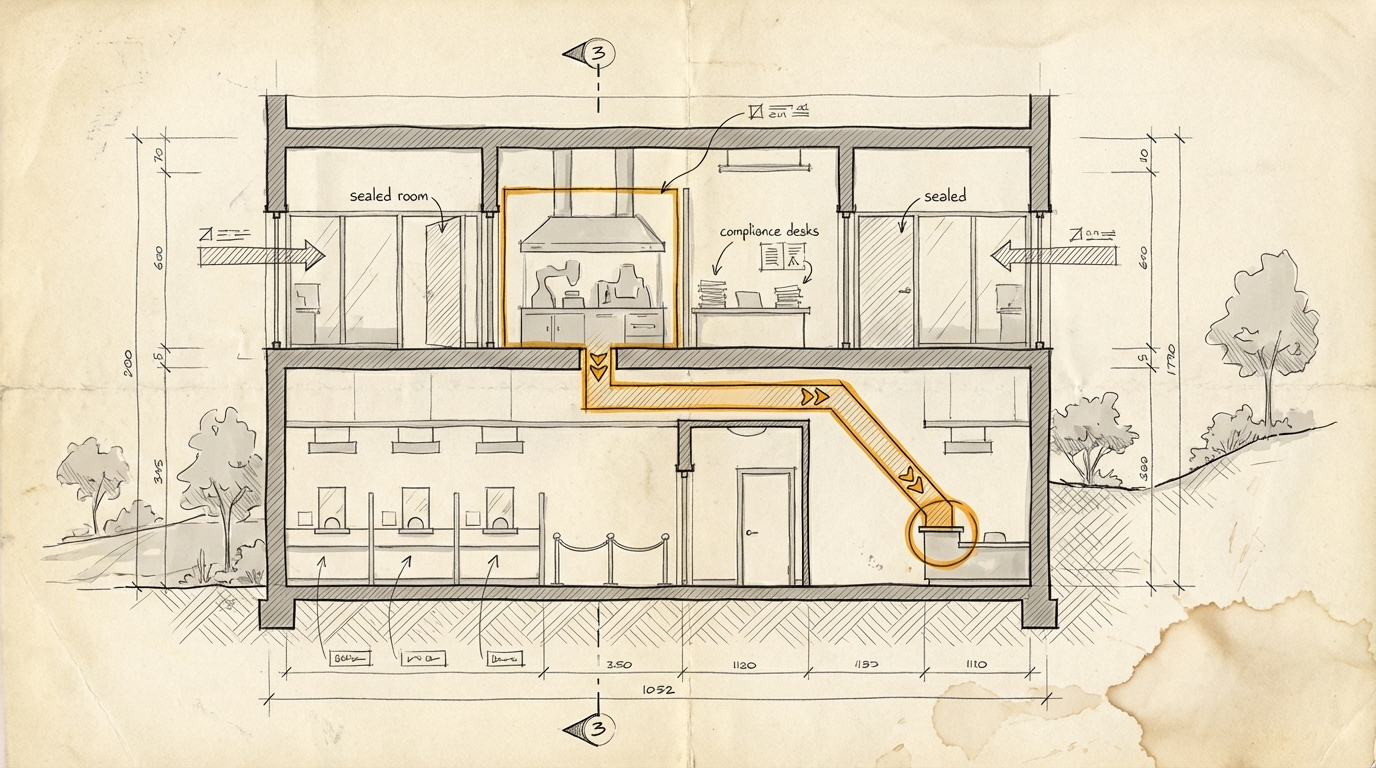

A company realizes that a capability is too difficult to justify publicly, but too valuable to leave unused internally. So it builds neither an open product nor a complete ban. It builds a program. Access for selected groups only, with guardrails, monitoring, and a narrative that translates risk into responsibility.

That is how a problem turns into a product category.

From our perspective, this is above all a terrain signal. The environment is shifting. Between "open release" and "closed lab," a market is emerging for capabilities that are too powerful, too sensitive, or too difficult to explain for the normal product channel. If you think about AI only as a model comparison, you miss this layer entirely. What matters is not just what a model can do. What matters is under which institutional conditions those capabilities are allowed to be sold. And, not least, who can afford early access.

You could also put it more bluntly: safety is no longer just the limit of the product. Safety becomes part of the product.

That does not make the move cynical. It may be entirely sensible to make a powerful cyber model available only in tightly controlled contexts. But we should not pretend this is only about risk reduction. It is also about packaging, access control, and pricing logic.

So the more useful question for companies is not whether a model is "too dangerous." The more interesting question is: which capabilities in your organization are still officially treated as exceptional cases, while in reality they are already being prepared for a future product form?

Ask yourself, or ask your AI: Which sensitive capabilities in your organization are still being described as risks, even though they are already being treated like future products?

Sources

- paddo.dev: Project Glasswing: Anthropic Weaponizes Its Own Risk (2026-04-08)

- Simon Willison: Anthropic's Project Glasswing - restricting Claude Mythos to security researchers - sounds necessary to me (2026-04-07)

- Latent Space: [AINews] Anthropic @ $30B ARR, Project GlassWing and Claude Mythos Preview (2026-04-08)