Evaluation Is the New Leadership Work

A company proudly presents its AI KPIs. Adoption is high. Processing time is down. Output per person is up. Cost per task is falling. Everything seems to be moving in the right direction, at least if movement is all you look at.

Things become more interesting when you ask: does any of this prove value creation, or does it merely show activity?

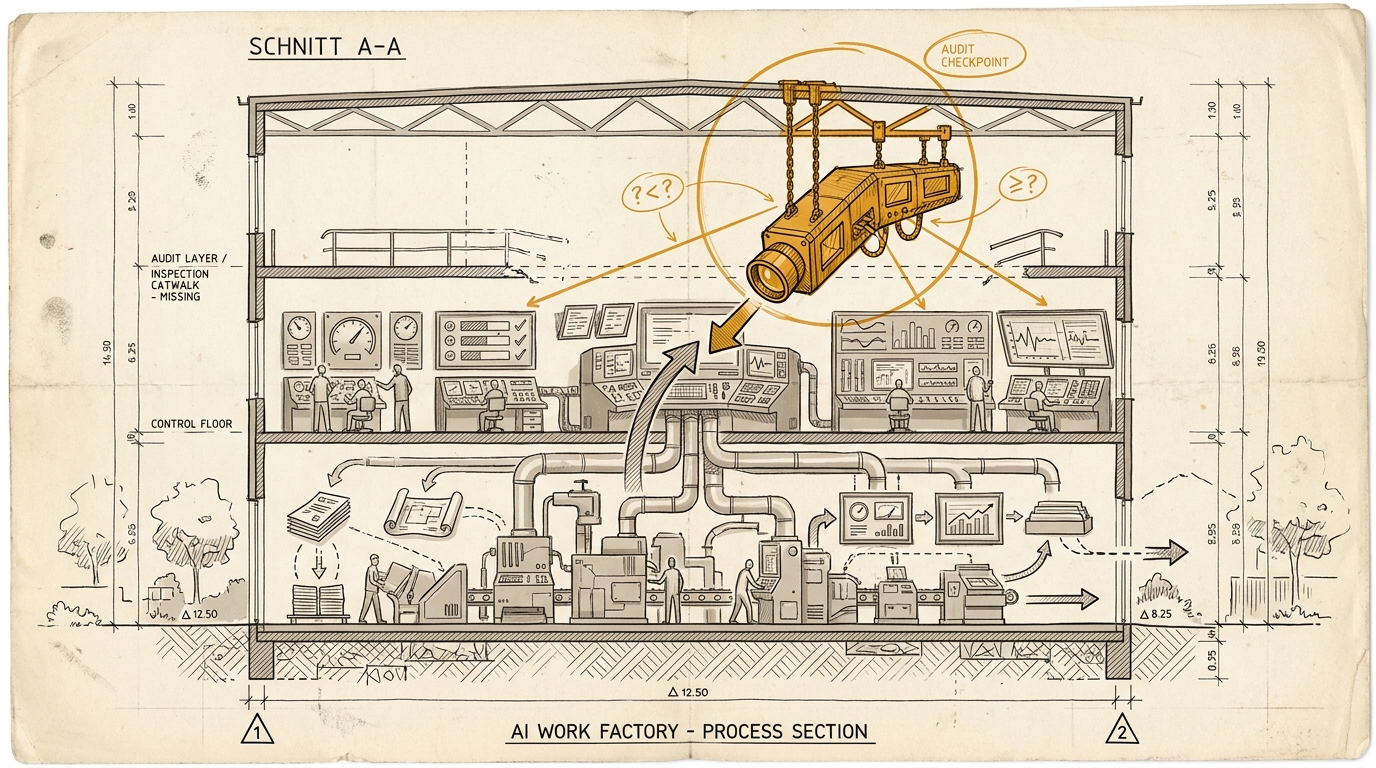

That is exactly where the issue turns. Many organizations measure very neatly how much their system is moving. They measure far less cleanly whether it is moving in the right direction. Not because they lack data. Because they confuse control with evaluation. They build dashboards, metrics, sign-off paths, and review steps. What is missing is the layer above all of that, the one that asks whether the steering apparatus is even measuring the right thing.

That is why evaluation, in a world of cheaper execution, stops being a quality topic and becomes a leadership topic.

We like talking about models, tools, and workflows. We talk less willingly about the fact that once execution gets cheap, the bottleneck does not automatically sit in better prompting. It often sits in something slower, scarcer, and politically less convenient: judgment.

Who checks whether an output is good enough? Who notices when a metric has become a proxy? Who pushes back when everything looks more efficient, while the organization is quietly learning to work more cleanly in the wrong direction?

That is not a side question. That is the new leadership work.

Control is not evaluation

AI control looks professional. It comes with dashboards, usage figures, token cost views, automation rates, review processes, sign-off steps, and escalation paths. The problem is that much of it first tells you that something is running. Not that it is right.

An organization can be excellently controlled and still poorly evaluated.

Then it may know:

- how often a tool was used

- how quickly a process was completed

- how many outputs were produced

- how much cost was saved

But it may still not know clearly enough:

- whether the output actually improved in substance

- whether the system is optimizing for the right goal

- under which conditions it fails systematically

- where human review capacity is no longer keeping up

- which metric is now comforting rather than explanatory

Control asks: is the system running? Evaluation asks: is it running in the right direction, and how would we know if it is not?

That difference is larger than many dashboards suggest.

The new bottleneck is judgment capacity

As long as execution was expensive, much of quality could be organized through production logic alone. Teams had to prioritize because they could not build everything at once. Feedback came more slowly. Loops were more expensive. Part of the quality logic was built into scarcity.

Now part of that limitation drops away.

AI makes it cheaper to:

- generate variants

- summarize analyses

- produce drafts

- deliver code, text, images, or reports at scale

At first that feels like a straightforward productivity gift. Often it is. But it also moves the bottleneck.

If the organization can produce five times more, that does not automatically increase the number of people:

- who review that output

- who can distinguish useful from dangerous

- who notice that something is formally acceptable but strategically off

- who keep the trade-offs straight

That is when three familiar patterns appear.

1. More output, same judgment capacity

The result is congestion. Either things get approved later, or they get approved more superficially. Both can look manageable in reporting for a while. But quality then bends either into delay or into shallowness.
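The arithmetic behind this congestion can be made explicit. The following is a minimal sketch, not a measurement: the function names and all numbers (20 outputs per week before, 100 after, 600 reviewer minutes per week) are hypothetical and chosen only to illustrate the two ways quality can bend.

```python
def review_backlog(outputs_per_week: int, reviews_per_week: int, weeks: int) -> list[int]:
    """Unreviewed items at the end of each week if review depth stays the same."""
    backlog = 0
    history = []
    for _ in range(weeks):
        # Whatever is not reviewed this week carries over to the next one.
        backlog = max(0, backlog + outputs_per_week - reviews_per_week)
        history.append(backlog)
    return history


def minutes_per_item(review_minutes_per_week: float, outputs_per_week: int) -> float:
    """Review time left per item if everything must be signed off in the same week."""
    return review_minutes_per_week / outputs_per_week


# Hypothetical numbers: output grows fivefold, review capacity does not.
print(review_backlog(20, 20, 4))    # [0, 0, 0, 0]
print(review_backlog(100, 20, 4))   # [80, 160, 240, 320]
print(minutes_per_item(600, 20))    # 30.0
print(minutes_per_item(600, 100))   # 6.0
```

Under these assumed numbers the organization either accumulates 80 unreviewed items per week (delay) or cuts review time per item from 30 minutes to 6 (shallowness). A throughput dashboard would show neither effect directly.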

2. Evaluation gets formalized into schema

To cope with the volume, review steps become more standardized. Checklists. Gates. Scorecards. Standards. That can be useful. It becomes dangerous once the organization forgets the difference between faster review and actual judgment.

Checklists are good at recurring errors. They do not replace the ability to recognize a new error as a new error.

3. Evaluation gets invisibly outsourced

Then implicit judge roles emerge. Individual seniors, leads, or domain heads carry the final evaluative burden without their criteria being visible, documented, or scalable. The company thinks it has evaluation logic. In reality it has people quietly plugging holes.

That can work for a while. It is not a robust architecture.

Goodhart moves faster than the board

Charles Goodhart gave us the familiar observation that once a metric becomes a target, it loses part of its value as a measure. That sounds abstract. In AI organizations it is painfully concrete.

The moment a metric becomes important enough, the organization starts adapting to it. Not necessarily out of cynicism. Often just rationally.

You can already see the pattern:

- high AI usage gets read as transformation progress

- time saved gets booked as value created

- lower headcount gets sold as a more resilient operation

- faster output production gets mistaken for better organization

None of that is automatically wrong. All of it can become wrong the moment it replaces the underlying question.

Then steering turns into self-soothing.

A high adoption rate may mean teams have integrated AI sensibly into their work. It may also mean people are opening the tool frequently because that usage is visible and rewarded. Lower processing time may mean efficiency. It may also mean risk has been quietly pulled forward or pushed downstream. Saved hours may be real relief. They may just as quickly be converted into more meetings or a tighter pace.
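One way to keep such a metric honest is to track it next to an outcome it is supposed to stand for, and to flag the moment the two drift apart. The sketch below assumes a hypothetical adoption rate as the proxy and a hypothetical "accepted without rework" share as the outcome. The function names, the sample data, and the 0.2 threshold are illustrative assumptions, not a recommended standard.

```python
def relative_change(series: list[float]) -> float:
    """Change from the first to the last value, relative to the first value."""
    first, last = series[0], series[-1]
    return (last - first) / first


def proxy_outruns_outcome(proxy: list[float], outcome: list[float], min_gap: float = 0.2) -> bool:
    """True if the proxy grew noticeably faster than the outcome it should reflect."""
    return relative_change(proxy) - relative_change(outcome) > min_gap


# Hypothetical quarterly figures.
adoption_rate = [0.30, 0.45, 0.60, 0.75]           # share of staff using the tool weekly
accepted_without_rework = [0.62, 0.61, 0.63, 0.62]  # share of sampled outputs needing no rework

if proxy_outruns_outcome(adoption_rate, accepted_without_rework):
    print("Adoption is rising without the outcome following: check what is being rewarded.")
```

A flag like this proves nothing on its own. It only marks the point at which someone has to ask the underlying question again instead of reading the green number as progress.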

A lot of companies are not building learning systems right now. They are building metrically polished self-deception.

The audit layer

That is why operations and control are not enough. There has to be someone or something above them that does not only check outputs, but checks the review logic itself.

Operations produces work. Control measures, allocates, and monitors. Audit asks the more uncomfortable question: are these metrics and reviews actually showing us reality?

That is where the harder questions begin:

- Why do we believe this metric is measuring something that matters?

- How could it rise without real value rising with it?

- Under which conditions does our review model start to fail?

- Which type of error do we systematically see too late?

- Where is the audit trail outside the system itself?

At that point evaluation is no longer a purely technical concern.

Because the audit layer does not only decide how the organization checks things. It decides which forms of truth are allowed to count at all.

Why this is leadership and not just quality assurance

Evaluation sounds harmless as long as you imagine quality control. An output is good or bad. A model works or it does not. A test case passes or it does not.

Inside companies, though, evaluation decides more than that. It determines:

- which work counts as good

- which risks become visible

- which errors are tolerable

- which compromise between speed and accuracy is acceptable

- which form of judgment gets built up in the system, and which gets pushed out of it

That is exactly why this is leadership work.

Because this is where the organization decides what it is actually going to learn toward.

If the system of targets is built badly, the company learns the wrong thing faster. If the review logic rewards reportability, reportability will grow. If review capacity is underestimated, production expands into a sign-off gap. If local metrics beat system effects, the organization produces clean local wins with a poor overall result.

Evaluation is therefore not the closing stage after the work. It is the mechanism that determines which work is likely to repeat.

The German advantage is not more bureaucracy

The DACH angle matters here because it is easy to misread.

German governance often feels like friction: works councils, data protection, internal audit, documentation duties, sign-off culture. Anyone who reads AI only as a productivity tool sees mainly drag. And yes, that drag exists, especially when those layers are used badly.

But that reading is too shallow.

Once execution gets cheaper, the value of serious evaluation rises. Then exactly the things that used to look like annoying control start becoming useful:

- an audit trail outside the system itself

- documented sign-off paths

- traceable evaluation logic

- institutionalized skepticism toward pretty dashboards

Of course this can harden into bureaucracy. There is no automatic virtue here. But it is still a real advantage compared with a pure speed narrative in which control scales while the steering logic itself never gets seriously examined. Putting speed first and suspicion second is not a healthy way to run leadership.

Co-determination often forces better questions than many growth decks do.

The moment work is accelerated, compressed, or algorithmically re-evaluated, a neat KPI deck stops being enough. Management then has to explain:

- what is actually being measured

- what quality depends on

- which risks are supposed to stay visible

- where human judgment remains non-optional

That is exhausting. It is also closer to reality.

Who is allowed to attack the metric itself?

That is the leadership question at the end of this piece.

- Not: Which metrics do you have?

- Not: Which tools are you using?

- Not: Which sign-off processes exist?

But: Who in your organization is allowed to challenge the review logic itself without immediately being treated as a brake?

Who is allowed to say that the green metric may only be measuring activity? Who is allowed to point out that more output with unchanged review capacity is not an efficiency gain, but a quality risk?

If nobody is institutionally holding that role, then you do not merely lack another tool. You lack the audit layer.

And that is where the new leadership work begins: with the question of whether your own steering apparatus can still tell the difference between activity and truth.
