Executive Briefing: Your Website Has a New Audience

Many companies still optimize their website for visits. At the same time, it is increasingly being used by systems that barely show up in those metrics. Any company that fails to adjust its goals, KPIs, and incentive logic will end up punishing the very teams acting strategically.

Most AI debates about websites are still stuck at the technical layer. Crawlers. Rendering. Schema markup. None of that is wrong. But it is not the real point either.

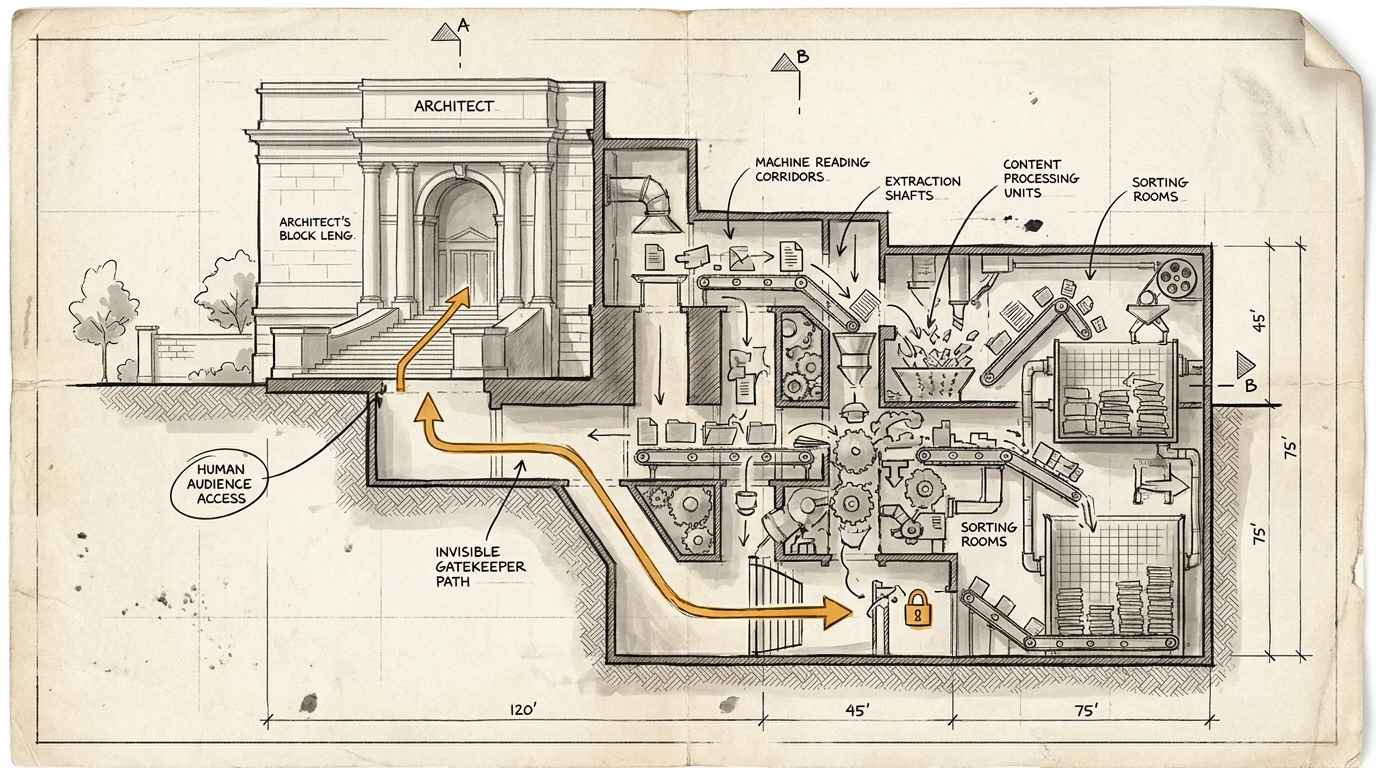

The real point is this: more and more often, a system now sits between your website and the human being on the other side, reading, comparing, and summarizing before that person ever arrives. Your website has acquired a second usage logic, while most KPI systems still only recognize the first.

That is how organizations start punishing the right teams for the wrong signal.

Why this briefing matters now

What is becoming visible here is not just another SEO trend. It is a change in the logic of the web itself.

Pew Research showed as early as July 2025 that Google AI Overviews were already changing click behavior in ways no serious team should still treat as a side note in 2026. Users were much less likely to click classic search links when an AI summary appeared: they clicked a regular result in only 8 percent of searches that showed a summary, compared with 15 percent of searches without one. Sources linked inside the summary itself were clicked just 1 percent of the time.
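To make the size of that gap tangible, the short sketch below computes the relative change between the two click rates cited above. It uses only the percentages from this briefing; the function name `relative_change` is an illustrative choice, not part of any reporting tool.

```python
# Click rates as cited in the Pew figures above.
CLICK_RATE_WITHOUT_SUMMARY = 0.15  # regular result clicked, no AI summary shown
CLICK_RATE_WITH_SUMMARY = 0.08     # regular result clicked, AI summary shown


def relative_change(before: float, after: float) -> float:
    """Return the relative change from `before` to `after` (-0.25 means -25%)."""
    return (after - before) / before


drop = relative_change(CLICK_RATE_WITHOUT_SUMMARY, CLICK_RATE_WITH_SUMMARY)
print(f"Relative change in clicks on regular results: {drop:.0%}")
```

In relative terms, clicks on regular results were roughly 47 percent lower when a summary was present. That is the order of magnitude a traffic-based KPI silently absorbs.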

At the same time, Cloudflare has turned AI Crawl Control into a dedicated product for making AI access to websites visible, manageable, and in some cases even monetizable. That alone is a signal. This new audience is not hypothetical. It has become operationally relevant enough that infrastructure providers now treat it as a separate control problem.

So the real shift is not: “People are disappearing from websites.”

It is this: more and more often, a gatekeeper now sits between your website and the human being, reading, classifying, and summarizing before that person ever gets there.

The sentence worth bringing into your next meeting

Your website has a new audience. Your annual bonus plan does not know it exists.

Yes, that is deliberately sharp. But it also describes exactly where many steering errors are being made right now.

A website no longer has just one visible audience made up of people who click, read, scroll, buy, or fill out a form. It also has a machine audience: systems that retrieve content, compare products, extract brand signals, condense information into answers, and often send people to your site only later, or not at all.

The mistake would be to conclude from this that human audiences are becoming irrelevant.

The real point is something else: the website now has a second job, while most KPI systems still evaluate only the first.

Where the old traffic reflex starts to fail

Many teams still interpret falling traffic as automatic proof of falling relevance.

In the old logic, that was often reasonable. If fewer people came to the site, that usually pointed to something real: lower visibility, a weaker campaign, softer demand, or stronger competition. To be fair, that logic had already started to crack once consent banners and tracking friction became normal.

In the new AI-mediated logic, the signal becomes considerably messier.

Fewer human visits can now mean several things at once:

- lower relevance

- or simply a more effective AI intermediary between you and the user

- or a shift in discovery from search and social toward LLMs and agents

- or better qualification before a human being reaches the site at all

That does not make traffic worthless.

But traffic alone is increasingly bad at separating a genuine loss of visibility from strategically useful pre-processing.

That is where existing steering systems start to tilt.

Two kinds of websites, two kinds of shift

To keep this concrete, you need to distinguish between at least two cases.

1. Conversion pages

These are pages or site areas built around a clear call to action: buy, register, request, book a demo, start a trial.

Here, the human stays central. Just later.

The machine increasingly takes over discovery, first-pass evaluation, comparison, and parts of the navigation. That makes the website less of an entry gate and more of a validation, trust, and closing layer.

For these pages, lower traffic is not automatically a disaster as long as the quality of the remaining visitors improves with it, along with conversion rate, average order value, or customer lifetime value.

The management question is therefore no longer just: How many people are arriving?

It is: How prepared are they when they arrive? What has a system already told them beforehand? And how well does the handoff from machine-mediated pre-sales into actual conversion work?
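The claim that lower traffic can coexist with a better outcome on conversion pages can be sketched with a simple funnel calculation. All numbers below are invented purely for illustration, and `FunnelSnapshot` is a hypothetical helper, not a metric definition from any analytics product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FunnelSnapshot:
    visits: int                 # human visits in the period
    conversion_rate: float      # share of visits that convert
    average_order_value: float  # revenue per conversion

    @property
    def revenue(self) -> float:
        return self.visits * self.conversion_rate * self.average_order_value

    @property
    def revenue_per_visit(self) -> float:
        return self.conversion_rate * self.average_order_value


# Hypothetical scenario: fewer but better-prepared visitors.
before = FunnelSnapshot(visits=10_000, conversion_rate=0.020, average_order_value=120.0)
after = FunnelSnapshot(visits=7_000, conversion_rate=0.032, average_order_value=125.0)

visit_change = (after.visits - before.visits) / before.visits
revenue_change = (after.revenue - before.revenue) / before.revenue

print(f"Visits:  {visit_change:+.0%}")
print(f"Revenue: {revenue_change:+.0%}")
print(f"Revenue per visit: {before.revenue_per_visit:.2f} -> {after.revenue_per_visit:.2f}")
```

In this invented scenario, visits fall by 30 percent while revenue rises from 24,000 to 28,000. A team measured only on visits would be marked down for exactly the period in which the page performed better.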

2. Brand and information pages without direct conversion

Here the shift is more radical.

Many brand websites without direct sales used to rely on a simple assumption: traffic itself was already a usable proxy for relevance. People came to the site, informed themselves, got a feel for the brand, and later moved on to another touchpoint such as retail, a platform, a distributor, or a physical point of sale.

If AI systems increasingly do that information work first, the website can remain strategically important, or even become more important, while direct human visits decline.

That is where the old KPI reflex flips into something actively misleading.

Because the question is no longer only:

“How many people visited the site?”

Increasingly, it becomes:

“Are the systems that pre-filter for humans understanding and representing the brand correctly?”

Why this is especially awkward for German companies

In the DACH region, this shift is colliding with a web reality that is often already badly organized.

A large share of corporate websites are still optimized mainly for three things:

- campaign reporting

- internal ownership boundaries

- polished surfaces for people who have already almost decided

That is enough in a world where the site mainly functions as a showroom.

It is no longer enough in a world where systems read, compare, and summarize first.

In Germany, this becomes visible especially quickly in three kinds of companies:

- manufacturers without direct sales, whose sites mainly exist to carry brand, product world, and trust

- regulated providers, where precise descriptions of services, liabilities, and product details actually matter

- B2B companies with complex offers, where humans previously needed a lot of implicit prior knowledge just to understand what was being offered

For these companies, the website is not just a piece of marketing real estate. It increasingly becomes reading material for systems that shape visibility, comparison, and preselection further downstream.

Put more bluntly: anyone who still treats this as a pure SEO issue is underestimating the problem.

The actual steering problem

The new audience is not in conflict with the old one.

The conflict only appears once it hits the steering system.

Because the website suddenly has to do two things at once:

- persuade humans, build trust, and convert where needed

- remain clear, accessible, extractable, and citable for machines

Those two logics overlap in part. But they are not the same.

A human reacts to dramaturgy, design, tone, social proof, friction, and timing.

A machine reacts to clarity, structure, completeness, freshness, and stable technical accessibility.

If you optimize only for the human journey, you lose machine legibility.

If you optimize only for machines, you will eventually damage the human experience.

So the right question is not:

“Should we optimize for humans or for machines?”

It is:

“Which parts of our website serve which job for which audience, and which metrics still capture that job in a meaningful way?”

This is not a tooling question. It is an operating model question.

Why classic analytics show too little

There is another problem. The new audience is often invisible exactly where marketing teams are used to looking.

Cookie-based analytics do not see many of these interactions properly. Text-based agents often do not show up there at all, or not in a useful way. Even where agentic visits become measurable, they are easy to misread as noise, direct traffic, or technical anomalies.

That creates a dangerous asymmetry:

- the website is doing real work for an additional audience

- CDN, server, and log data can detect it

- the classic marketing dashboard often cannot

That is why many companies notice the shift too late.

Not because it is not happening.

But because the instruments they use to look at the website were built for a different logic of the web.
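As a rough illustration of what log data can reveal that a marketing dashboard cannot, the sketch below counts requests per user-agent family in access log lines. It assumes the common Combined Log Format, and the marker list is an illustrative, incomplete selection of crawler names that should be verified against your own logs. The sample lines are made up and simplified. User agents can also be spoofed, so treat the result as a first proxy, not as proof.

```python
import re
from collections import Counter

# Illustrative, incomplete list of user-agent substrings (assumption: extend
# and verify this against your own logs and each vendor's documentation).
AI_AGENT_MARKERS = (
    "GPTBot", "ChatGPT-User", "OAI-SearchBot",
    "ClaudeBot", "PerplexityBot", "CCBot",
)

# Combined Log Format: the user agent is the last quoted field on the line.
LOG_LINE = re.compile(
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"\s*$'
)


def classify_agent(user_agent: str) -> str:
    """Return the first matching AI marker, or 'other' if none matches."""
    lowered = user_agent.lower()
    for marker in AI_AGENT_MARKERS:
        if marker.lower() in lowered:
            return marker
    return "other"


def count_audiences(lines) -> Counter:
    """Count requests per audience label; unparseable lines are tallied separately."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match is None:
            counts["unparsed"] += 1
            continue
        counts[classify_agent(match.group("agent"))] += 1
    return counts


# Hypothetical, simplified sample lines.
SAMPLE = [
    '203.0.113.7 - - [10/Jan/2026:10:00:00 +0000] "GET /products HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; GPTBot/1.0"',
    '198.51.100.4 - - [10/Jan/2026:10:00:05 +0000] "GET / HTTP/1.1" 200 20480 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"',
    '192.0.2.9 - - [10/Jan/2026:10:00:09 +0000] "GET /brand HTTP/1.1" 200 7300 "-" "Mozilla/5.0; compatible; ClaudeBot/1.0"',
    'not a log line',
]

for label, hits in count_audiences(SAMPLE).most_common():
    print(f"{label}: {hits}")
```

Matching on substrings keeps the sketch simple. A production setup would more likely rely on the bot classification that a CDN or log platform already provides.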

What needs to change now

Do not rebuild everything. But do examine four things immediately.

1. Map the website by usage logic, not by sitemap

Which parts primarily serve conversion? Which ones build trust? Which ones orient the brand? Which ones mainly serve machine extraction?

2. Review KPI and incentive logic

Where are you still rewarding raw visit volume even though the strategic value is already being created somewhere else?

3. Look below the analytics surface

If all you see is GA4, Piwik PRO, or Adobe Analytics/CJA, you are not seeing enough. CDN, server, and log data are becoming the prerequisite for perceiving the new audience at all.

4. Separate metrics by website type

Conversion pages need different metrics than brand and information pages. If you keep looking at everything through the same traffic lens, you are not measuring efficiency. You are measuring convenience.
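Points 1 and 4 above can be sketched as a small lookup: site sections are mapped to a usage logic, and each usage logic gets its own metric set. The path prefixes and most metric names below are hypothetical placeholders. Only conversion rate, average order value, and customer lifetime value are taken from this briefing.

```python
from enum import Enum
from typing import List, Optional


class UsageLogic(Enum):
    CONVERSION = "conversion"
    TRUST = "trust"
    BRAND_ORIENTATION = "brand orientation"
    MACHINE_EXTRACTION = "machine extraction"


# Hypothetical path prefixes; the longest matching prefix wins.
SECTION_MAP = {
    "/shop/": UsageLogic.CONVERSION,
    "/demo/": UsageLogic.CONVERSION,
    "/about/": UsageLogic.TRUST,
    "/products/": UsageLogic.BRAND_ORIENTATION,
    "/products/specs/": UsageLogic.MACHINE_EXTRACTION,
}

# Placeholder metric sets per usage logic (assumption: names are illustrative).
METRICS = {
    UsageLogic.CONVERSION: ["conversion_rate", "average_order_value", "customer_lifetime_value"],
    UsageLogic.TRUST: ["returning_visitors", "time_on_reference_pages"],
    UsageLogic.BRAND_ORIENTATION: ["ai_agent_requests", "brand_representation_checks"],
    UsageLogic.MACHINE_EXTRACTION: ["ai_agent_requests", "content_freshness_days"],
}


def usage_logic_for(path: str) -> Optional[UsageLogic]:
    """Return the usage logic of the longest matching prefix, or None if unmapped."""
    matches = [prefix for prefix in SECTION_MAP if path.startswith(prefix)]
    if not matches:
        return None
    return SECTION_MAP[max(matches, key=len)]


def metrics_for(path: str) -> List[str]:
    """Return the metric set for a path; unmapped paths are flagged explicitly."""
    logic = usage_logic_for(path)
    if logic is None:
        return ["unmapped: assign a usage logic first"]
    return METRICS[logic]


for path in ("/shop/cart", "/products/specs/x100", "/products/overview", "/press/"):
    print(path, "->", metrics_for(path))
```

The exact metric names matter less than the design choice: no page is evaluated before someone has decided which job it is supposed to do.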

Honesty check

Three objections are fair.

First: Not every industry will feel this shift at the same speed. Businesses that sell mostly through established relationships, field sales, or long-standing customer ties will tip later than digital comparison markets.

Second: Traffic still matters. Nobody should pretend visits have suddenly become irrelevant. The mistake is not measuring traffic. The mistake is treating traffic as the whole truth.

Third: The new measurement models are still rough. Many companies will have to work with messy proxies at first. That is not elegant. But it is still better than incentive systems that ignore an audience that is already reading the site in day-to-day operation.

Framing

Most website debates in recent years were debates about channels: search, social, paid, organic, CRO.

That debate is now shifting to a deeper layer.

It is no longer only about how people get to your website. It is about who is using your website before a person ever sees it.

That is why this topic does not belong in the SEO silo. And not in a technical AI task force either.

It belongs in management.

Because the strategic question is not:

“How do we get more traffic back?”

It is:

“How do we steer a website that has to work for humans and remain legible for machines when our current target system only sees the human half?”

Any company not asking that question now does not have a website problem.

It has a steering problem.

And steering problems inside organizations usually become visible only after the wrong teams have already been punished.