Open Standard, Open Entry Point?

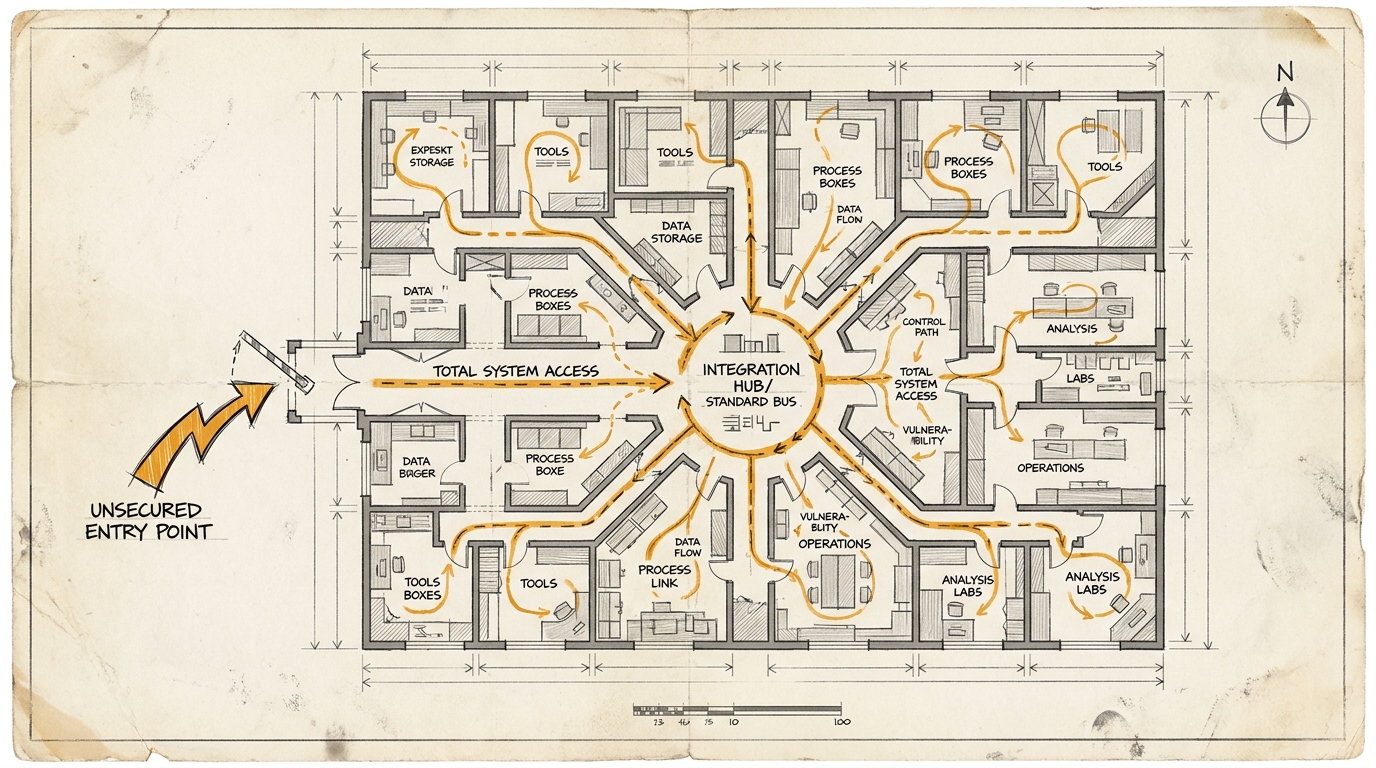

MCP, the Model Context Protocol, is often framed as neutral progress. A common standard. A shared bus that tools, agents, IDEs, and external systems can all plug into. At first glance, that sounds reasonable.

From a security perspective, that is exactly where things get interesting.

paddo picks up a point that matters far more to decision-makers than the next tool demo: what happens when a standard is sold as a universal integration layer while most of the security burden gets pushed downstream?

The claims coming out of the Ox research are not minor. Several SDKs hand commands to the operating system through STDIO-style patterns without the protocol itself imposing capability boundaries, sanitization, or stronger protective defaults. In theory, that sounds like a transport question. In practice, it can quickly turn into remote code execution risks across downstream projects, IDEs, or marketplaces. That is why the interesting part is not only the individual CVE, but the pattern underneath it.
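To make the "capability boundary" idea concrete, here is a minimal sketch of the kind of check an integrator might place in front of a STDIO launch. It is not taken from any MCP SDK. The allowlisted paths, the denied flags, and the function names are all hypothetical, and a real policy would need to be considerably stricter.

```python
import subprocess

# Hypothetical policy: exact executable paths this client agrees to launch.
ALLOWED_EXECUTABLES = {"/usr/bin/python3", "/usr/local/bin/node"}

# Hypothetical deny list: interpreter flags that turn an argument into code.
DENIED_FLAGS = {"-c", "-e", "--eval"}


class CommandRejected(Exception):
    """Raised when a server command fails the local launch policy."""


def check_server_command(command: list[str]) -> list[str]:
    """Return the command unchanged if it passes the policy, else raise."""
    if not command:
        raise CommandRejected("empty command")
    if command[0] not in ALLOWED_EXECUTABLES:
        raise CommandRejected(f"executable not allowlisted: {command[0]!r}")
    for arg in command[1:]:
        if arg in DENIED_FLAGS:
            raise CommandRejected(f"inline-code flag not permitted: {arg!r}")
    return command


def launch_stdio_server(command: list[str]) -> subprocess.Popen:
    """Start the server as a subprocess only after the policy check passes."""
    checked = check_server_command(command)
    # An argument list with shell=False avoids shell interpretation of the input.
    return subprocess.Popen(
        checked,
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        shell=False,
    )
```

The point of the sketch is where the check lives: in each integrator's code, not in the protocol. Every downstream project has to write, maintain, and audit its own version of it.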

The pattern is this: the standard benefits from ecosystem effects, while the hardening work remains with integrators.

For management, that is not a nerd detail. It is an infrastructure question. Standards only become truly valuable when they provide not just interoperability, but dependable operating conditions. An open standard that delegates most of the security work to each downstream team does not only create innovation. It also creates distributed liability, distributed uncertainty, and very predictable patch gaps.

The MCP specification itself is revealing here. It describes STDIO as a standard transport in which the client launches the server as a subprocess. For HTTP-based transports, the specification contains explicit security warnings around origin validation, authentication, and binding to localhost. The STDIO description, by contrast, does not appear to come with comparably explicit guardrails for what gets launched. That asymmetry is what makes the debate uncomfortable. The security issue does not look like an edge case at the margins of the standard. It looks like an architectural question that was addressed too late.
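For comparison, the three HTTP-side precautions (origin validation, authentication, binding to localhost) can be sketched in a few lines. This is an illustration under stated assumptions, not the specification's wording or any SDK's implementation: the origin list, the bearer-token scheme, the lowercase header keys, and the function names are all hypothetical.

```python
import hmac
from urllib.parse import urlsplit

# Hypothetical policy values, not taken from the MCP specification.
BIND_HOST = "127.0.0.1"  # listen on loopback only, not on 0.0.0.0
ALLOWED_ORIGINS = {"http://localhost:3000", "http://127.0.0.1:3000"}


def origin_allowed(origin_header):
    """Accept only origins that exactly match the allowlist."""
    if not origin_header:
        # Strict policy choice for this sketch: a missing Origin is untrusted.
        return False
    parts = urlsplit(origin_header)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        return False
    normalized = f"{parts.scheme}://{parts.netloc}".lower()
    return normalized in ALLOWED_ORIGINS


def request_permitted(headers: dict, expected_token: str) -> bool:
    """Require both an allowlisted origin and a matching bearer token.

    Assumes header names have already been lowercased by the caller.
    """
    origin_ok = origin_allowed(headers.get("origin"))
    supplied = headers.get("authorization", "")
    expected = f"Bearer {expected_token}"
    # Constant-time comparison avoids leaking the token through timing.
    token_ok = hmac.compare_digest(supplied.encode(), expected.encode())
    return origin_ok and token_ok
```

A server following this sketch would bind its listener to `BIND_HOST` and call `request_permitted` before handling any request. Rejecting requests without an `Origin` header is a deliberately strict choice here; non-browser clients often omit it, so a real deployment would have to decide how to treat them.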

Of course there is a counterargument. Anyone who starts a process should know what they are starting. Not every insecure integration point is automatically a protocol flaw. That is fair as a form of technical minimalism. It stops being sufficient, politically, once the same standard is marketed as the future convergence layer for AI tooling.

At that point the management question shifts. It is no longer just: is MCP elegant?

It becomes: do you want to rely on a standard whose openness and adoption may scale faster than the security guarantees you would actually want in production?

This is not an argument against MCP as such. It is a reminder that standardization alone does not create infrastructure quality.

When a standard simplifies connectivity while pushing the security burden downward, you are not only buying compatibility. You are buying governance work.

Ask yourself, or ask your AI: In which of your AI integration standards are you currently mistaking adoption for maturity?