Ethical Until the Website Edge

Anthropic has invested heavily in a particular story about itself: safety, constitutions, responsible AI. That is exactly why Cloudflare's new metric matters. It does not measure the model. It measures the underside of the business.

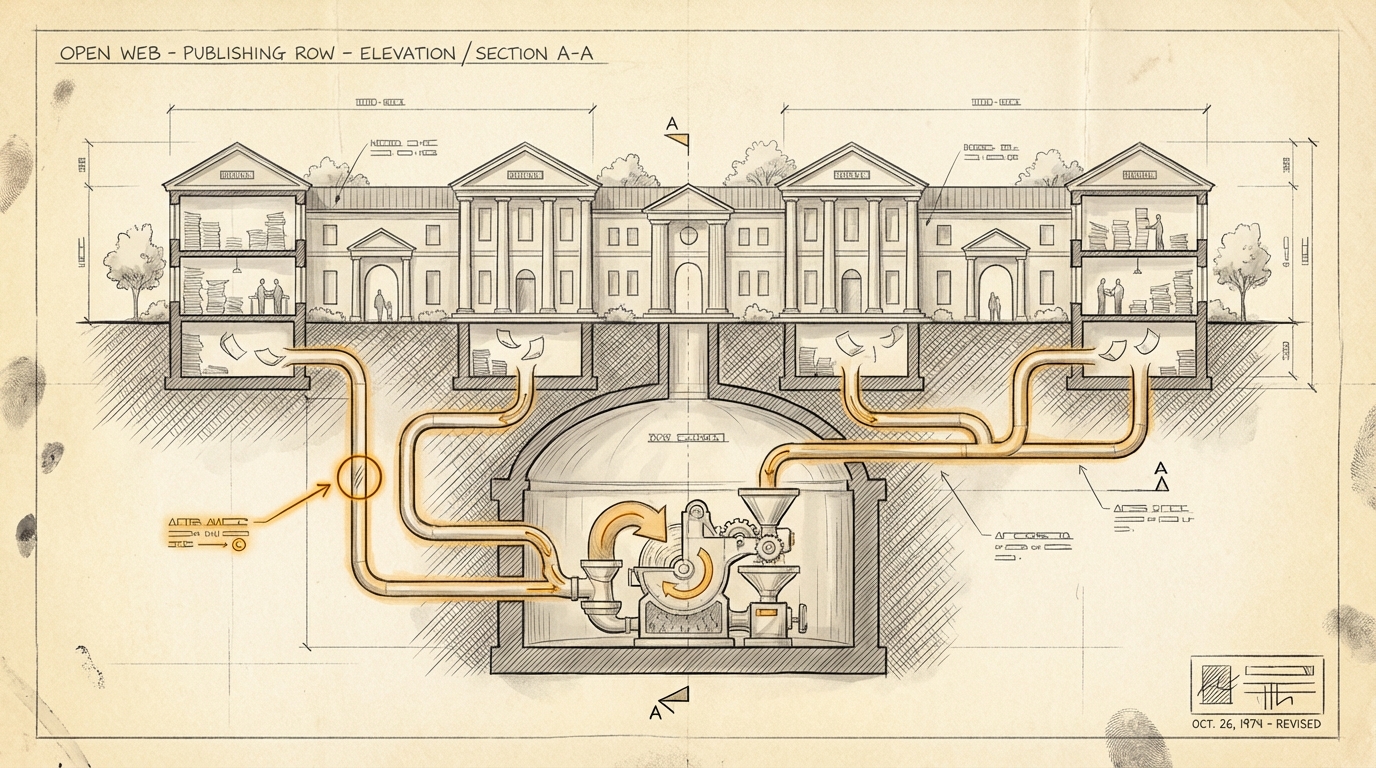

Cloudflare calls it crawl-to-refer: how often does a platform crawl HTML pages, and how often does it send users back? That used to be the basic deal of the web. Search engines indexed content for free and sent traffic back in return.

With AI systems, that deal is breaking.

Cloudflare describes this unusually clearly: models consume more content, more often, while returning relatively less traffic to the original sources. Business Insider sharpened the point on April 12, 2026, pointing to Cloudflare data for April 1 through April 7. According to BI, Anthropic's ratio stood at 8,800 to 1. OpenAI followed at 993 to 1. Google, Microsoft, and DuckDuckGo looked far more balanced.
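
To make the metric concrete, here is a minimal sketch of how such a ratio could be computed from request counts. The function name and the raw counts are hypothetical, chosen only so the output matches the ratios BI reported; they are not Cloudflare's data or Cloudflare's code.

```python
def crawl_to_refer(crawl_requests: int, referral_requests: int) -> float:
    """Crawled HTML pages per referred visit (higher means more taken than returned)."""
    if referral_requests <= 0:
        raise ValueError("ratio is undefined without at least one referral")
    return crawl_requests / referral_requests


# Hypothetical counts, picked only to reproduce the reported ratios.
examples = {
    "Anthropic": (8_800_000, 1_000),
    "OpenAI": (993_000, 1_000),
}

for platform, (crawls, referrals) in examples.items():
    print(f"{platform}: {crawl_to_refer(crawls, referrals):,.0f} to 1")
```

Read this way, 8,800 to 1 means roughly 8,800 pages fetched for every visitor sent back.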

The point is not the number alone. It is the contradiction.

If a company positions itself around responsible AI, that responsibility has to cover more than model behavior. Most AI ethics debates still focus on hallucinations, safety, bias, and alignment. Cloudflare's metric suggests a different question: How does an AI product behave toward the ecosystem it depends on?

The open web is not an abstract resource. It is publishers, trade blogs, forums, documentation, and people writing things down. If AI systems ingest that material, compress it, and answer directly without sending meaningful traffic back, this is not just a search issue. It is a compensation issue.

The caveat matters. Cloudflare states explicitly that traffic from native apps often does not include a Referer header. The ratios may therefore look higher than they really are.
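
A rough way to gauge how much this caveat could matter, as a sketch under stated assumptions: suppose some share of real referrals arrives without a Referer header and therefore goes uncounted. The observed ratio then overstates the true one by exactly that share. The shares below are hypothetical; neither Cloudflare nor BI is cited here with such a figure.

```python
def adjusted_ratio(observed_ratio: float, missing_share: float) -> float:
    """Correct an observed crawl-to-refer ratio for uncounted referrals.

    missing_share is the assumed fraction of real referrals that arrive
    without a Referer header (0.0 = none missing).
    """
    if not 0 <= missing_share < 1:
        raise ValueError("missing_share must be in [0, 1)")
    # observed referrals = true referrals * (1 - missing_share),
    # so the true ratio is smaller by the same factor.
    return observed_ratio * (1 - missing_share)


# Hypothetical shares applied to the 8,800-to-1 figure reported by BI.
for share in (0.0, 0.5, 0.9):
    print(f"{share:.0%} unattributed: {adjusted_ratio(8800, share):,.0f} to 1")
```

Under these assumed shares, even if nine out of ten referrals went uncounted, the adjusted figure would still be 880 to 1.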

Even so, the pattern remains. Cloudflare puts it plainly: legacy crawlers made publishing more economically viable, not less. Now models consume more content while sending the same or less traffic back.

That is the uncomfortable part. AI ethics does not end with the model. It may begin there, but it extends to product economics.

If even the companies with the cleanest ethics brand do not look meaningfully better when it comes to returning value to the sources, then a very practical question follows:

Who will still finance the open web in five years if AI systems continue to build their credibility on sources they barely repay?

Ask yourself, or ask your AI: Where in your own products or platforms do you already depend on an ecosystem that receives less back than you extract from it?