The Trust Tax

A few days ago, paddo.dev described a shift that looks like a pricing correction at first glance and like a new market logic at second glance. Anthropic stopped quietly blocking OpenClaw and started billing for it cleanly.

That sounds reasonable. It is. But that is not the most interesting part of the story.

Because even when the price becomes clearer, the problem does not disappear. It just moves up one layer.

TechCrunch describes the new rule plainly: Claude subscriptions no longer cover third-party harnesses like OpenClaw; that usage now runs through separate metered billing. Shortly after that comes the next incident: OpenClaw creator Peter Steinberger is temporarily banned while testing his own tool, then reinstated. At the same time, Anthropic publishes transparency figures that show the scale of enforcement already happening in the system: 1.45 million deactivated accounts in the second half of 2025, plus 52,000 appeals and 1,700 reinstatements.
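To put those enforcement figures in proportion, here is a rough back-of-the-envelope sketch. It uses only the three numbers quoted above. The ratios are my own arithmetic, not figures Anthropic reports, and they assume the appeals and reinstatements refer to the same pool of deactivations, which the published totals may not guarantee.

```python
# Figures as quoted in the text for the second half of 2025.
deactivated = 1_450_000
appeals = 52_000
reinstated = 1_700


def share(part: int, whole: int) -> float:
    """Return part/whole as a percentage."""
    return 100 * part / whole


# Assumption: all three totals describe the same cohort of accounts.
appeal_rate = share(appeals, deactivated)
reinstated_per_appeal = share(reinstated, appeals)
reinstated_per_deactivation = share(reinstated, deactivated)

print(f"Appeals per deactivation:        {appeal_rate:.1f}%")
print(f"Reinstatements per appeal:       {reinstated_per_appeal:.1f}%")
print(f"Reinstatements per deactivation: {reinstated_per_deactivation:.2f}%")
```

Under those assumptions, roughly one deactivation in 28 is appealed and roughly one appeal in 30 ends in reinstatement. The numbers alone do not say whether that reflects accurate enforcement or a weak appeals path, and that ambiguity is exactly the kind of uncertainty the rest of this piece is about.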


Each individual point is explainable. Compute is scarce. Abuse is real. Frontier models are expensive. But that is exactly what makes the broader thought more interesting: when the economics of a system come under pressure, the real price does not only show up in the tariff. It shows up in predictability. How will my vendor behave when resources get tight? What can I rely on, and more importantly, what do I have to be prepared for?

At that point, pricing is no longer the whole story. The real issue becomes the operating relationship.

Billing, limits, appeals, policy enforcement, status communication: in software, that used to be admin surface. In AI platforms, it is moving into the product core. Because anyone pulling AI into operational workflows is not just buying model capability. They are also buying predictability. Clear billing. Stable boundaries. Clear availability. Clean escalation. The confidence that a suspension is not arbitrary and that an appeals process does not exist only on paper.

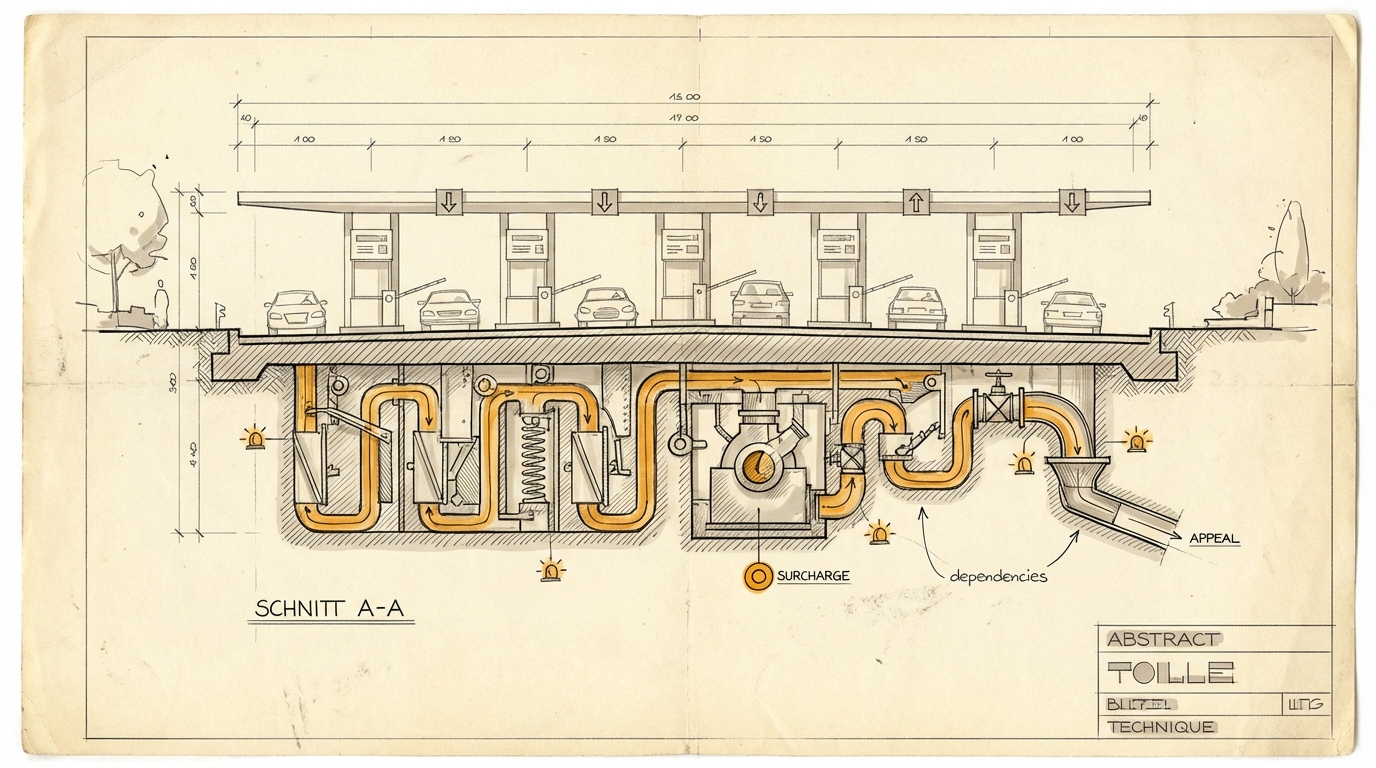

Once that layer starts wobbling, even the best model becomes more expensive organizationally. Not because the token price rises, but because uncertainty leaks into process design. Teams build buffers. Approvals become more cautious. Automation becomes more conservative. The hidden surcharge does not sit on the invoice. It sits in the company’s safety margins.

That is why “Trust Tax” is more than a polemical label. It describes a new kind of surcharge: not per token, but per unit of uncertainty.

That also distinguishes this observation from the one in "From Lock to Meter". The point there was that platforms move from policing to pricing because blocking scales worse than measuring consumption. The point here is different: even clean pricing does not solve the more important problem. If a platform wants to become the operating system for work, it must not only bill well. It has to feel institutionally trustworthy.

From the dekodiert perspective, that is a terrain signal. As models become more interchangeable, competition shifts into the layer above them. It will not be won only by whoever has the strongest capability. It will be won by whoever offers the more reliable operating relationship.

Put more bluntly: the next competitive advantage of AI platforms is not just intelligence. It is institutional trustworthiness.

And that is where things get uncomfortable. Because trust scales more slowly than revenue pressure.

Ask yourself, or ask your AI: Where in your own products or processes is scarcity already being managed through friction instead of clear rules and transparent pricing?

Sources

- paddo.dev: The Subscription Arbitrage Endgame (04.04.2026)

- TechCrunch: Anthropic says Claude Code subscribers will need to pay extra for OpenClaw usage (04.04.2026)

- TechCrunch: Anthropic temporarily banned OpenClaw's creator from accessing Claude (10.04.2026)

- Anthropic: Transparency Hub / System Trust and Reporting