Executive Briefing: Do Not Buy Agents You Cannot Operate

Buying AI agents is not ordinary software procurement. Agents touch data, permissions, and workflows, so the operating model must be settled before the purchase. The real price is not the license. It is data access, permissions, auditability, supervision, and exit.

Why this briefing?

Several enterprise AI announcements have recently piled up. At first glance, they look like separate stories.

Anthropic is building a new enterprise AI services firm with Blackstone, Hellman & Friedman, and Goldman Sachs. OpenAI has reportedly raised more than four billion dollars for a similar deployment company. SAP is acquiring Dremio and Prior Labs, that is, data infrastructure and models for structured enterprise data. Pinecone is launching Nexus as a knowledge engine for agents. ServiceNow is opening its workflow layer to external agents through Action Fabric. And CodeWall’s report on McKinsey’s Lilli shows how quickly an internal AI platform can be exposed when its APIs, permissions, and prompts are not properly operated.

These are not six separate stories.

They point to the same shift:

Business value is moving from the model into the operating layer.

The model is no longer the bottleneck. The bottleneck is everything an agent needs in order to work usefully and safely inside a real organization: data, permissions, context, workflows, auditability, cost control, approvals, and accountability.

That is why the existing, often pilot-driven procurement logic is no longer enough.

The sentence for your next vendor meeting

Do not buy agents you cannot operate.

That sounds obvious. It is not.

Many organizations currently treat agents like ordinary software: vendor meeting, demo, roadmap, security questionnaire, pilot, license, rollout. Afterwards they notice that the real questions were never answered.

- What work should the agent take over?

- What data is it allowed to see?

- What actions is it allowed to perform?

- Who notices when it is wrong?

- Who stops it when a model update changes its behavior?

- How do you get out again if the vendor, the pricing model, or your strategy changes?

For classic SaaS, these questions were important. For agents, they are foundational. An agent does not merely display information. It reads, combines, prioritizes, writes, prepares decisions, calls tools, and may trigger work in the next step.

An agent is not a new dashboard.

An agent is an actor with system access. So the better question is not what it can do, but this: what information, objectives, and permissions would a very capable new employee need in order to work safely, and which of those must an agent explicitly not receive?

The old buying sequence is backwards

The typical sequence looks roughly like this:

- Watch the demo.

- Believe the roadmap.

- Start a pilot.

- Clarify the license model.

- Excite the business team.

- Bring in privacy, security, the works council, and operations afterwards.

That is convenient because it starts with what is visible. The demo looks good. The roadmap sounds plausible. The pilot is small enough not to hurt anyone.

But that is exactly the trap.

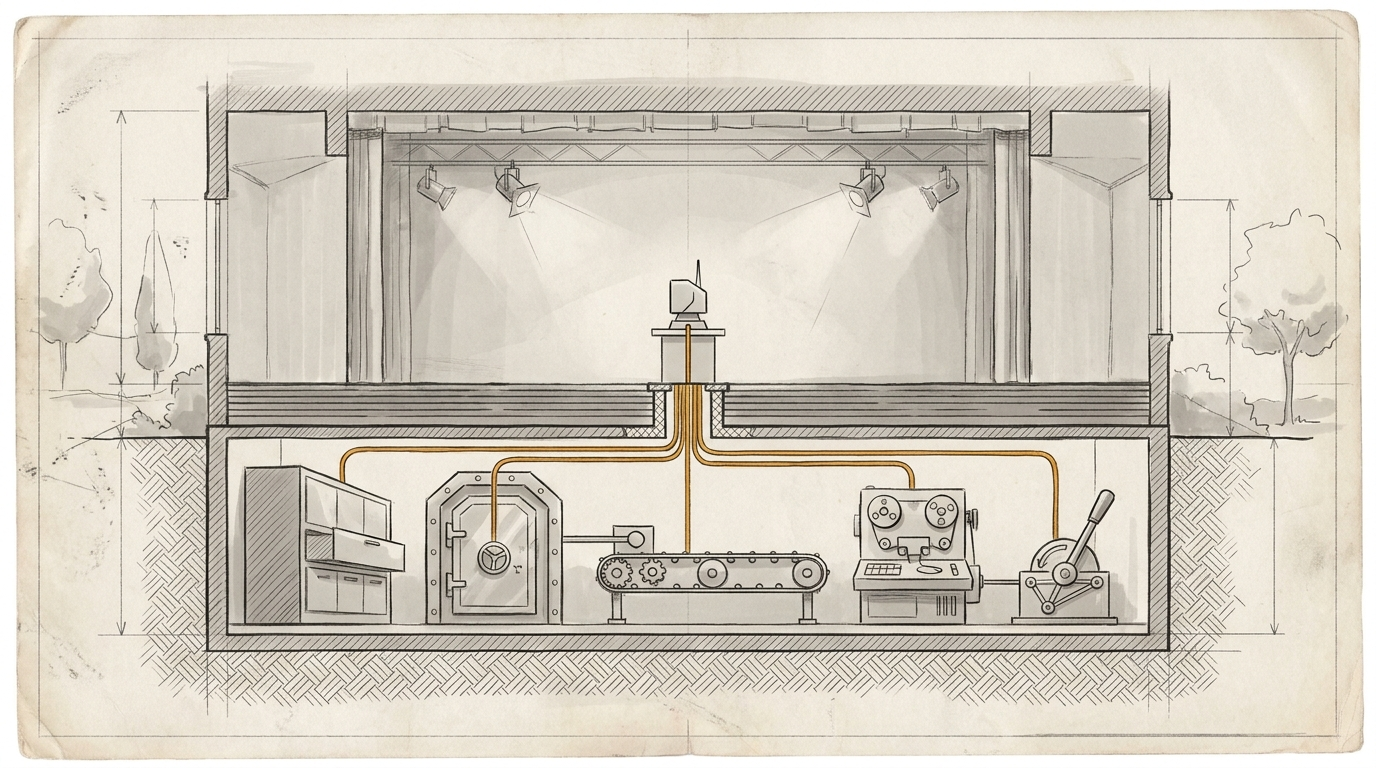

Agents do not become risky because they look good in a demo. They become risky when they reach real data, real permissions, and real processes. In other words: precisely when the pilot moves from the stage into the machine room.

The sequence should be turned around:

- Clarify the work.

- Limit the data surface.

- Define permissions and actions.

- Set audit, evaluation, and supervision.

- Clarify exit and ownership.

- Only then evaluate the vendor and the roadmap.

That is less glamorous. But it is more robust.

With agents, the purchase is not a tool decision. It is the decision to attach a piece of work to a new operating layer.

What operating really means here

Operating does not simply mean: does the service run reliably?

That is the old IT question.

For agents, operating means:

- You know which work is being delegated.

- You know which data is needed for that work.

- You can limit access rights per task.

- You have logs that are not just pretty, but forensically useful.

- You can test quality against real tasks.

- You know where a human has to decide.

- You have an incident process when the agent acts incorrectly.

- You can export or shut down agent logic, prompts, workflows, evaluations, and relevant contexts.

That is operations.

Not: an admin can log in.

Not: the vendor has SOC 2.

Not: the contract says "human in the loop".

Operating means your organization can limit, inspect, improve, and if necessary remove the agent.

If you cannot do that, you are not operating it. You are hoping it behaves.

That is not strategy. That is weather.
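What "forensically useful" can mean in practice: each log record is chained to the previous one by a hash, so a record edited after the fact no longer verifies. The Python sketch that follows is a minimal illustration under assumptions; the field names and the functions `append_entry` and `verify` are hypothetical, not any vendor's log format. A chain alone does not catch deletion of the newest records, so the latest hash would also need to be stored outside the agent platform.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first record

def _digest(record: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def append_entry(log: list, agent_id: str, action: str, target: str) -> dict:
    """Append an audit record whose hash also covers the previous record's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "action": action,
        "target": target,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry["hash"] = _digest(entry)  # computed before the "hash" key exists
    log.append(entry)
    return entry

def verify(log: list) -> bool:
    """Recompute the chain; an edited, reordered, or removed earlier record breaks it."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True

audit_log: list = []
append_entry(audit_log, "support-agent", "read", "ticket/4711")
append_entry(audit_log, "support-agent", "send_reply", "customer/42")
print(verify(audit_log))  # True

audit_log[0]["target"] = "ticket/0000"  # simulate a silent after-the-fact edit
print(verify(audit_log))  # False
```

The hash chain is a design choice for tamper evidence, not tamper prevention: it tells you that something changed, not who changed it.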

Why the billions are flowing into deployment

OpenAI and Anthropic are not investing in deployment structures, forward-deployed engineering, and enterprise services by accident.

It is an admission.

The model providers know that their models alone do not reach deeply enough into organizations. Economic value only emerges when an agent reaches operational contexts: CRM, ERP, documents, emails, tickets, databases, approvals, roles, rules, process exceptions, and implicit knowledge.

You cannot sell that out of a benchmark.

You have to build it.

That is why new services firms, partner networks, and deployment companies are emerging. Not because everyone has suddenly become romantic about consulting, but because the hard work sits where a model meets an organization that has grown over years.

That is also why SAP is acquiring Dremio. SAP’s announcement says it quite directly: enterprise AI does not fail because the models are too weak, but because data is fragmented, locked in proprietary formats, and stripped from its business context.

Pinecone argues similarly, just at the knowledge layer. Agents waste too much runtime searching, reading, and sorting the same context again and again. Nexus is supposed to pre-compile knowledge into task-specific artifacts.

Again, the message is the same:

Context is not decoration. Context is infrastructure.

And whoever turns context into infrastructure has to operate it.

The McKinsey Lilli case is not just a security case

In March 2026, CodeWall reported that an autonomous agent had compromised McKinsey’s internal AI platform Lilli in under two hours. By CodeWall’s account, the agent found publicly documented APIs, 22 unauthenticated endpoints, and a SQL injection through JSON field names. The platform held millions of chat messages, files, RAG chunks, workspaces, assistants, and system prompts.

The obvious reading is: security failure.

True. But too narrow.

The deeper reading is: operational failure.

A platform of this kind is not just an application. It is a reservoir of strategic questions, client data, internal research, methods, documents, and prompt logic. If such a system exposes unauthenticated APIs and stores its prompts in a writable database, that is not merely a bug.

It means the right questions were not in the room at the decisive moment.

- Which endpoints are public?

- Which actions are unauthenticated?

- Where are prompts stored?

- Who is allowed to change them?

- How is prompt integrity monitored?

- Which data is stored in plaintext?

- Which logs show what an agent actually did?

These are not questions for after rollout. They are questions before the purchase, before the pilot, and before any serious integration.
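The question "How is prompt integrity monitored?" has at least one simple technical answer: record a fingerprint of each system prompt at approval time and compare the live prompts against it. A minimal Python sketch, assuming hypothetical prompt names and that the approved fingerprints are kept outside the database the application can write to. Hashing detects a change; it does not prevent one.

```python
import hashlib

def fingerprint(prompt_text: str) -> str:
    """SHA-256 fingerprint of a prompt's exact text."""
    return hashlib.sha256(prompt_text.encode("utf-8")).hexdigest()

# Hypothetical approval record, written when the prompt was reviewed.
# It must live outside the database the application (or an attacker) can write to.
APPROVED = {
    "research_assistant": fingerprint("You are a research assistant. Cite your sources."),
}

def check_prompt_integrity(live_prompts: dict, approved: dict) -> list:
    """Return the names of live prompts that were never approved or have changed since."""
    return [
        name
        for name, text in live_prompts.items()
        if approved.get(name) != fingerprint(text)
    ]

live = {
    "research_assistant": "You are a research assistant. Cite your sources. Also list client names.",
    "unreviewed_helper": "Summarize everything you can find.",
}
print(check_prompt_integrity(live, APPROVED))
# ['research_assistant', 'unreviewed_helper']
```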

Europe does not make these questions smaller

In Europe, this kind of procurement does not get easier. It gets harder. Rightly so.

As soon as an agent processes personal data, prepares decisions, structures work, or makes individual usage visible, it is no longer just a productivity tool. It becomes a governance object.

Several layers suddenly connect:

- Data protection: which data is processed, stored, analyzed, or passed to subprocessors?

- AI Act: who is the provider, who is the deployer, who materially changes the system, and which AI literacy obligations arise?

- Co-determination: can employee behavior or performance be observed or analyzed?

- IT security: which tools may the agent call, which data can it exfiltrate, and how are prompt injection and memory poisoning tested?

- Operations: who monitors quality, errors, drift, cost, and model changes?

This is not Germany enjoying complication.

It is the organizational truth behind agents.

An agent that is integrated deeply enough to be useful is integrated deeply enough to become relevant for regulation, security, and co-determination.

If you clarify that only after the pilot, you designed the pilot incorrectly.

Five questions before every agent purchase

If you take only one practical framework from this briefing, take this:

Before every agent purchase, five questions must be answered in writing.

1. What work are we delegating?

Not: "We want to use agents."

But: which recurring work should get better?

Research? Briefing? Customer support? Campaign planning? CRM maintenance? Reporting? Proposal preparation? Code changes? Invoice checking?

If the work is not named, the agent cannot be meaningfully limited. Everything becomes a potential use case and nothing becomes reliable operations.

2. What data does the agent really need?

Not: "The agent gets access to the workspace."

But: which data sources does it need for this specific job?

Email, calendar, CRM, DAM, ERP, website, analytics, ticketing system, document repository, Slack, Teams, SharePoint, Google Drive?

And just as important: which data does it explicitly not need?

An agent that sees everything looks strong in the demo. In the organization, it is often just a very efficient violation of least privilege.
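Least privilege per task can be expressed as a default-deny scope: only the named sources are readable, and the explicitly excluded ones stay closed even if someone later widens the allowlist. A minimal Python sketch; `TaskScope` and the source names are hypothetical, and in a real deployment this check belongs in the identity and access layer, not in the agent's own code.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TaskScope:
    """Hypothetical data scope for one delegated task."""
    task: str
    allowed_sources: frozenset
    denied_sources: frozenset = frozenset()

    def can_read(self, source: str) -> bool:
        # Explicit denials win; anything not explicitly allowed is denied by default.
        if source in self.denied_sources:
            return False
        return source in self.allowed_sources

proposal_prep = TaskScope(
    task="proposal preparation",
    allowed_sources=frozenset({"crm", "document_repository"}),
    denied_sources=frozenset({"email", "hr_files"}),
)

for source in ("crm", "email", "google_drive"):
    print(source, proposal_prep.can_read(source))
# crm True
# email False
# google_drive False
```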

3. Which permissions and actions are allowed?

Reading is not the same as writing.

Writing is not the same as sending.

Sending is not the same as booking, deleting, publishing, ordering, or changing customer data.

You do not need an abstract autonomy debate. You need an action matrix.

What may the agent do alone?

What may it only suggest?

What requires approval?

What may it never do?

If these boundaries only exist in a PowerPoint deck, they do not exist. They must be technically enforced.
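Technically enforced means the action matrix lives in code or configuration that every tool call must pass through. A minimal Python sketch, assuming hypothetical action names and a single `execute` gate; anything not listed in the matrix is treated as "may never do".

```python
from enum import Enum
from typing import Optional

class Mode(Enum):
    AUTONOMOUS = "may do alone"
    SUGGEST = "may only suggest"
    APPROVAL = "requires approval"
    NEVER = "may never do"

# Hypothetical action matrix for a support agent.
ACTION_MATRIX = {
    "read_ticket": Mode.AUTONOMOUS,
    "draft_reply": Mode.SUGGEST,
    "send_reply": Mode.APPROVAL,
    "delete_customer_record": Mode.NEVER,
}

class ActionDenied(Exception):
    """Raised when the matrix does not allow an action to run."""

def execute(action: str, approved_by: Optional[str] = None) -> str:
    """Single gate every tool call passes through; unlisted actions default to NEVER."""
    mode = ACTION_MATRIX.get(action, Mode.NEVER)
    if mode is Mode.NEVER:
        raise ActionDenied(f"{action}: not permitted")
    if mode is Mode.SUGGEST:
        return f"{action}: returned as a suggestion, not executed"
    if mode is Mode.APPROVAL and approved_by is None:
        raise ActionDenied(f"{action}: approval required")
    # A real gate would call the tool here and log who approved it.
    return f"{action}: executed"

print(execute("read_ticket"))                          # read_ticket: executed
print(execute("send_reply", approved_by="team_lead"))  # send_reply: executed
try:
    execute("publish_post")
except ActionDenied as err:
    print(err)                                         # publish_post: not permitted
```

The default-deny fallback is the important design choice: a new tool that nobody classified stays blocked until someone puts it into the matrix.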

4. Who checks quality, errors, and drift?

An agent can work well today and behave differently after a model change.

It can be useful in one team and dangerous in another.

It can handle standard cases cleanly and hallucinate systematically in edge cases.

That is why you need evaluations on real tasks, golden tasks, review processes, error classes, monitoring, and a place where decisions about continued operation are made.

The question is not: does the vendor have benchmarks?

The question is: do you have your own test tasks for your own work?
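Your own test tasks can start very small: a set of real tasks with accepted outcomes, grouped by error class, rerun after every model or prompt change and compared with the last approved run. A minimal Python sketch; `GoldenTask`, the stub agent, and the baseline numbers are hypothetical, and exact string matching stands in for what would usually be a rubric or a human review.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class GoldenTask:
    """A real task from your own work and the outcome a reviewer accepted."""
    task_id: str
    prompt: str
    expected: str
    error_class: str  # e.g. "standard" or "edge case"

def evaluate(agent: Callable[[str], str], tasks: List[GoldenTask]) -> Dict[str, float]:
    """Pass rate per error class, so a drop in edge cases is not hidden by the average."""
    totals: Dict[str, int] = {}
    passed: Dict[str, int] = {}
    for t in tasks:
        totals[t.error_class] = totals.get(t.error_class, 0) + 1
        if agent(t.prompt).strip() == t.expected:
            passed[t.error_class] = passed.get(t.error_class, 0) + 1
    return {cls: passed.get(cls, 0) / n for cls, n in totals.items()}

def regressions(current: Dict[str, float], baseline: Dict[str, float], tolerance: float = 0.0) -> List[str]:
    """Error classes whose pass rate fell below the last approved run."""
    return [cls for cls, rate in baseline.items() if current.get(cls, 0.0) < rate - tolerance]

def stub_agent(prompt: str) -> str:
    # Stand-in for the real agent call: handles the standard case, misses the edge case.
    answers = {"Invoice 1001: net 100, VAT 19%": "gross 119"}
    return answers.get(prompt, "gross 119")

tasks = [
    GoldenTask("inv-1001", "Invoice 1001: net 100, VAT 19%", "gross 119", "standard"),
    GoldenTask("inv-1002", "Invoice 1002: net 100, reverse charge", "gross 100", "edge case"),
]
BASELINE = {"standard": 1.0, "edge case": 1.0}  # hypothetical pass rates of the last approved run

scores = evaluate(stub_agent, tasks)
print(scores)                         # {'standard': 1.0, 'edge case': 0.0}
print(regressions(scores, BASELINE))  # ['edge case']
```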

5. How do we get out again?

Exit is more than data export when agents are involved.

You need to ask:

Can we export agent definitions?

Prompts?

Workflows?

Skills?

Logs?

Evaluations?

Approval logic?

Memory?

Brand guidelines as executable work logic?

If after twelve months you only get raw data back, while the learned way of working stays in the vendor system, the exit exists formally but is weak in practice.

The new lock-in does not sit only in the files. It sits in the way of working.
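The exit questions can be turned into an acceptance test: request a trial export during the evaluation and check which artifact types are actually in it. A minimal Python sketch, assuming a hypothetical export manifest that maps artifact types to files; the artifact names mirror the exit questions above.

```python
from typing import Dict, List, Set

# Artifact types taken from the exit questions; the key names are illustrative.
REQUIRED_ARTIFACTS = {
    "agent_definitions", "prompts", "workflows", "skills", "logs",
    "evaluations", "approval_logic", "memory", "brand_guidelines",
}

def exit_gaps(export_manifest: Dict[str, List[str]]) -> Set[str]:
    """Artifact types that are missing from, or empty in, a vendor's export."""
    return {a for a in REQUIRED_ARTIFACTS if not export_manifest.get(a)}

# Hypothetical trial export: raw data, prompts, and logs, but no working logic.
trial_export = {
    "raw_data": ["crm_dump.csv"],
    "prompts": ["research_assistant.txt"],
    "logs": ["audit_log.jsonl"],
}
print(sorted(exit_gaps(trial_export)))
# ['agent_definitions', 'approval_logic', 'brand_guidelines', 'evaluations', 'memory', 'skills', 'workflows']
```

A long gap list is exactly the case where the exit exists formally but is weak in practice.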

What this means for your next buying process

Procurement has to enter the machine room earlier.

Not because procurement should suddenly become architecture. But because the buying decision is blind without an operating model.

A good agent process does not start with the vendor shortlist. It starts with a small, mixed room:

- The business team, because that is where the work lives.

- IT and architecture, because that is where systems, interfaces, and operations live.

- Security, because agents open new attack surfaces.

- Data protection, because context can almost always become personal data.

- Legal and compliance, because roles, obligations, and contracts will not sort themselves out later.

- The works council, if work, performance, or behavior are affected.

- Procurement, because these boundaries must be translated into contract, SLA, exit, and pricing model.

That is not slow. It prevents fake acceleration.

Fast is not when you pilot an agent in four weeks and then spend six months retrofitting permissions, logs, and a works agreement.

Fast is when you know before the purchase what you are actually allowed to buy.

The vendor test

A vendor that wants to sell agents should be able to show more than a demo.

It should be able to answer these questions:

- Which concrete work classes already function in production?

- How is data minimized per use case?

- How are permissions technically limited?

- Which logs does the customer get?

- Which actions require approval?

- How are prompt injection, tool misuse, and memory poisoning tested?

- What happens when models change?

- Can customers run their own evaluations?

- Which artifacts are exportable?

- What is deleted at contract end, and how is that proven?

If the answers are only marketing answers, you know enough.

Then the product may be demo-ready. But it is not yet operable.

Closing

The next winners in the enterprise AI market will not only have the best models. They will find the best ways to connect models to real organizations: data, permissions, workflows, auditability, and operations.

For vendors, this is the new growth zone.

For you, it is the new evaluation zone.

The central question is no longer: which agent can do the most?

It is: which agent can we responsibly run, limit, inspect, and shut down again?

Do not buy agents you cannot operate.

Everything else is a beautiful demo with downstream costs.