The New Lock-In Lives in Memory

Most companies still discuss vendor lock-in as if we were in 2018. In that version, the debate is about APIs, file formats, export functions, contract terms, and the usual procurement folklore. None of that is wrong. It is just incomplete.

Practical test for this essay

Three templates for a conversation with an AI: clarify the goal, check contradictions, test whether the work can be delegated. You do not get a finished strategy or a tool recommendation.

Open the templates

This essay does three things.

- It shifts the debate from models and interfaces to the real new question of power: who holds your agents' memory.

- It shows why, in Germany in particular, this is not just a technical issue but an organizational and regulatory one.

- It does not stop at diagnosis. It ends with four concrete questions you can use to test your own AI architecture.

By the end, you should see three things more clearly than before: why open standards are not enough on their own, how the next AI dependency differs from classic software lock-in, and which decisions you should make now before convenience hardens into infrastructure.

The defining question of the next few years is no longer just: Can we take our data with us?

It is: Can we take our agents' memory and our employees' routines with us?

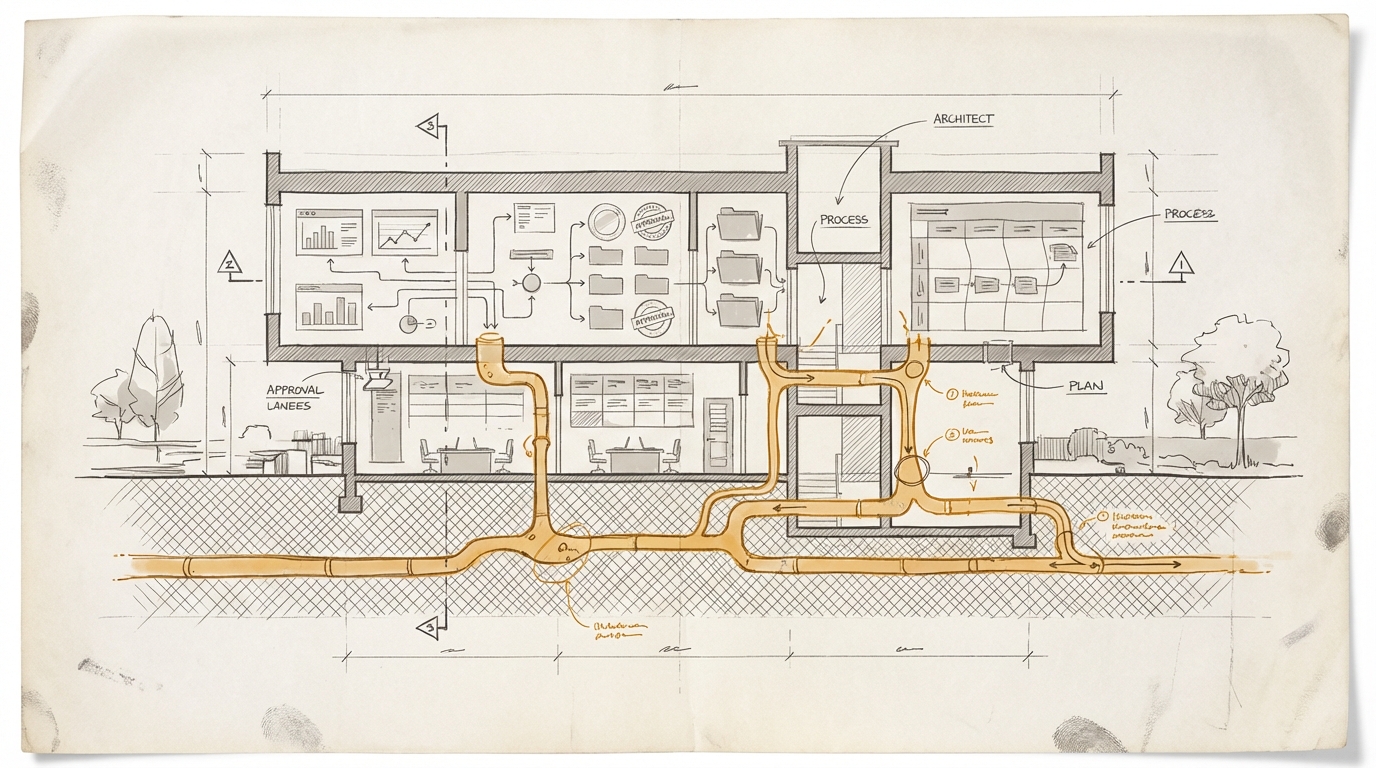

That sounds abstract at first. It is not. As soon as an agent is not just answering on demand, but sits inside real workflows for weeks and months, something starts to accumulate. Preferences. Approvals. Exceptions. Sequences. Quiet shortcuts. Knowledge about how your team actually works, not how the process manual says it should work. Your AI agents learn. They remember processes, exceptions, and parts of the operating reality around them.

And that is where the new form of lock-in begins.

Not in the model. In the memory.

The Wrong Debate

Open standards like MCP are useful. They standardize how agents talk to tools. That matters. If you want to connect systems, you need exactly those protocols.

But this quickly turns into a convenient self-soothing story. Open standard, problem solved. No walled garden. Everything portable.

This is the same mistake we have seen in other technology waves. An open protocol does not automatically prevent economic power from shifting into the layer above it. The lower layer can be open while the real pull sits at the surface: in the default, in the ecosystem, in distribution, in habit.

The web is open. Market power still clustered around a few browsers, search engines, and platforms. In the Google case, the US Department of Justice argues very directly that default positions are incredibly valuable and that users rarely switch away from them. The default is not the whole market. But it is often the place where habit begins.

MCP answers one question: How does an agent reach a system?

MCP does not answer the more important one: Who owns the context that accumulates through that work?

The plug is open. The habit is not.

What Is Different This Time

Software lock-in used to be painful, but visible. Microsoft held your files. Salesforce held your customer data. Slack held your communication history. SAP held your process logic, your customization, and large parts of your operational truth.

Agentic lock-in sits deeper.

When a system learns over months

- which exceptions your team regularly makes,

- who really needs which approval,

- which internal formulations work,

- which quality patterns get accepted or rejected,

- which workflows run well in the morning and routinely stall in the afternoon,

then it is no longer just storing data. It is storing behavior-shaped operating experience.

That is the real asset. Not the file. Not the message. Not the connector. But the condensed model of how you work.

And that is hard to port.

If ERP systems tied companies to their processes, agent systems will tie them to their lived routines. SAP held your master data. The next platform wants to hold how your organization actually works.

Why Open Protocols Are Not Enough

The fair counterargument is obvious: If tool access is open and memory is cleanly separated, this dependency can be limited.

That is true. Technically, it is possible. Microsoft now argues rather explicitly for user-scoped persistent memory, meaning memory should not quietly disappear inside the runtime but be treated as its own controlled layer. The important point is not only the product, but the framing: in enterprise settings, persistence is not just convenience. It is a question of privacy, data isolation, governance, and compliance.

There are practical counterdesigns too. Ideas like Open Brain describe exactly this pattern: your own memory layer, connected through MCP, usable across different clients and models. The architecture is sound. Memory should travel. It should not stick to the tool.
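To make the pattern concrete, here is a minimal Python sketch of a memory layer that the organization owns instead of the tool. Everything in it is hypothetical: `MemoryEntry`, `OrgMemoryStore`, and the scope and kind labels are illustrative names, not the API of Open Brain, MCP, or any Microsoft product. The sketch shows three properties: entries are scoped per user or team, each entry records whether it is documented or only learned, and the whole store round-trips through plain JSON so it can be carried to a different client or model.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class MemoryEntry:
    """One piece of learned operating context, owned by the organization (hypothetical schema)."""
    scope: str               # user or team ID; keeps memories isolated per scope
    kind: str                # e.g. "approval", "exception", "preference"
    content: str             # the learned rule, in plain language
    documented: bool = False  # True once a human has written it down and approved it
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class OrgMemoryStore:
    """Hypothetical memory layer that lives outside any single agent vendor."""

    def __init__(self) -> None:
        self._entries: list[MemoryEntry] = []

    def remember(self, entry: MemoryEntry) -> None:
        self._entries.append(entry)

    def recall(self, scope: str, kind: str | None = None) -> list[MemoryEntry]:
        # Return only entries for the requesting scope (data isolation),
        # optionally filtered by kind.
        return [
            e for e in self._entries
            if e.scope == scope and (kind is None or e.kind == kind)
        ]

    def export_json(self) -> str:
        # Plain JSON: any client or model can read it; no vendor format involved.
        return json.dumps([asdict(e) for e in self._entries], indent=2)

    @classmethod
    def import_json(cls, data: str) -> OrgMemoryStore:
        # Rebuild a store from an export, e.g. after switching runtimes.
        store = cls()
        for raw in json.loads(data):
            store.remember(MemoryEntry(**raw))
        return store


if __name__ == "__main__":
    store = OrgMemoryStore()
    store.remember(MemoryEntry("finance", "approval",
                               "The CFO wants to review these requests once more."))
    store.remember(MemoryEntry("sales", "exception",
                               "Sales quietly accepts this exception; not yet documented."))
    # Switching vendors: export from one runtime, import into another.
    restored = OrgMemoryStore.import_json(store.export_json())
    print(len(restored.recall("finance")))
```

In a real deployment such a store would presumably sit behind an MCP server so that any agent could read and write it; that wiring is deliberately left out here. The `documented` flag is the interesting design choice: it separates what the organization has written down from what a system has merely learned.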

That is the good news.

The bad news is that good architecture does not automatically beat convenience. Or enterprise procurement.

Daily operations almost always prefer the polished system that is simply there. The product that gives you marketplace, admin UI, standard integrations, billing, permissions, and support from a single source. The tool that is not only connectable but quickly feels like home. Or as people used to say: "Nobody ever got fired for buying IBM."

That is why the pure protocol debate is too narrow. The decisive level is not only interoperability. It is operational proximity.

Everyone Is Building the Same Layer

With enterprise agents, plugins, and a marketplace, Anthropic is pointing toward a world in which Claude does not just answer but grows into the active work context. One detail matters in particular: inside the Claude Marketplace, companies can apply their existing Anthropic commitments to partner solutions. That is not a technical detail. That is distribution, procurement, and lock-in logic in one sentence.

OpenAI mirrors this with its talk of a unified operating layer and an AI superapp for enterprises. Microsoft uses more governance-heavy language, but aims at the same layer in which individual model calls become an operational system.

The vendors are no longer only competing over which model writes a little better or thinks a little cheaper.

They are competing to become the operating layer of knowledge work.

That is the strategic shift.

The Real Risk Is Operational Amnesia

For decision-makers, the problem is not that switching becomes technically impossible.

The problem is more subtle. A switch can remain possible on paper and still be unattractive in practice because it produces operational amnesia.

Imagine you are not only replacing a tool, but a system that now knows

- which requests your CFO always wants to review once more,

- which customer cases get escalated immediately,

- which tables Controlling trusts,

- which formulations trigger Legal,

- which exceptions Sales quietly accepts although nobody has documented them properly.

In many organizations, that knowledge already exists today in diffuse form. It lives in heads, chats, habits, and the quiet margins of processes. Agents can, for the first time, collect that layer, condense it, and make it operational in daily work.

That makes them useful.

It also makes them dangerous if ownership remains unresolved.

Because then the loss in a switch is not just friction. It is the loss of accumulated operational intelligence.

You are back to a brilliant stranger.

The German Version of the Problem

In the German management debate, AI dependency is often framed as a procurement or data protection issue. Which vendor, which server location, which clause, which compliance layer?

All legitimate. Not enough for this issue.

The real German question should be this:

Which parts of our agentic memory may remain merely learned, and which parts must belong to us as an organization?

That is not a technical subtlety. It is governance.

If approval logic, preferences, and work routines function only because a vendor carries them for you, then you did not just buy software. You started outsourcing parts of your operating model.

For DACH companies, this is particularly sensitive because processes, co-determination, documentation, and accountability already carry a different weight here than they do in many US product narratives. And this time that is not just a cultural footnote. It is embedded in the legal and organizational framework.

As soon as agents do not just answer but also shape routines, approvals, evaluations, and implicit selection patterns, the issue automatically lands in the co-determination and governance arena.

It belongs on the desk of the COO, CIO, General Counsel, and works council.

The Fair Counterposition

This thesis can also be overstated. Not every integrated memory mechanism is automatically bad lock-in. Sometimes the integrated solution is simply the better one. Not every organization needs its own memory backbone. Not every team wants to become the operator of its own context infrastructure.

And it is also true that open standards and portable memory layers can soften the hardest version of the problem. That is not just hopeful thinking. It is genuinely buildable.

That is exactly why the stronger version of the thesis is different:

Open standards do not prevent market power from moving into the layer above them.

That is the point.

Not: portability is impossible.

But: portability alone does not protect you from pull.

What To Do Now

The obvious management reaction would be to delegate this to architecture. Please check how we can separate memory cleanly. Please add exit clauses. Please plan for vendor neutrality.

All correct. All too little.

What is more useful is a simple four-question audit:

- Which routines in our AI workflows are documented, and which are only learned?

- Which preferences and approvals already live de facto inside a tool?

- What could we export technically but still not restore operationally right away?

- Where are we buying convenience today that could become operational dependency tomorrow?

This is not a call for paranoia. It is an attempt to recognize a new infrastructure problem for what it is, while there is still time.

Because that is what it is. An infrastructure problem. Just no longer at the level of servers, APIs, and file formats. But at the level of habit, context, and organizational memory.

The last wave of platform power controlled data, distribution, and defaults.

The next one controls memory as well.

And where agents do not just work, but remember, the real strategic question begins:

Who owns your organization's memory when more and more work runs through systems that learn?

Maybe that is the most useful formulation for the next few months: Do not just evaluate which model you are buying. Evaluate where you are currently beginning to park your operational memory.

A tool can be replaced.

An outsourced memory much less easily.

The thinking tools for this piece help you audit your own AI operating layer more systematically. The Memory Ownership Audit, the Exit Cost Simulator, and the Governance Boundary Check are three prompts you can apply directly to your organization.