Executive Briefing: The Best Agent Is the Wrong Question

Copilot, Claude Cowork, Codex, Gemini, Salesforce Agentforce, and WPP Open are not versions of the same thing. They fit different kinds of work, data environments, interfaces, and risks. If you want to judge them properly, you first need to understand the problem you are trying to solve.

Copilot is not Claude Cowork. Claude Cowork is not Codex. Codex is not Gemini. Gemini is not Salesforce Agentforce. And WPP Open is something else again.

Still, many companies are currently putting all of these products into the same mental drawer: AI agents.

That sounds practical. It is too crude.

An agent that summarizes a text is a tool. An agent that reads your emails, evaluates CRM data, writes briefings, plans campaigns, creates files, and maybe even triggers actions is something else.

It is a piece of organizational architecture.

That is why the most popular question is usually wrong. It asks: which agent is the best?

The better question is: which agent fits which work, which data, and which constraints?

There is no one right agent. There are only better evaluation questions.

The US filter is useful. Just not sufficient.

Nate Jones recently formulated a useful filter for agent launches. In essence, he asks: does the product connect to tools my team already uses? Can other agents build on top of it? Does it have access to relevant data? Is an ecosystem forming? Can agents be stacked on it?

These questions are useful because they move the debate away from model comparison.

The question is no longer whether Claude, GPT, Gemini, or Kimi is three points ahead in some benchmark. The question is which product can reach the data, permissions, and workflows where your work actually happens.

A mediocre agent with access to real customer data can be more useful than a very good model staring at an empty wall. Organizations rarely function through model quality alone. They function through context, permissions, routines, and access.

Nate’s filter separates features from infrastructure. An agent that only works in its own surface is a product. An agent that other systems can use, that inherits permissions and reaches workflows, becomes infrastructure.

So far, so right.

The European catch is this: once an agent becomes infrastructure, it also becomes governance. And very often: co-determination.

Six agent types, six different questions

When an executive asks, "Which agent do we need?", my counter-question would not be, "How large is your budget?"

My counter-question would be: which problem are you trying to solve?

Because Copilot, Claude Cowork, Codex, Gemini, Salesforce Agentforce, and WPP Open sit in different parts of the system.

If the work lives in the Microsoft Graph: look at Copilot

If your work mostly happens in Outlook, Teams, SharePoint, Excel, PowerPoint, and M365 documents, Copilot is not just another chatbot. Its decisive advantage is access to organizational context.

The evaluation question is not: is Copilot the best model?

It is: is our problem so deeply anchored in the M365 context that native data access matters more than maximum model control?

If yes, Copilot is often a more obvious choice than a generic agent with connectors bolted on afterwards.

If the work is model-centered, open, and skill-based: look at Claude

Claude and Claude Cowork are stronger when the work is less tied to a single enterprise graph and more tied to reasoning, writing, analysis, coding, or reusable skill patterns.

The evaluation question is: do we mainly need a strong reasoning and working model that we can shape ourselves, or an agent already deeply embedded in a specific enterprise system?

If you want to build, test, and iterate your own work patterns, that is a different decision from "we want an agent to work directly inside the CRM".

If the work depends on interfaces: look at Codex and Computer Use

Many companies have systems built for humans and poorly accessible to agents.

No clean API. No MCP server. Just a graphical interface, a browser window, an old back office, a portal, or some line-of-business application that has worked for years and was therefore never properly integrated.

This is where Codex, Computer Use, and desktop agents become interesting. Not because the interface is the elegant route. APIs are more stable. But many real business processes still live in forms, upload fields, download buttons, and portals that humans click through by hand.

The evaluation question is: does the agent need to operate a system through an interface because there is no better integration?

If yes, it becomes a bridge technology for messy reality. But precisely because of that, it needs tighter boundaries: sandboxing, recording, approvals, test data, small action spaces, and clear stop points.

If the work lives in the Google stack: look at Gemini and Opal

Not every company lives in Microsoft.

If your work is strongly tied to Gmail, Google Drive, Docs, Sheets, Slides, Meet, and BigQuery, the same mechanism applies as with Copilot: native access to the work graph can matter more than a marginally better model somewhere else.

Google Opal is also interesting because it lowers the entry barrier as a prompt-based workflow builder. Nate describes Opal as a free on-ramp into agent logic: workflows, dynamic routing, context across sessions, and memory stored in Google Sheets. That is not perfect, but it is remarkably inspectable.

The evaluation question is: does our problem live in the Google workspace, or do we want fast, visible workflow prototypes?

If yes, Google should not be missing from the evaluation. But Google-native workflows do not automatically port to other stacks.

If the work lives in CRM and revenue operations: look at Salesforce

Salesforce Agentforce and the Headless 360 logic matter when the core of your problem lies in CRM data, opportunities, service cases, flows, customer data, pipeline logic, or revenue operations.

Then the chat is not the product. The product is controlled access to objects, workflows, permissions, and business logic inside the CRM.

The evaluation question is: should an agent merely talk about CRM data, or should it perform CRM work with real permissions, logs, and system logic?

If it is the latter, a loosely connected generalist is often not the right starting point.

If the work is about marketing method, brand, and agency service: look at platforms like WPP Open

WPP Open is another category again. It is not just a tool for individual tasks. It is a platform where data, agents, methods, brand logic, agency knowledge, and service models come together.

That can make sense when the problem is not "automate one task" but "connect marketing work across strategy, creative, media, production, and performance".

The evaluation question is: do we need a tool for a task, or are we adopting a working surface where method and operations arrive with it?

When method and operations come with the platform, the decision becomes more strategic. Then it is no longer only about capability. It is about dependency, ownership, and exit.

Disclosure: in my day job, which has no influence on dekodiert.de, I work for VML, an agency in the WPP network.

This is not a ranking

This makes the agent decision less mystical:

- Office and knowledge work graph: evaluate Copilot.

- Reasoning, writing, coding, or skill work: evaluate Claude and similar model agents.

- Old systems without clean interfaces, but with usable screens: evaluate Codex, Computer Use, and desktop agents.

- Google workspace or quickly prototyped workflows: evaluate Gemini and Opal.

- CRM or revenue operations: evaluate Salesforce-native agents.

- Marketing method, brand systems, and agency services: evaluate platforms like WPP Open.

This is not a ranking. It is tool choice.

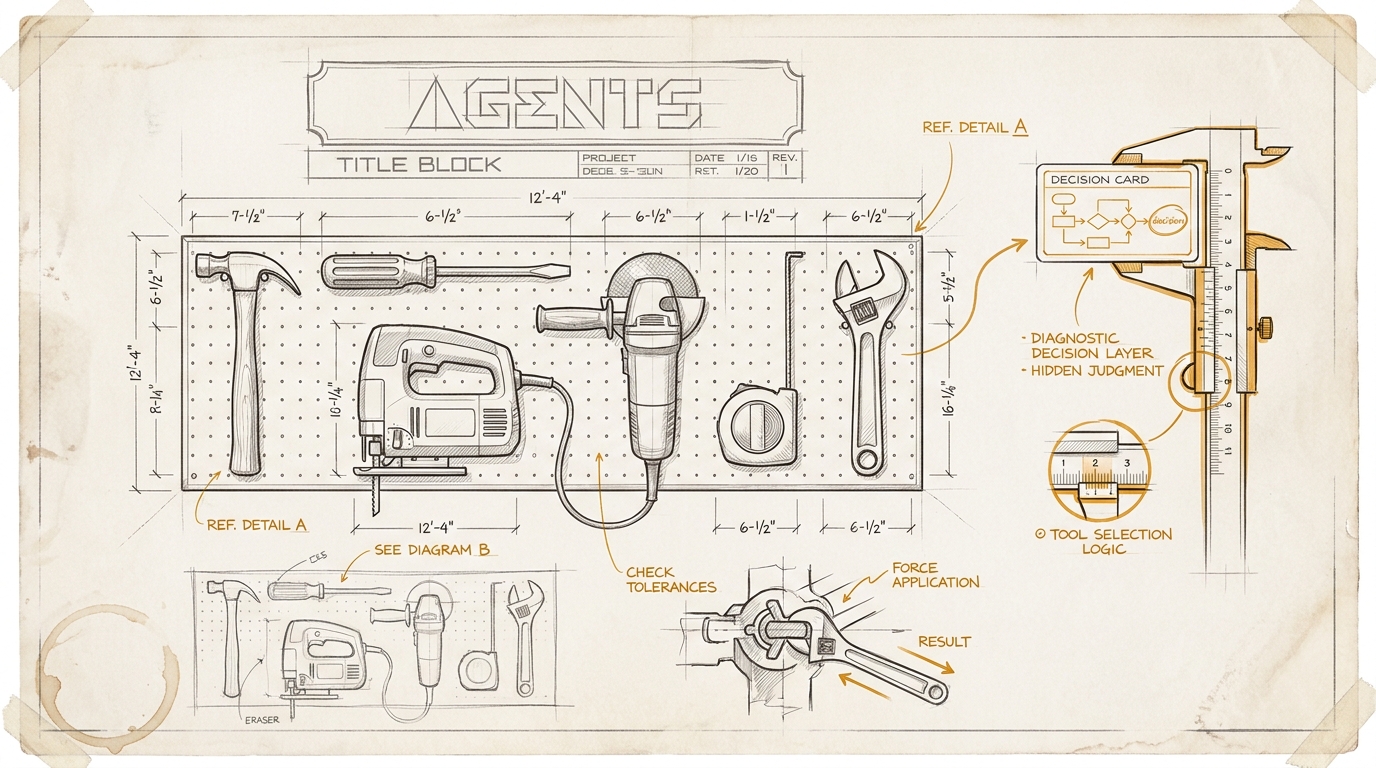

A hammer is not a bad screwdriver. It is a hammer.

Deep integration is never only an advantage

Many AI products are currently selling exactly this depth as the promise.

The agent sees your emails. Your meetings. Your CRM history. Your brand guidelines. Your assets. Your campaigns. Your performance data. Your internal chats. Your documents. Your approval logic.

From a product perspective, that makes sense. An agent without context is an intern wearing a blindfold. Perhaps motivated. Rarely useful.

But in Europe, data access is not just product quality. It is a legal question, a security question, a co-determination question, and an operating model.

Once an agent prepares or executes real work, some very sober questions appear:

- Which data does it really need?

- Does it inherit existing permissions, or does it create shadow permissions?

- Does it store prompts, outputs, files, embeddings, feedback, or memory?

- Which actions is it allowed to perform?

- Who can see the logs?

- Can managers evaluate individual usage, output volume, or quality?

- What happens if the vendor changes the model, the agent logic, or the pricing model?

These are not detail questions for Legal at the end of the pilot. They are part of the decision.

An agent that is deeply integrated enough to be truly useful is almost always deeply integrated enough to cause damage.

WPP Open shows the pattern especially clearly

WPP describes Open as an "agentic marketing platform". The platform is meant to connect marketing work through a shared, secure workspace. WPP teams can use it to deliver services. Client teams can use applications directly. Or both sides can work together in the same environment.

That is strategically plausible. Marketing work is full of handovers, data gaps, media logic, brand guidelines, audience assumptions, production loops, and approvals. If agents are supposed to work there in a meaningful way, they need more than a chat window.

The Agent Hub promises verified, brand-safe agents trained on proprietary data, frameworks, and methods. WPP refers to roughly 30 years of BrandAsset Valuator data and 150 years of WPP creative intelligence.

That is not automatically bad. This is what a serious platform attempt looks like: not a wrapper around a model, but a working layer made of data, method, process, agents, and human expertise.

But precisely for that reason, WPP Open is the right case for the European question.

If an agency platform improves your briefings, shapes your audience logic, operationalizes your brand guidelines, runs your tests, plans your campaigns, and codifies your methods into agents, you are not just buying efficiency.

You are buying part of an operating system for marketing.

Then the decisive question is not: is the tool good?

The question is: does your organization become more capable, or merely more deeply embedded in someone else’s operating system?

The new lock-in lives in the working method

Vendor lock-in used to be relatively easy to describe.

Data export. Interfaces. Contract duration. Training cost. Migration. Annoying, but at least visible.

With agents, it gets more uncomfortable.

The new lock-in does not only live in files or APIs. It lives in codified workflows, prompt logic, approval patterns, agent memory, evaluation data, brand guidelines as executable working logic, proprietary methods, and team habits.

That is harder to export than a CSV file.

If a vendor says after twelve months, "Of course you can export your data", that is nice. But it does not answer the actual question.

Can you export the way of working too?

Can you take agent definitions, tests, approvals, logs, memories, prompts, brand rules, and process artifacts with you in a format another vendor or your internal team can reuse?

If not, the exit only exists on paper.

Europe is not simply slower here

In many US debates, regulation looks like interference. In Europe, it is more like a reminder that organizations consist of more than productivity.

The EU AI Act distinguishes between providers and deployers. In Article 4, it requires both to ensure a sufficient level of AI literacy among the people who operate and use AI systems on their behalf. It regulates based on risk and draws particular boundaries where systems touch people, rights, or sensitive areas.

That changes the management question.

If you introduce an agent, you are not simply a buyer. You become an operator, designer, supervisor, and sometimes a co-responsible actor in a system that changes work.

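In Germany, § 87(1) no. 6 of the Works Constitution Act adds another anchor. Works councils have co-determination rights when technical systems are introduced or used that are intended to monitor employee behavior or performance. German labor courts have long read "intended" as "objectively suitable", so the purpose stated by the vendor or the employer does not settle the matter.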

Typical agent products show usage per person, store prompts, log outputs, measure quality, show delegated tasks, and create admin dashboards.

You can call that "adoption analytics".

In Germany, the nice name is not enough.

If a system is suitable for evaluating behavior or performance, it is no longer a pure IT decision. That is not a German quirk. It is a power question with legal text attached.

And frankly, that is not only an obstacle. It forces companies to ask questions they should be asking even without a works council.

Security for agents is not just access control

With classic SaaS, security could often be framed roughly as login, roles, permissions, and data storage.

For agents, that is not enough.

An agent does not only read data. It interprets context, plans intermediate steps, calls tools, and can trigger actions: write emails, change tickets, create files, update CRM fields, prepare campaigns, or send reports.

So the security question shifts: what may the agent do on behalf of the user? Which actions are read-only? Where is approval required? Which tool calls are logged? How is indirect prompt injection prevented? What happens with memory poisoning? Is there a kill switch? Can a run be reconstructed forensically?

OWASP rightly treats agentic AI as its own threat field. The Cloud Security Alliance now writes dedicated guides for agentic AI red teaming. This is not academic luxury. It is what happens when context and agency meet.

An agent with read permissions is a research tool. An agent with write permissions is an actor. An agent with memory, tools, and weak approvals is an actor that learns, acts, and may later be hard to explain.
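The difference between a research tool and an actor can be made concrete as a small policy gate. What follows is a minimal sketch, not a reference design: the action names (`read_crm`, `send_email`, and so on), the `ActionPolicy` class, and the three-way decision are my own illustrative assumptions and do not correspond to any vendor's API. It shows read-only actions, approval-gated write actions, a default deny for everything unlisted, a kill switch, and a log of every decision.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass
class ActionPolicy:
    # Hypothetical action names; a real deployment would map them to tool calls.
    read_only: set = field(default_factory=lambda: {"read_crm", "search_docs"})
    approval_required: set = field(
        default_factory=lambda: {"send_email", "update_crm_field"}
    )
    kill_switch: bool = False
    log: list = field(default_factory=list)

    def decide(self, action: str, approved_by: Optional[str] = None) -> Decision:
        if self.kill_switch:
            # The kill switch overrides everything, including read access.
            decision = Decision.DENY
        elif action in self.read_only:
            decision = Decision.ALLOW
        elif action in self.approval_required:
            # Write actions only pass once a named human has approved them.
            decision = Decision.ALLOW if approved_by else Decision.NEEDS_APPROVAL
        else:
            # Default deny: anything not explicitly listed is not allowed.
            decision = Decision.DENY
        # Every decision is recorded, including denials.
        self.log.append((action, approved_by, decision.value))
        return decision


policy = ActionPolicy()
print(policy.decide("read_crm"))
print(policy.decide("send_email"))
print(policy.decide("send_email", approved_by="j.doe"))
print(policy.decide("change_budget"))
```

The design choice that matters is the last branch. An agent that may do everything not explicitly forbidden is a different system from one that may do only what is explicitly listed.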

The European agent filter

For decision-makers, the filter has to be shorter than the vendor brochure and harder than the demo.

Before any significant agent decision, I would ask five questions.

1. Which concrete work are we delegating?

Not: which AI capability looks most impressive?

But: which recurring work should improve?

Research. Briefing. Campaign planning. CRM maintenance. Reporting. Proposal preparation. Customer support. Internal knowledge search. Approvals.

If the workflow is not named, the agent becomes a projection surface.

2. Which data and permissions does the agent really get?

An agent needs context. But it does not automatically need everything.

The evaluation question is: which data is necessary per use case, which permissions are inherited, which logs are created, and where are prompts, outputs, files, embeddings, and memories stored?

"The agent sees everything your employees see" sounds like an advantage in the sales call. In the security concept, it sounds different.
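In code, the difference between inherited permissions and shadow permissions is two set operations. This is a minimal sketch under simplifying assumptions: permissions are flat labels, and the names (`mailbox`, `crm_accounts`, `finance_reports`, and so on) are invented for illustration. Real permission models are hierarchical and far messier.

```python
def effective_scope(user_permissions: set, use_case_needs: set) -> set:
    """What the agent may touch: needed by the use case AND already visible to the user."""
    return user_permissions & use_case_needs


def shadow_permissions(agent_grants: set, user_permissions: set) -> set:
    """Grants the agent holds that the delegating user does not hold."""
    return agent_grants - user_permissions


# Hypothetical example: a briefing agent acting for one employee.
user = {"mailbox", "crm_accounts", "shared_drive"}
briefing_needs = {"crm_accounts", "shared_drive", "hr_files"}
agent_grants = {"crm_accounts", "shared_drive", "finance_reports"}

print(sorted(effective_scope(user, briefing_needs)))
# ['crm_accounts', 'shared_drive']
print(sorted(shadow_permissions(agent_grants, user)))
# ['finance_reports']
```

The first result is data minimization: the mailbox stays out because the use case does not need it, and the HR files stay out because the user cannot see them. The second result is the list someone has to justify in writing.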

3. Who carries responsibility?

Roles blur with agents. The model provider supplies a base model. The platform provider builds agent logic. The agency codifies method. The client brings data, purpose, and approvals. Departments change workflows.

That can work. But only if it is clear who is the provider, deployer, integrator, operator, and business owner.

"We only use GPT underneath" is not a compliance answer. It is a sign that someone did not understand the question.

4. Which actions are technically limited and auditable?

Marketing promises about "humans at the helm" are not enough.

Can the agent only read, or can it write? Can it publish? Can it contact customers? Can it change budgets? Can it export data? Are approvals required? Are there logs for plans, tool calls, data access, decisions, and outputs?

If an agent can act but cannot provide a usable action history, it is not a production system. It is a very well-packaged risk.
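What a usable action history could look like can be sketched in a few lines. The following is an assumption-laden illustration, not a description of how any product logs: the field names (`run_id`, `kind`, `detail`) and the hash-chaining are my own choices. Chaining each entry to the hash of the previous one is one common way to make later edits detectable.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    # Stable serialization so the same entry always hashes to the same value.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, run_id: str, kind: str, detail: dict) -> dict:
    """Append a plan, tool call, data access, decision, or output to the log."""
    prev_hash = log[-1]["hash"] if log else ""
    body = {"run_id": run_id, "kind": kind, "detail": detail, "prev": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return False if any entry was altered or removed after it was written."""
    prev_hash = ""
    for entry in log:
        body = {k: entry[k] for k in ("run_id", "kind", "detail", "prev")}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


def reconstruct_run(log: list, run_id: str) -> list:
    """Return the ordered steps of one agent run."""
    return [(e["kind"], e["detail"]) for e in log if e["run_id"] == run_id]


log = []
append_entry(log, "run-1", "plan", {"step": "draft report"})
append_entry(log, "run-1", "tool_call", {"tool": "crm.read", "object": "opportunity"})
append_entry(log, "run-2", "output", {"file": "report.pdf"})
print(verify_chain(log))
print(reconstruct_run(log, "run-1"))
```

A log like this answers two questions a vendor should be able to answer as well: what exactly happened in this run, and can we trust that the record was not changed afterwards?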

5. How do we get back out?

Exit for agents is more than data export.

You need to know whether you can take agent definitions, workflows, prompts, skills, tests, evaluation data, logs, memories, and generated assets with you. Above all, you need to know whether your teams can still explain after twelve months how the work actually works, or whether that knowledge quietly lives inside the platform.

With agents, the lock-in is not the file. The lock-in is the learned working method.
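The exit question can be turned into a simple gap report. This is a rough sketch: the artifact list comes from the paragraph above, while the input format (a mapping from artifact to the export format a vendor declares) and the set of "open" formats are my own assumptions for illustration.

```python
EXIT_ARTIFACTS = [
    "agent_definitions", "workflows", "prompts", "skills", "tests",
    "evaluation_data", "logs", "memories", "generated_assets",
]

# Assumption: formats another vendor or an internal team could plausibly reuse.
OPEN_FORMATS = {"json", "yaml", "csv", "markdown"}


def exit_gaps(vendor_export: dict) -> dict:
    """Sort each artifact into reusable, proprietary-only, or missing."""
    report = {"reusable": [], "proprietary": [], "missing": []}
    for artifact in EXIT_ARTIFACTS:
        fmt = vendor_export.get(artifact)
        if fmt is None:
            report["missing"].append(artifact)
        elif fmt.lower() in OPEN_FORMATS:
            report["reusable"].append(artifact)
        else:
            report["proprietary"].append(artifact)
    return report


# Hypothetical vendor answer from a sales call.
answer = {
    "prompts": "markdown",
    "logs": "csv",
    "workflows": "vendor_bundle",
    "generated_assets": "JSON",
}
report = exit_gaps(answer)
print(report["reusable"])     # ['prompts', 'logs', 'generated_assets']
print(report["proprietary"])  # ['workflows']
print(report["missing"])
# ['agent_definitions', 'skills', 'tests', 'evaluation_data', 'memories']
```

In this invented example, "you can export your data" turns out to mean three artifact types out of nine. The missing list is the learned working method.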

What this means for the next vendor meeting

If you evaluate an agent platform in the next few weeks, I would not start the demo with "show us the features".

I would start with five requests:

- Describe the concrete workflow your agent takes over for us.

- Show which data it really needs and which data it must not see.

- Make clear who is responsible for system logic, data processing, customization, and operations.

- Show the technical limits, approvals, and audit logs for each relevant action.

- Explain what we can export after twelve months if we switch.

If a vendor can answer cleanly, the next hour is worth it.

If not, that is also an answer.

The actual point

Agent products are currently being sold as a productivity story.

That is true. But only halfway.

A good agent saves time, connects systems, and makes work more fluid. But it also touches data, rights, habits, responsibilities, and power. It changes who sees work, who evaluates work, who performs work, and who can explain work.

That is why the US filter is not enough.

In Europe, the question must be:

Not only: is this infrastructure?

But: is this infrastructure auditable, co-determined, limited, and reversible?

If there is no answer to that, you are not buying an agent. You are buying loss of control with a nice interface.

Source anchors

- Nate Jones: "The 5-question filter I run every agent launch through", 29 April 2026.

- Nate Jones: "Every AI agent you use has the same hidden weakness", 4 April 2026.

- WPP: "WPP Open: the AI platform for marketing" and "WPP launches Agent Hub on WPP Open", 5 January 2026.

- European Commission: "AI Literacy - Questions & Answers" and "Navigating the AI Act".

- § 87 BetrVG, co-determination rights.

- OWASP GenAI Security Project: "Agentic AI - Threats and Mitigations".

- Cloud Security Alliance: "Agentic AI Red Teaming Guide".